The CPU Strikes Back: Architecting Inference for SLMs on Cisco UCS M7

CPU inference for SLM workloads is the most underserved category in enterprise AI architecture today. In the current AI gold rush, the industry-standard advice has become lazy: “If you want to do AI, buy an NVIDIA H100.”

For training a massive foundation model? Yes. For running GPT-4-scale services? Absolutely, as we covered in our deep dive on H100 infrastructure. But for the 95% of enterprise use cases, such as internal Retrieval-Augmented Generation (RAG) chatbots, log summarization, and edge inference, that advice is architecturally wasteful. It’s like buying a Ferrari to deliver Uber Eats.

The rise of Small Language Models (SLMs) like Llama 3 (8B) and Mistral (7B) has changed the math. These models don’t need massive parallel compute; they need fast matrix math and enough memory bandwidth to stream their weights. And thanks to updates in the silicon you likely already own, your CPU is ready to handle them.

The Secret Weapon Behind CPU Inference SLM Performance: Intel AMX

If you have refreshed your servers in the last 18 months, you probably have Cisco UCS M7 blades or rack servers running 4th or 5th Gen Intel Xeon Scalable processors (Sapphire Rapids / Emerald Rapids).

Buried in the spec sheet of these chips is a feature called Intel AMX (Advanced Matrix Extensions).

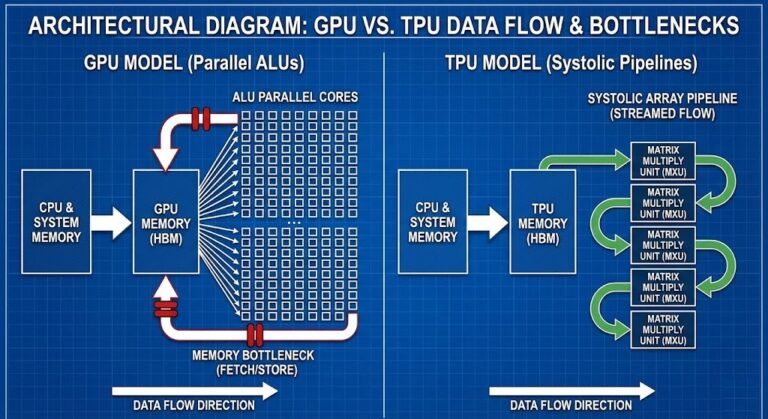

Think of AMX as a “Mini-Tensor Core” built directly into the CPU silicon. Unlike standard AVX-512 instructions, which operate on 1D vectors, AMX operates on 2D tiles held in dedicated tile registers, using BF16 and INT8 data. This lets the CPU crunch the matrix multiplications at the heart of Transformer models significantly faster than previous generations.
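To make the “2D tiles” idea concrete, here is a minimal pure-Python sketch, assuming the INT8 tile shape used by AMX’s INT8 tile instructions (such as TDPBSSD): each multiply-accumulate updates a 16×16 block of INT32 accumulators from a 16×64 INT8 block of A and a 64×16 INT8 block of B. It does not execute AMX instructions and ignores the packed memory layout the hardware expects for B; it only shows how a large matrix multiply decomposes into fixed-size tile operations. The function names are hypothetical.

```python
# Conceptual sketch: AMX-style tiled INT8 matrix multiply in pure Python.
# This does NOT use AMX; it only mirrors how work is split into tiles.

TILE_M, TILE_N, TILE_K = 16, 16, 64  # C tile 16x16 (INT32), A tile 16x64 (INT8), B tile 64x16 (INT8)


def tile_multiply_accumulate(c, a, b, i0, j0, k0, m, n, k):
    """One tile op: C[i0:i0+m, j0:j0+n] += A[i0:i0+m, k0:k0+k] @ B[k0:k0+k, j0:j0+n]."""
    for i in range(i0, i0 + m):
        row_a = a[i]
        for j in range(j0, j0 + n):
            acc = 0
            for kk in range(k0, k0 + k):
                acc += row_a[kk] * b[kk][j]
            c[i][j] += acc  # hardware keeps this running sum in an INT32 accumulator


def tiled_matmul_int8(a, b):
    """Multiply INT8 matrices a (rows x inner) and b (inner x cols) tile by tile."""
    rows, inner, cols = len(a), len(b), len(b[0])
    for row in a + b:
        if any(not -128 <= v <= 127 for v in row):
            raise ValueError("inputs must fit in signed INT8")
    c = [[0] * cols for _ in range(rows)]
    for i0 in range(0, rows, TILE_M):
        for j0 in range(0, cols, TILE_N):
            for k0 in range(0, inner, TILE_K):
                # Edge tiles are simply smaller here; real kernels use tile config or padding.
                m = min(TILE_M, rows - i0)
                n = min(TILE_N, cols - j0)
                k = min(TILE_K, inner - k0)
                tile_multiply_accumulate(c, a, b, i0, j0, k0, m, n, k)
    return c


def naive_matmul(a, b):
    """Reference triple loop for checking the tiled result."""
    return [[sum(a[i][k] * b[k][j] for k in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


# Usage: a 40x130 by 130x20 product needs partial tiles along every dimension.
a = [[(i * 7 + k * 3) % 256 - 128 for k in range(130)] for i in range(40)]
b = [[(k * 5 + j * 11) % 256 - 128 for j in range(20)] for k in range(130)]
assert tiled_matmul_int8(a, b) == naive_matmul(a, b)
```

The tiled and naive results match exactly because integer accumulation is order-independent; the point of tiling on real hardware is that each tile operation is a single instruction over data already sitting in tile registers.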

We aren’t talking about a 10% boost. In our validation of quantized workloads, AMX delivered “human-readable” inference speeds of 20–40 tokens per second on quantized SLMs.

The Physics of Good Enough: CPU vs GPU TCO

Why does this matter for your TCO? Because CPU inference for SLMs isn’t a compromise; it’s about matching the right silicon to the workload. GPUs are expensive, scarce, and power-hungry.

If you are building an internal chatbot for your HR department to query PDF policy documents (a classic RAG workload), you do not need 200 tokens per second. A person reads at roughly 5-10 tokens per second.

- The GPU Approach: 150 tokens/sec. A short (~15-token) sentence appears in about 0.1 seconds. Cost: $30,000 card + 300W power.

- The CPU (AMX) Approach: 35 tokens/sec. The same sentence appears in about 0.5 seconds. Cost: $0 (you already bought the server) + no additional TDP (the CPUs draw more power under load, but within the envelope you already provisioned).
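The comparison becomes clearer when worked through for a full answer rather than a single sentence. A minimal sketch, assuming a hypothetical 300-token RAG answer and a reading speed of 7.5 tokens/sec (the midpoint of the 5-10 range above); it ignores time-to-first-token, which depends on prompt length and the runtime.

```python
def stream_seconds(tokens: int, tokens_per_sec: float) -> float:
    """Time to emit `tokens` at a steady rate (generation or reading); ignores time-to-first-token."""
    return tokens / tokens_per_sec


def outpaces_reader(tokens_per_sec: float, reading_tps: float = 10.0) -> bool:
    """True if generation stays ahead of a reader at the top of the 5-10 tokens/sec range."""
    return tokens_per_sec >= reading_tps


ANSWER_TOKENS = 300  # hypothetical length of a typical RAG answer
READING_TPS = 7.5    # midpoint of the 5-10 tokens/sec reading speed

for label, tps in [("GPU", 150.0), ("CPU (AMX)", 35.0)]:
    generated = stream_seconds(ANSWER_TOKENS, tps)
    read = stream_seconds(ANSWER_TOKENS, READING_TPS)
    print(f"{label}: generated in {generated:.1f}s, read in {read:.1f}s, "
          f"stays ahead of reader: {outpaces_reader(tps)}")
```

Under these assumptions both options finish generating (2.0 s and about 8.6 s) long before the reader finishes (40 s). With streaming output, the user is reading the first tokens while the rest are still being produced, so the extra seconds on the CPU are never felt.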

When you model the true Total Cost of Ownership (TCO)—factoring in the six-figure acquisition cost of a GPU node versus the sunk cost of your existing CPU infrastructure, plus the ongoing OpEx of power and cooling—the “CPU-First” strategy is often the only financially viable choice for these edge workloads.
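A minimal TCO sketch, assuming straight-line energy cost with a PUE (power usage effectiveness) multiplier standing in for cooling. The $30,000 and 300W figures come from the comparison above; the electricity price, PUE, three-year horizon, 24x7 duty cycle, and the 100W incremental CPU draw under load are hypothetical placeholders to replace with your own measurements. It prices only the card, not a full GPU node, so it understates the GPU side.

```python
from dataclasses import dataclass

HOURS_PER_YEAR = 24 * 365


@dataclass
class InferenceOption:
    name: str
    capex_usd: float    # new hardware spend; 0 for servers you already own (sunk cost)
    added_watts: float  # incremental power draw attributable to the inference workload


def tco_usd(opt: InferenceOption, years: float, usd_per_kwh: float, pue: float) -> float:
    """Capex plus energy cost; PUE scales IT power up to include cooling and facility overhead."""
    energy_kwh = opt.added_watts / 1000 * HOURS_PER_YEAR * years * pue
    return opt.capex_usd + energy_kwh * usd_per_kwh


# Hypothetical inputs: $0.12/kWh, PUE 1.5, 3 years of 24x7 operation.
gpu = InferenceOption("GPU card", capex_usd=30_000, added_watts=300)
cpu = InferenceOption("Existing CPU (AMX)", capex_usd=0, added_watts=100)
for opt in (gpu, cpu):
    print(f"{opt.name}: ${tco_usd(opt, years=3, usd_per_kwh=0.12, pue=1.5):,.2f}")
```

Even with a non-zero power allowance for the CPUs, the acquisition cost dominates; swapping in a full GPU node price only widens the gap.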

Quantization: The Enabler for Llama 3 on CPU

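You cannot run these models efficiently on a CPU in 16-bit precision (FP16): generating each token means streaming essentially all of the weights from memory, so a 16GB model saturates memory bandwidth long before it saturates the cores. The secret that makes CPU inference for SLMs viable at enterprise scale is quantization.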

Converting the model weights from 16-bit floating point to 8-bit integers (INT8) halves the model size; going to 4-bit integers (INT4) reduces it by roughly 75%.

- Llama 3 8B (FP16): ~16GB of memory required (VRAM on a GPU, RAM on a CPU).

- Llama 3 8B (INT4): ~5GB RAM required.
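These sizes follow from parameters × bits per weight. A minimal sketch using a nominal 8 billion parameters (the released model is slightly larger). The ~5GB Q4_K_M figure sits above a pure 4-bit estimate because GGUF k-quants store per-block scales and keep some tensors at higher precision; the 4.9 bits-per-weight average below is an assumed approximation.

```python
def weight_gb(params: float, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (1 GB = 1e9 bytes).

    Excludes KV cache, activations, and runtime overhead.
    """
    return params * bits_per_weight / 8 / 1e9


LLAMA3_8B_PARAMS = 8e9  # nominal parameter count

fp16_gb = weight_gb(LLAMA3_8B_PARAMS, 16)
for label, bits in [("FP16", 16), ("INT8", 8), ("INT4", 4), ("Q4_K_M (assumed ~4.9 bpw avg)", 4.9)]:
    size = weight_gb(LLAMA3_8B_PARAMS, bits)
    saving = 1 - size / fp16_gb
    print(f"{label}: {size:.1f} GB ({saving:.0%} smaller than FP16)")
```

INT8 comes out at half the FP16 footprint and INT4 at a quarter, which is where the 75% reduction comes from.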

Cisco UCS M7 nodes typically ship with 512GB to 2TB of DDR5 RAM. A 5GB model is a rounding error in your memory footprint. You can run dozens of these agents side-by-side on the same hardware that runs your ESXi cluster.
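Capacity is only half the picture: during token generation a runtime reads essentially every weight from memory once per token, so memory bandwidth sets a ceiling on decode speed, and separate instances on the same socket share that ceiling. A minimal sketch, assuming one socket populated with 8 channels of DDR5-4800 (5th Gen Xeon supports faster DDR5-5600) and treating theoretical peak bandwidth as an upper bound; the 0.6 efficiency factor is a hypothetical placeholder for sustained bandwidth.

```python
def ddr5_peak_gbs(channels: int, mega_transfers: int) -> float:
    """Theoretical peak bandwidth in GB/s; each DDR5 channel moves 8 bytes per transfer."""
    return channels * mega_transfers * 8 / 1000


def decode_ceiling_tps(bandwidth_gbs: float, model_gb: float, efficiency: float = 1.0) -> float:
    """Upper bound on aggregate decode tokens/sec if each token streams all weights once.

    Ignores KV-cache reads and batching (a batch shares one weight read across requests).
    """
    return bandwidth_gbs * efficiency / model_gb


peak = ddr5_peak_gbs(channels=8, mega_transfers=4800)
for label, size_gb in [("FP16", 16.0), ("INT4 Q4_K_M", 5.0)]:
    ceiling = decode_ceiling_tps(peak, size_gb, efficiency=0.6)
    print(f"{label}: <= {ceiling:.0f} tokens/sec per socket, shared by all instances on it")
```

This is why FP16 chokes on CPU while INT4 lands in a usable range, and why many co-located agents generating at the same moment each see a fraction of the single-stream speed.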

This aligns perfectly with the “Lean Core” concept we discussed in our VCF Operations Guide—using available resources rather than buying new bloat.

The Architectural Blueprint: Running Llama 3 on Cisco UCS M7

If you want to pilot this today without buying a single GPU, here is the reference architecture:

- Hardware: Cisco UCS X210c M7 Compute Node.

- CPU: Intel Xeon Gold or Platinum (4th/5th Gen) with AMX enabled in BIOS.

- Software: Use Ollama or vLLM as the inference engine. These runtimes can detect Intel AMX at runtime and use it for the matrix math, provided their CPU backend was built with AMX support.

- Model: Meta-Llama-3-8B-Instruct.Q4_K_M.gguf (the “Q4” denotes 4-bit quantization).
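Before committing to the pilot, it helps to measure tokens per second on your own node. A minimal sketch, assuming Ollama is running locally on its default port (11434) and a Llama 3 8B model has already been pulled or imported; the model tag `llama3:8b` is an assumption, so substitute whatever `ollama list` shows. It calls Ollama’s `/api/generate` endpoint with streaming disabled, whose response reports `eval_count` (generated tokens) and `eval_duration` (nanoseconds). The `benchmark` helper and its prompt are hypothetical.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint


def tokens_per_second(resp: dict) -> float:
    """Decode speed from an Ollama response: eval_count tokens over eval_duration nanoseconds."""
    return resp["eval_count"] * 1e9 / resp["eval_duration"]


def query_ollama(model: str, prompt: str, url: str = OLLAMA_URL, timeout: float = 300) -> dict:
    """Send one non-streaming generate request and return the parsed JSON response."""
    payload = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    req = urllib.request.Request(url, data=payload, headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def benchmark(model: str = "llama3:8b", runs: int = 3) -> list:
    """Repeat a fixed prompt and collect one decode tokens/sec figure per run."""
    prompt = "Summarize the purpose of an HR leave policy in three sentences."
    return [tokens_per_second(query_ollama(model, prompt)) for _ in range(runs)]
```

Calling `benchmark()` on the UCS node returns one tokens/sec figure per run; compare them with the 20–40 tokens per second range quoted earlier. Because `eval_duration` covers only token generation, model load time does not skew the figure.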

Running multiple quantized SLM agents on a shared UCS cluster means storage throughput can become the next bottleneck. Before you scale your CPU inference deployment, validate that your Ceph storage layer can handle the model-loading and retrieval patterns your RAG workload demands.

Conclusion: Rightsizing the AI Wave

The goal of the Enterprise Architect is not to chase benchmarks; it is to solve business problems at the lowest acceptable TCO.

For 70B-parameter models, buy the GPU. But for the wave of SLMs that will power your internal tools, agents, and edge analytics, CPU inference is not just capable; it is the financially superior choice.

Don’t let the vendor hype cycle force you into a hardware refresh you don’t need. Check your existing inventory. You might already own your AI inference farm.

Q: What Intel CPU generation is required to use AMX for Llama 3 inference?

A: Intel AMX requires 4th Generation Xeon Scalable processors (Sapphire Rapids) or newer. 5th Generation (Emerald Rapids) uses the same AMX instructions but adds more cores, a larger L3 cache, and faster DDR5, which improves overall inference throughput. 3rd Generation Xeon (Ice Lake) and earlier do not support AMX; those systems rely on AVX-512 for matrix operations, which delivers significantly lower inference performance. Check your UCS blade spec sheet for the processor generation before planning a CPU inference deployment.

Q: Which quantization level is recommended for Llama 3 8B on Cisco UCS M7?

A: INT4 quantization (Q4_K_M in GGUF format) is the practical sweet spot for enterprise CPU inference. It reduces the model from ~16GB to ~5GB, fits comfortably in DDR5 memory, and delivers 30-40 tokens per second on AMX-enabled Xeon processors. INT8 delivers slightly better output quality at roughly double the memory footprint — viable on UCS M7 nodes configured with 256GB+ RAM. FP16 is not recommended for CPU inference due to memory bandwidth constraints.

Q: Can this architecture run multiple SLM agents simultaneously on the same UCS node?

A: Yes. This is one of the primary architectural advantages of the CPU inference approach. A UCS X210c M7 node with 512GB DDR5 can run 10+ simultaneous INT4-quantized 8B-parameter model instances. Each instance consumes approximately 5GB of RAM plus overhead. The practical limit is memory capacity and CPU core allocation per instance, not GPU VRAM contention. This makes horizontal scaling of internal AI agents significantly cheaper than GPU-based approaches.
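The “plus overhead” per instance is mostly the KV cache, which grows with context length. A minimal sketch, assuming Llama 3 8B’s attention shape (32 layers, 8 key/value heads, head dimension 128) and an FP16 cache; the 8,192-token context, the 64GiB reserved for the hypervisor and other workloads, ~4.6GiB of Q4_K_M weights, and 0.5GiB of runtime overhead are hypothetical planning inputs.

```python
def kv_cache_gib(context_tokens: int, layers: int = 32, kv_heads: int = 8,
                 head_dim: int = 128, bytes_per_value: int = 2) -> float:
    """KV-cache size for one sequence: keys and values for every layer and token."""
    bytes_per_token = 2 * layers * kv_heads * head_dim * bytes_per_value  # 2 = K and V
    return bytes_per_token * context_tokens / 2**30


def instances_that_fit(node_gib: float, reserved_gib: float, weights_gib: float,
                       kv_gib: float, runtime_overhead_gib: float = 0.5) -> int:
    """How many independent model instances fit in the memory left after reservations."""
    per_instance = weights_gib + kv_gib + runtime_overhead_gib
    return int((node_gib - reserved_gib) // per_instance)


kv = kv_cache_gib(8192)
print(f"KV cache per instance at 8K context: {kv:.2f} GiB")
print("Instances that fit:", instances_that_fit(512, 64, 4.6, kv))
```

On these assumptions memory capacity comfortably exceeds the 10+ figure, so CPU core allocation per instance becomes the practical limit well before RAM runs out.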

Q: Does Ollama automatically use Intel AMX or does it require manual configuration?

A: Ollama detects and uses Intel AMX automatically on supported processors when running on Linux with a kernel version of 5.16 or later, the first kernel release with AMX support. No manual configuration is required. On Windows Server, AMX support requires enabling the feature in BIOS and verifying the OS supports the instruction set. Use llama.cpp's built-in benchmark tool (llama-bench) or its system-info output to confirm AMX is visible to the runtime; look for AMX entries (such as AMX_INT8) alongside AVX512_BF16 in the CPU feature flags.
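On Linux you can also check the host directly. A minimal sketch, assuming the kernel exposes AMX through the `amx_tile`, `amx_bf16`, and `amx_int8` flags in /proc/cpuinfo and using the 5.16 kernel threshold stated above; inside a VM, the flags only appear if the hypervisor exposes AMX to the guest. It confirms the CPU and kernel side only, not that a particular runtime build uses AMX. The helper names are hypothetical.

```python
import platform
import re
from pathlib import Path

AMX_FLAGS = ("amx_tile", "amx_bf16", "amx_int8")


def amx_flags(cpuinfo_text: str) -> dict:
    """Report which AMX feature flags appear on the first 'flags' line of /proc/cpuinfo."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            present = set(line.split(":", 1)[1].split())
            return {flag: flag in present for flag in AMX_FLAGS}
    return {flag: False for flag in AMX_FLAGS}


def kernel_at_least(release: str, major: int = 5, minor: int = 16) -> bool:
    """Compare a release string such as '6.8.0-45-generic' against major.minor."""
    match = re.match(r"(\d+)\.(\d+)", release)
    if not match:
        return False
    return (int(match.group(1)), int(match.group(2))) >= (major, minor)


def check_host() -> dict:
    """Combine the CPU flag check and the kernel version check for the local Linux host."""
    cpuinfo = Path("/proc/cpuinfo")
    text = cpuinfo.read_text() if cpuinfo.exists() else ""
    return {"flags": amx_flags(text), "kernel_ok": kernel_at_least(platform.release())}
```

Run `print(check_host())` on the node: all three flags and `kernel_ok` should be True before you expect AMX acceleration from any runtime.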


Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.
