Cloud Egress Costs Explained: Why Your Architecture Is Paying a Tax You Never Modeled

You modeled compute. You modeled storage. You built cost estimates, ran capacity planning, and got sign-off on the architecture before a single resource was provisioned.

You did not model what it costs to move data.

Cloud egress is the tax that accumulates invisibly — not from a single expensive operation, but from thousands of small data movement events your architecture was never designed to account for. It shows up as a line item in the monthly bill that nobody owns, that nobody predicted, and that grows consistently as the system scales. The teams that get surprised by it are not the ones who made poor architectural decisions. They are the ones who made good architectural decisions without egress as a design constraint.

This guide covers what cloud egress costs actually are, where they come from, the architectural patterns that multiply them silently, and how to model them before the invoice arrives rather than after it does.

What Cloud Egress Actually Is

Egress is data leaving a cloud environment. Every time your system moves data — from a server to a user, from one region to another, from one availability zone to another — there is a potential cost event attached to it. The direction matters: inbound data transfer (ingress) is almost always free. Outbound data transfer (egress) is almost always metered.

There are three distinct egress categories, and most architecture reviews only account for one of them.

Internet egress is the most visible. Data leaving the cloud provider entirely — to a user’s browser, a mobile client, an external API, a partner system — is metered at published rates. This is the egress line item that appears in every cloud cost guide. It is also, for many architectures, not the largest egress cost.

Cross-region egress is data moving between two regions within the same cloud provider. A service in us-east-1 calling a service in eu-west-1. A replication job copying data from one region to another for disaster recovery. Each inter-region data transfer is a metered event, and for architectures with active multi-region deployments, this cost compounds quickly.

Cross-zone egress is the one most teams miss entirely until they see the bill. Availability zones within the same region are not free to communicate. AWS charges $0.01/GB in each direction for cross-AZ data transfer. In a microservice architecture where services are spread across multiple AZs for high availability — as they should be — every inter-service call that crosses an AZ boundary is a billable event. At high request volumes, this accumulates into a significant monthly cost that no single service team is responsible for and no single team monitors.

| Provider | Internet Egress (first 10TB) | Cross-Region | Cross-Zone | Free Tier |
|---|---|---|---|---|
| AWS | $0.09/GB | $0.02/GB | $0.01/GB each direction | 100GB/month |
| GCP (Premium Tier) | $0.08/GB | $0.01–0.08/GB | $0.01/GB | 1GB/month |
| GCP (Standard Tier) | $0.085/GB | $0.01–0.08/GB | $0.01/GB | 1GB/month |
| Azure | $0.087/GB | $0.02/GB | $0.01/GB | 5GB/month |

Note: Rates vary by region, volume tier, and service. Cross-region rates vary significantly by region pair. These are representative rates for reference — check current provider pricing pages before budgeting.
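To make the three categories concrete, here is a minimal sketch of a monthly estimate. It assumes the representative AWS rates from the table; the `MonthlyTraffic` type, the `estimate_monthly_egress` function, and the traffic volumes are all hypothetical and only illustrate how the categories add up.

```python
from dataclasses import dataclass

# Representative per-GB rates from the AWS row of the table (illustrative only;
# real rates vary by region pair, volume tier, and service).
INTERNET_RATE = 0.09       # USD per GB, first 10 TB tier
CROSS_REGION_RATE = 0.02   # USD per GB
CROSS_ZONE_RATE = 0.01     # USD per GB, charged in each direction
FREE_INTERNET_GB = 100     # monthly free internet egress allowance


@dataclass
class MonthlyTraffic:
    """Hypothetical monthly traffic volumes, in GB."""
    internet_gb: float
    cross_region_gb: float
    cross_zone_gb: float


def estimate_monthly_egress(traffic: MonthlyTraffic) -> dict[str, float]:
    """Return an estimated USD cost per egress category plus a total."""
    billable_internet = max(traffic.internet_gb - FREE_INTERNET_GB, 0)
    costs = {
        "internet": billable_internet * INTERNET_RATE,
        "cross_region": traffic.cross_region_gb * CROSS_REGION_RATE,
        # Cross-zone traffic is billed on both the sending and receiving side.
        "cross_zone": traffic.cross_zone_gb * CROSS_ZONE_RATE * 2,
    }
    costs["total"] = sum(costs.values())
    return costs


# Assumed workload: modest internet traffic, heavy internal chatter.
estimate = estimate_monthly_egress(
    MonthlyTraffic(internet_gb=2_000, cross_region_gb=5_000, cross_zone_gb=40_000)
)
for category, usd in estimate.items():
    print(f"{category:>12}: ${usd:,.2f}")
```

With these assumed volumes the cross-zone line is the largest of the three, even though it has the lowest per-GB rate.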

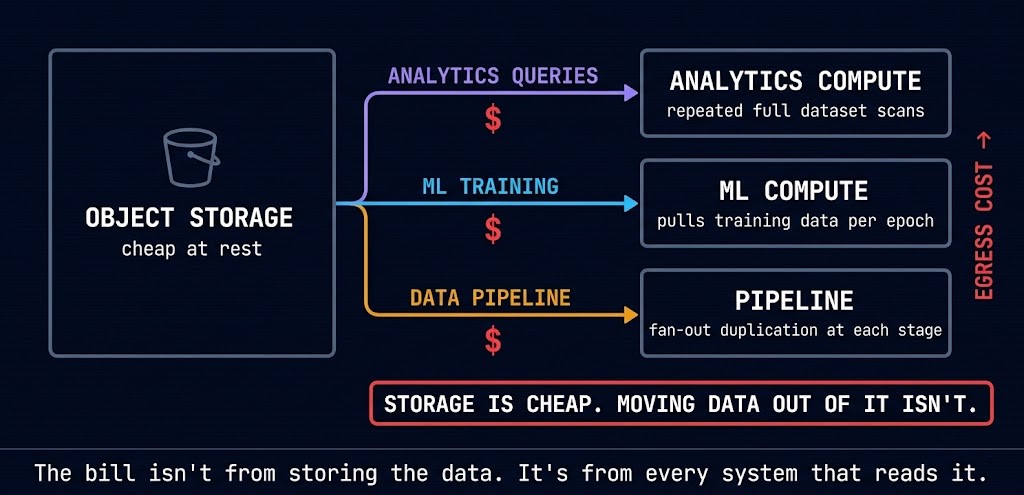

Storage Is Cheap. Moving Data Out of It Isn’t.

Object storage is one of the cheapest resources in the cloud. S3, GCS, and Azure Blob Storage charge fractions of a cent per GB per month for standard storage. The pricing makes data retention economically trivial — keeping historical data indefinitely is cheaper than the engineering cost of managing deletion policies.

The cost is not in storing the data. It is in every system that reads it.

Analytics queries that scan large datasets pull gigabytes of data from object storage to compute on every execution. An ML training pipeline that reads training data from S3 into a GPU instance for each training run generates egress from storage to compute on every epoch. A data pipeline that copies data between storage tiers — landing zone to processed bucket to archive — duplicates the data movement at each stage rather than querying in place. None of these feel like egress events in the conventional sense. They do not look like “data leaving the cloud.” They are data moving within the cloud, between services, across the boundaries that cloud providers meter.

The architectural response is not to stop running analytics or ML workloads. It is to design data locality into the architecture: collocate compute with storage in the same region and AZ, query data in place with serverless analytics engines (BigQuery, Athena, Redshift Spectrum) rather than pulling it to dedicated compute, and use caching layers to prevent repeated reads of the same data across multiple pipeline stages. The AI inference cost architecture series covers data gravity — the principle that compute should move to data rather than data moving to compute — in the context of inference pipelines where this cost is now the largest line item for many AI workloads.

Analytics, ML, and data pipeline architectures quietly generate the largest object storage egress bills — not from storing data, but from every system that reads it across a metered boundary. The cost is in the reads, not the retention.

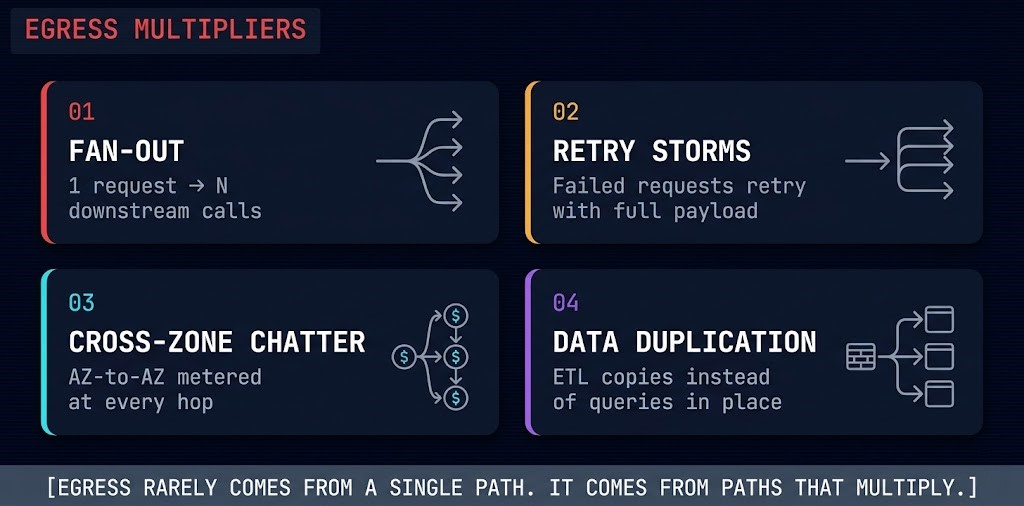

Egress Multipliers

Most egress cost analyses focus on individual data transfer events. The real problem is architectural patterns that multiply egress — where a single user action, pipeline trigger, or retry event generates orders of magnitude more data movement than the operation itself warrants. Understanding the multipliers is more valuable than optimizing individual transfer rates.

Fan-out patterns. A single inbound request triggers N downstream service calls, each of which pulls data from storage, calls an external API, or crosses a zone boundary. With N = 10, one user action becomes ten egress events and ten concurrent users become a hundred. Fan-out architectures are correct designs for scalability; they become egress problems when the fan-out multiplier is never modeled against data transfer costs. The cascading failure risk in multi-cloud architectures follows the same fan-out pattern: one trigger, many downstream cost events.
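The fan-out multiplier is simple arithmetic, which is exactly why it is easy to skip. A rough sketch, assuming every downstream call moves a payload of the same size (the request count, payload size, and function name are hypothetical):

```python
def fan_out_egress_gb(requests: int, fan_out: int, payload_kb: float) -> float:
    """GB moved per month when each request triggers `fan_out` downstream transfers."""
    total_kb = requests * fan_out * payload_kb
    return total_kb / 1_000_000  # decimal units: 1 GB = 1,000,000 KB


# Assumed workload: 50 million requests per month, 64 KB per downstream call.
for n in (1, 10, 25):
    gb = fan_out_egress_gb(requests=50_000_000, fan_out=n, payload_kb=64)
    print(f"fan-out {n:>2}: {gb:,.0f} GB/month")
```

The request count never changes in this loop; only the multiplier does, and the data volume scales linearly with it.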

Retry storms. A service encounters a transient failure and retries with the full request payload. At scale, retry storms generate egress volume that can exceed the original traffic several times over, because the same data is transferred repeatedly without successful delivery. Retry logic without exponential backoff, jitter, or payload size awareness turns a brief service degradation into a sustained egress event. For agentic AI systems without execution budgets, retry storms are one of the primary uncontrolled cost drivers, and the same principle applies to egress at the infrastructure layer.
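One common way to bound retry traffic is capped exponential backoff with full jitter plus a hard attempt limit. The sketch below is a generic illustration, not any particular library's retry API; `call_with_backoff`, `backoff_delays`, and the default values are assumptions chosen for the example.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delays(max_attempts: int, base: float = 0.1, cap: float = 10.0) -> list[float]:
    """Upper bound on the wait before each retry: base * 2^n, capped at `cap`."""
    return [min(cap, base * 2 ** n) for n in range(max_attempts - 1)]


def call_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    base: float = 0.1,
    cap: float = 10.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Retry `operation` on ConnectionError with capped backoff and full jitter."""
    delays = backoff_delays(max_attempts, base, cap)
    for attempt in range(max_attempts):
        try:
            return operation()
        except ConnectionError:
            if attempt == max_attempts - 1:
                raise  # retry budget exhausted: stop resending the payload
            # Full jitter: wait a random time between 0 and the current bound
            # so that many clients do not retry in lockstep.
            sleep(random.uniform(0, delays[attempt]))
    raise RuntimeError("max_attempts must be at least 1")
```

The attempt limit caps the worst-case multiplier: with `max_attempts=5`, a failing request can move its payload at most five times, however long the outage lasts.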

Cross-zone chatter. Microservice architectures distributed across availability zones for resilience generate inter-AZ traffic on every service-to-service call that crosses a zone boundary. A request chain that traverses five services across three AZs generates up to five cross-zone transfer events, each metered at $0.01/GB in each direction on AWS. At high request volumes with large payloads, the cross-zone chatter from a well-designed resilient architecture becomes a meaningful cost line. Zone-aware routing, which sends requests to service instances in the same AZ where possible, reduces this without sacrificing the availability architecture.
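The core of zone-aware routing is a preference, not a hard rule: use a same-zone instance when one exists, otherwise fall back to any healthy instance. A minimal client-side sketch, assuming the caller already knows its own zone and has a list of healthy instances (the `Instance` type and `pick_instance` function are hypothetical):

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    """A hypothetical service instance and the zone it runs in."""
    address: str
    zone: str


def pick_instance(instances: list[Instance], caller_zone: str) -> Instance:
    """Prefer an instance in the caller's zone; fall back to any zone."""
    if not instances:
        raise ValueError("no healthy instances available")
    local = [i for i in instances if i.zone == caller_zone]
    # Falling back to a remote zone preserves availability: a cross-zone
    # call is metered, but a failed call is worse.
    return random.choice(local or instances)


pool = [
    Instance("10.0.1.5", "us-east-1a"),
    Instance("10.0.2.7", "us-east-1b"),
    Instance("10.0.2.9", "us-east-1b"),
]
print(pick_instance(pool, "us-east-1b").zone)  # always a us-east-1b instance
```

In practice this logic usually lives in a service mesh or load balancer rather than application code, but the decision it makes is the same.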

Data duplication. ETL and ELT pipelines that copy data between storage tiers (raw to processed, processed to curated, curated to archive) generate egress at every stage rather than transforming in place. A pipeline that copies 1TB through four stages transfers 4TB, not 1TB. The architectural alternative is transformation in place using serverless query engines that scan data where it lives rather than moving it to dedicated compute for processing. For AI data fabric architectures, data duplication across pipeline stages is one of the primary hidden cost drivers as training dataset sizes grow.

Egress rarely comes from a single path. It comes from paths that multiply.

Where the Hidden Costs Live by Provider

Each cloud provider structures egress costs differently, and the differences have architectural implications beyond the per-GB rate.

AWS charges $0.01/GB in each direction for cross-AZ traffic within the same region — a cost that is easy to miss because it appears as a line item shared across dozens of services rather than attributable to a single architecture decision. For microservice architectures with high inter-service call volumes across AZs, this compounds into significant monthly spend. AWS also charges for data transfer between services within the same region in some configurations — S3 to EC2 in the same region is free, but EC2 to EC2 across AZs is not.

GCP’s global VPC model eliminates many of the cross-zone cost traps that AWS architectures encounter. A single VPC spans all regions, and intra-region traffic between zones is cheaper than the AWS equivalent. The more significant GCP egress decision is Premium Tier versus Standard Tier for internet egress: Premium Tier keeps traffic on Google’s private backbone for as much of the path as possible, while Standard Tier hands it off to the public internet close to the source region. Choosing Standard Tier to reduce costs gives up the core network advantage GCP’s architecture is built on. See the full <a href="https://www.rack2cloud.com/cloud-hybrid-strategy-google-cloud-platform/" class="r2c-link">GCP architecture guide</a> for how the network model affects egress economics.

Azure follows a similar cross-zone model to AWS, with inter-AZ transfer metered within a region. Azure’s ExpressRoute provides private connectivity with different egress economics than public internet transfer — for enterprise hybrid architectures with high on-premises-to-cloud data movement, the ExpressRoute cost model often justifies the circuit cost at sufficient volume.

The provider comparison matters less than the architectural principle: wherever data moves across a billing boundary — zone, region, or provider — that movement has a cost, and that cost multiplies with request volume.

AI and Inference Egress: The New Problem

Inference pipelines have introduced an egress cost category that traditional architecture cost models were never designed to capture. An inference request that pulls retrieval context from object storage, queries a vector database in a different zone, calls an embedding model in a separate service, and returns a response to a user has generated egress events at every step — most of which never appeared in the original cost estimate.

AI inference cost is the new egress. The principle established in cloud architecture for data movement — that cost emerges from behavior, not provisioning — applies directly to inference pipelines. A model that runs cheaply on a per-token basis can generate significant egress costs when the retrieval context, feature data, and model artifacts are distributed across zones or regions without data locality design.

The architectural response is data gravity: run inference where the data lives. A GPU instance in the same AZ as the vector database it queries, the object storage it reads from, and the feature store it accesses eliminates the cross-zone egress events that accumulate invisibly in architectures where compute and data were placed independently. For <a href="https://www.rack2cloud.com/infiniband-vs-rocev2-ai-fabric/" class="r2c-link">high-throughput AI fabric architectures</a>, the fabric choice and data placement strategy directly determine the egress cost profile of the inference workload. This is not a FinOps concern. It is an architecture constraint that belongs in the design review, not the post-launch cost analysis.
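A per-request hop model shows what colocation removes. The sketch below assumes the representative AWS cross-zone rate of $0.01/GB in each direction; the hop names follow the pipeline described above, while the payload sizes, request volume, and the `Hop` type are hypothetical.

```python
from dataclasses import dataclass

CROSS_ZONE_RATE = 0.01  # USD per GB per direction (representative AWS rate)


@dataclass(frozen=True)
class Hop:
    """One data movement in a hypothetical inference request path."""
    name: str
    kb_per_request: float
    crosses_zone: bool


def monthly_cross_zone_cost(hops: list[Hop], requests_per_month: int) -> float:
    """USD per month for the hops that cross a zone boundary."""
    kb = sum(h.kb_per_request for h in hops if h.crosses_zone)
    gb = kb * requests_per_month / 1_000_000  # 1 GB = 1,000,000 KB
    return gb * CROSS_ZONE_RATE * 2  # billed in each direction


# Assumed placement: compute and data were placed independently.
scattered = [
    Hop("retrieval context from object storage", 2_000, crosses_zone=False),
    Hop("vector database query", 500, crosses_zone=True),
    Hop("embedding service call", 100, crosses_zone=True),
    Hop("feature store lookup", 400, crosses_zone=True),
]
# Same hops with everything placed in the GPU instance's zone.
colocated = [Hop(h.name, h.kb_per_request, crosses_zone=False) for h in scattered]

requests = 100_000_000
print(f"scattered: ${monthly_cross_zone_cost(scattered, requests):,.2f}/month")
print(f"colocated: ${monthly_cross_zone_cost(colocated, requests):,.2f}/month")
```

The model and the request volume are identical in both cases; only the placement changes.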

How to Reduce Cloud Egress Costs

Egress cost reduction is not a FinOps exercise. It is an architecture exercise. The levers that actually move the number are design decisions, not billing dashboard adjustments.

Collocate compute and data. Place compute resources in the same region and availability zone as the data they consume. This is the single highest-leverage decision for reducing cross-zone and cross-region egress. In Kubernetes, topology spread constraints and affinity rules control which zones pods are scheduled into, and topology-aware routing keeps requests on service instances in the same AZ; together they reduce cross-zone chatter without changing the service architecture. See how <a href="https://www.rack2cloud.com/cloud-native-kubernetes-cluster-orchestration/" class="r2c-link">Kubernetes cluster orchestration</a> handles topology-aware scheduling for locality-sensitive workloads.

Query in place. Use serverless analytics engines — BigQuery, Athena, Redshift Spectrum — to run queries against data where it lives in object storage rather than pulling data to dedicated compute. A BigQuery query that scans 50GB of a petabyte dataset transfers nothing out of storage. The equivalent operation on a dedicated analytics cluster pulling data to compute transfers 50GB every time it runs.

Cache aggressively. CDN caching for user-facing content eliminates internet egress for repeated requests. In-memory caching (Redis, Memcached) for inter-service data reduces cross-zone calls for frequently accessed data. Read-through caches at the service layer reduce object storage reads for hot data. Every cache hit is an egress event that did not happen.
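The read-through pattern can be sketched in a few lines. This is a deliberately minimal in-process version with no eviction or TTL, which a production cache would need; the `ReadThroughCache` class and its `fetch` loader are hypothetical stand-ins for something like an object storage GET.

```python
from typing import Callable


class ReadThroughCache:
    """Minimal in-process read-through cache that tracks bytes not refetched."""

    def __init__(self, fetch: Callable[[str], bytes]) -> None:
        self._fetch = fetch        # hypothetical loader, e.g. an object storage GET
        self._store: dict[str, bytes] = {}
        self.bytes_fetched = 0     # bytes that crossed the metered boundary
        self.bytes_saved = 0       # bytes served from the cache instead

    def get(self, key: str) -> bytes:
        if key in self._store:
            value = self._store[key]
            self.bytes_saved += len(value)
            return value
        # Cache miss: fetch once, remember the result for later readers.
        value = self._fetch(key)
        self.bytes_fetched += len(value)
        self._store[key] = value
        return value


# Assumed hot object: a 1 KiB blob read five times by different pipeline stages.
cache = ReadThroughCache(lambda key: b"x" * 1024)
for _ in range(5):
    cache.get("report.parquet")
print(cache.bytes_fetched, cache.bytes_saved)
```

Tracking `bytes_saved` alongside the hit rate gives a number that maps directly onto the egress line of the bill.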

Compress before transfer. Compression reduces payload size before data crosses a billing boundary. For large transfers of compressible data such as text, JSON, or CSV, high-ratio compression (zstd, Brotli) can reduce egress volume by 60–80% with modest compute overhead; formats that are already compressed, such as Parquet, JPEG, or video, gain little. For inter-service calls with structured payloads, binary serialization formats (Protocol Buffers, Avro) can reduce payload size versus JSON by 3–10x, depending on the schema. The compute cost of compression is almost always less than the egress cost of uncompressed transfer at volume.
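The saving is easy to measure on a sample payload before committing to a format. The sketch below uses `zlib` from the Python standard library as a stand-in for zstd or Brotli, which typically do at least as well on this kind of data; the record layout is a hypothetical example of a repetitive structured payload.

```python
import json
import zlib

# Hypothetical structured payload: repetitive JSON records, which compress well.
records = [
    {"user_id": i, "region": "us-east-1", "status": "active", "plan": "standard"}
    for i in range(10_000)
]
raw = json.dumps(records).encode("utf-8")
compressed = zlib.compress(raw, level=6)

saved = 1 - len(compressed) / len(raw)
print(f"raw: {len(raw):,} bytes, compressed: {len(compressed):,} bytes, saved: {saved:.0%}")

# Round-trip check: the receiver recovers the exact payload.
restored = zlib.decompress(compressed)
print("round trip ok:", restored == raw)
```

Running the same measurement on a sample of real traffic is a more reliable guide than any published ratio, since the result depends heavily on how repetitive the data is.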

Audit the multipliers. Before optimizing individual transfer rates, identify which architectural patterns are generating the highest egress volume. Fan-out patterns, retry storms, and cross-zone chatter are more valuable to fix than negotiating a lower per-GB rate. The <a href="https://www.rack2cloud.com/cloud-cost-increases-2026-analysis/" class="r2c-link">2026 cloud cost analysis</a> documents the specific egress patterns driving the largest unplanned spend increases across enterprise cloud environments.

Architect’s Verdict

Egress is not a billing problem. It is an architecture problem that surfaces as a billing problem after the system is in production and the design decisions that generated it are too expensive to reverse.

The teams that control egress costs are not the ones running tighter FinOps reviews or negotiating better committed use discounts. They are the ones who modeled data movement as a first-class architectural constraint at design time — who asked “what does this data transfer cost at 10x volume?” before the architecture was approved, not after the first invoice arrived.

The patterns that generate the largest egress bills are not misconfigurations. They are correct architectural decisions — high availability across AZs, fan-out for scalability, retry logic for resilience — made without egress as a design input. The fix is not to undo those decisions. It is to add egress modeling to the architectural review process so that the cost of each pattern is visible before it is committed to.

Model it like compute. Model it like storage. It is the same tax, arriving from a direction you didn’t expect.

Egress is one cost constraint in a broader cloud architecture decision framework. The Cloud Architecture Strategy hub covers platform selection, cost governance, and the hybrid architecture patterns that determine where your workloads belong.
