Sub-500ms LLM Inference on AWS Lambda: The GenAI Architecture Guide

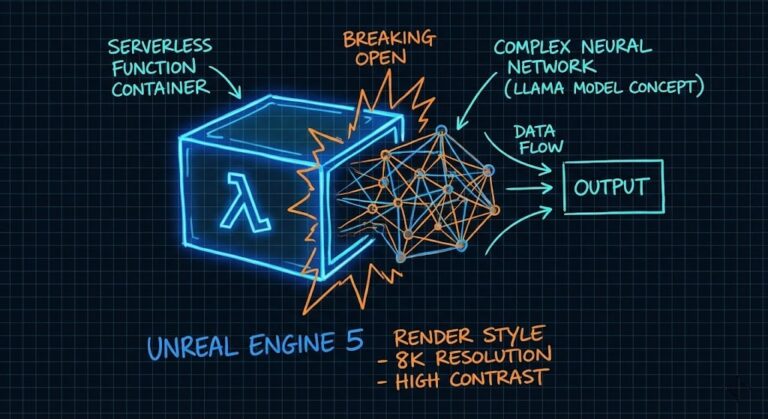

When I posted my Llama 3.2 benchmarks on r/AWS a few days ago, the reaction was a mix of excitement and outright disbelief. “It feels broken,” one engineer commented, referencing their own 12-second spin-up times for similar workloads. Another asked if I was violating physics. I understand the skepticism. For years, the industry standard for “Serverless…