STORAGE ARCHITECTURE PATH

ENTERPRISE STORAGE, SDS, AND RESILIENT FABRICS.

Why Storage Architecture Matters

Compute is the brain of your infrastructure, but storage is its permanent memory.

When you treat storage as a static “box,” you inevitably design a bottleneck. Poorly architected storage leads to latency spikes, I/O queuing, and unpredictable application behavior under stress. Without a working understanding of storage physics, your infrastructure tends toward operational waste (over-provisioning) and exposure to silent data corruption.

This learning path cuts through the vendor feature lists. You will learn how to build data fabrics that deliver deterministic throughput — whether you are architecting hyperconverged storage, disaggregated NVMe-oF, or distributed cloud fabrics.

No marketing IOPS.

No “infinite capacity” myths.

Just the raw physics of data persistence.

Who This Path Is Designed For

To master the data layer, you must transition from “Disk Administrator” to “Storage Architect.”

- Storage & Infrastructure Engineers: Responsible for SAN/NAS fabrics, hyperconverged storage orchestration, and hardware-level troubleshooting.

- Platform & SRE Engineers: Designing high-availability storage clusters and cloud-integrated storage that must survive site-level failures.

- Architects & Consultants: Senior engineers who must analyze the trade-offs between performance (IOPS), cost ($/GB), and resiliency across heterogeneous platforms.

Note: Understanding CPU scheduling and hypervisor fundamentals (covered in our Compute and Virtualization paths) is highly recommended to fully grasp how “Data Locality” impacts storage performance.

The 4 Phases of Storage Architecture Mastery

Phase 1: Storage Topology & Media Physics

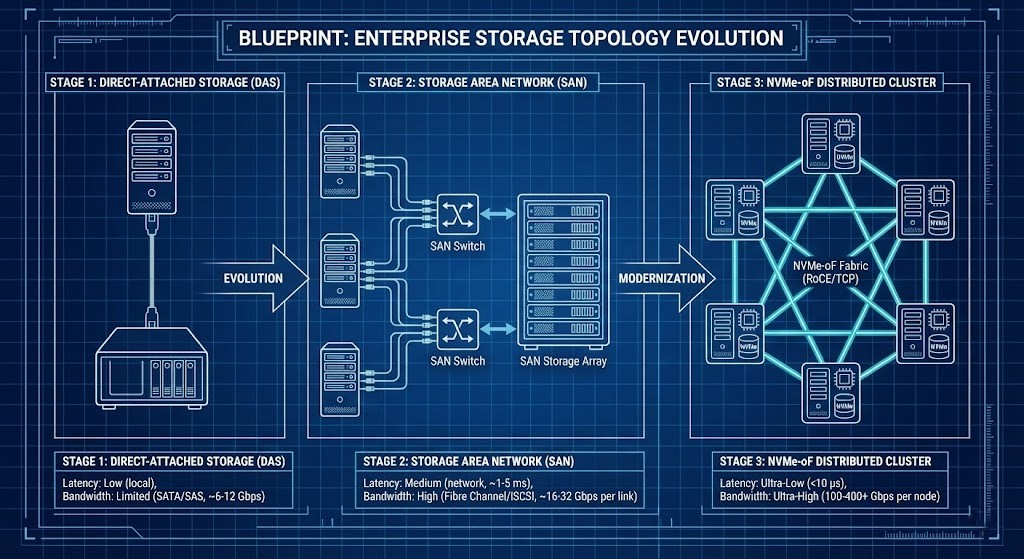

Determinism begins at the media layer. Before you abstract the data, you must understand the physical constraints of the media and the wire. The industry is moving rapidly from traditional monolithic arrays to low-latency NVMe fabrics.

Architects must understand:

- The evolution of SAN vs. NAS vs. DAS.

- The physical limitations of SAS/SATA vs. NVMe and NVMe-oF fabrics.

- Persistent memory (PMEM) tiers.

- Flash write endurance and garbage collection penalties.

- Queue depth and media latency curves.
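The relationship between queue depth, latency, and throughput in the list above can be sketched with Little's Law (concurrency = throughput × latency). The snippet below is a minimal illustration, not a device model: the function names are hypothetical, and the latency-under-load curve assumes a single-server M/M/1 queue, which real SSDs and fabrics only loosely resemble.

```python
def iops_from_queue_depth(queue_depth: int, latency_s: float) -> float:
    """Little's Law: outstanding I/Os = IOPS * latency, so IOPS = QD / latency."""
    if queue_depth < 1 or latency_s <= 0:
        raise ValueError("queue_depth must be >= 1 and latency_s must be > 0")
    return queue_depth / latency_s


def latency_under_load(service_time_s: float, utilization: float) -> float:
    """Mean response time of an M/M/1 queue: S / (1 - rho).

    Assumption: one server with random arrivals. It is used here only to
    show why latency rises sharply as a device approaches saturation.
    """
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1)")
    return service_time_s / (1.0 - utilization)


if __name__ == "__main__":
    # Hypothetical device: 100 microsecond service time.
    for qd in (1, 8, 32):
        print(f"QD={qd:>2}  ideal IOPS={iops_from_queue_depth(qd, 100e-6):,.0f}")
    for rho in (0.5, 0.8, 0.95):
        print(f"util={rho:.2f}  latency={latency_under_load(100e-6, rho) * 1e6:.0f} us")
```

The first function gives an upper bound only: it assumes latency stays flat as queue depth grows, which stops being true once the media or the wire saturates. The second function shows the shape of that saturation curve.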

>_ Deep Dive Spoke: Compare the underlying design of ZFS, Ceph, and NVMe-oF in our guide: ZFS vs Ceph vs NVMe-oF Architecture.

Outcome: You will design storage systems aligned to actual workload behavior — not vendor marketing.

Phase 2: Software-Defined Storage & Distributed Algorithms

Storage is now software. Mastering SDS means understanding how physical media is abstracted into programmable, distributed pools of capacity by a software layer that often runs inside or alongside the hypervisor.

While our Modern Virtualization Pillar breaks down the compute abstraction of these platforms, this phase focuses strictly on the data layer. Whether you are deploying VMware vSAN, Nutanix AOS, or Ceph, the underlying data physics and distributed consensus rules remain constant.

Architects must understand:

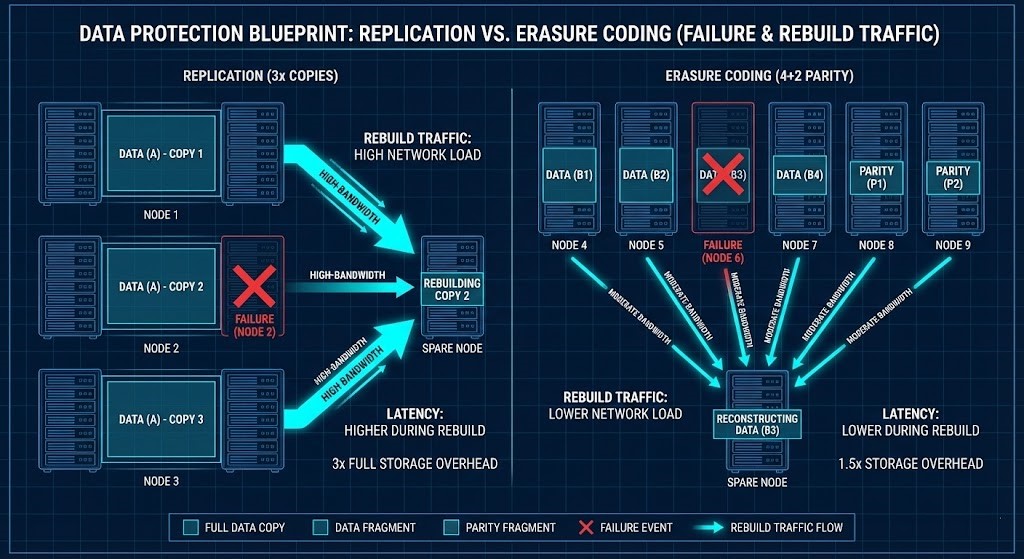

- Replication factors (RF2 vs. RF3) and their write penalties.

- Erasure Coding (EC) mathematical tradeoffs.

- Rebuild amplification and node failure physics.

- Data locality logic (keeping read I/O local to the compute node).

- Distributed consensus behavior and split-brain prevention.
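The replication and erasure coding tradeoffs in the list above come down to simple ratios. The sketch below is illustrative only: the `Protection` class and the 4+2 erasure coding profile are assumptions chosen for the example, and the figures cover full-stripe writes, ignoring metadata, small-write read-modify-write penalties, and reserved rebuild capacity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Protection:
    """A protection scheme described as data units plus redundancy units.

    RF2 is 1 data + 1 copy, RF3 is 1 data + 2 copies, EC k+m is k data + m parity.
    """
    name: str
    data_units: int
    redundancy_units: int

    @property
    def efficiency(self) -> float:
        """Fraction of raw capacity that holds unique user data."""
        return self.data_units / (self.data_units + self.redundancy_units)

    @property
    def failures_tolerated(self) -> int:
        """Simultaneous unit losses survivable without data loss."""
        return self.redundancy_units

    @property
    def raw_bytes_per_logical_byte(self) -> float:
        """Raw bytes written per user byte on a full-stripe write."""
        return (self.data_units + self.redundancy_units) / self.data_units


RF2 = Protection("RF2", 1, 1)
RF3 = Protection("RF3", 1, 2)
EC_4_2 = Protection("EC 4+2", 4, 2)  # hypothetical profile for illustration

if __name__ == "__main__":
    raw_tb = 100
    for p in (RF2, RF3, EC_4_2):
        print(f"{p.name:<7} usable={raw_tb * p.efficiency:6.1f} TB  "
              f"tolerates={p.failures_tolerated}  "
              f"write amp={p.raw_bytes_per_logical_byte:.2f}x")
```

Under these assumptions, EC 4+2 tolerates the same number of failures as RF3 while exposing twice the usable capacity. The cost shows up elsewhere, in parity computation and in heavier rebuild reads.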

>_ Deep Dive Spoke: See exactly how the hypervisor architecture impacts storage performance in our massive data study: Nutanix AHV vs vSAN 8 I/O Benchmark.

Engineering Workbench Integration:

- AI Ceph Throughput Calculator: Sizing a distributed storage backend for AI? Calculate your exact aggregate bandwidth, Ceph node counts, and Erasure Coding overhead before you buy hardware.

Outcome: You will model rebuild storms before they destabilize production.
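A first-order rebuild model can be sketched as data to move divided by the aggregate bandwidth the cluster is willing to spend on recovery. The function name and every parameter below are assumptions for illustration. The `read_amplification` factor stands in for the fact that rebuilding one lost erasure-coded chunk in a k+m layout generally requires reading k surviving chunks, while a replica rebuild reads one copy.

```python
def rebuild_hours(failed_tb: float,
                  participating_nodes: int,
                  per_node_mb_s: float,
                  read_amplification: float = 1.0) -> float:
    """Estimate rebuild duration in hours.

    Assumptions (hypothetical, simplified):
      - rebuild traffic spreads evenly across participating nodes
      - per_node_mb_s is the bandwidth each node dedicates to recovery
        after throttling to protect production I/O
      - read_amplification is 1 for replication, roughly k for EC k+m
    """
    if participating_nodes < 1 or per_node_mb_s <= 0 or failed_tb < 0:
        raise ValueError("invalid rebuild parameters")
    bytes_to_move = failed_tb * 1e12 * read_amplification
    aggregate_bytes_per_s = participating_nodes * per_node_mb_s * 1e6
    return bytes_to_move / aggregate_bytes_per_s / 3600


if __name__ == "__main__":
    replica = rebuild_hours(10, participating_nodes=5, per_node_mb_s=200)
    ec = rebuild_hours(10, participating_nodes=5, per_node_mb_s=200,
                       read_amplification=4)
    print(f"replica rebuild: {replica:.1f} h, EC 4+2 rebuild: {ec:.1f} h")
```

The useful output is the window of reduced redundancy rather than the exact number: the longer the rebuild runs, the longer a second failure can cause data loss or unavailability.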

Phase 3: Data Efficiency & Economic Determinism

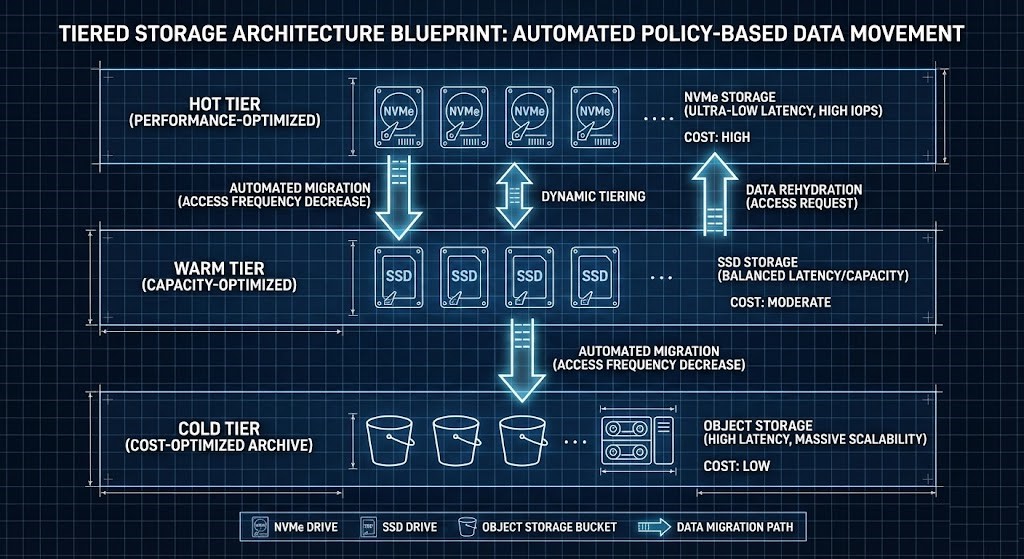

Capacity without efficiency is waste. You must learn to balance cost ($/GB) against compute overhead by utilizing the right data reduction mechanics.

Architects must balance:

- Inline vs. Post-Process Deduplication physics.

- Compression overhead and CPU Ready-time impacts.

- The operational risk of Thin Provisioning.

- Tiering strategies (hot/warm/cold data movement).

- The harsh tradeoff between $/GB and $/IOPS.
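The economics in the list above can be reduced to a handful of ratios. The helpers below are a minimal sketch with hypothetical names and inputs; they assume a constant data reduction ratio and linear growth, neither of which holds exactly in production.

```python
def effective_cost_per_gb(raw_cost_per_gb: float,
                          protection_efficiency: float,
                          reduction_ratio: float) -> float:
    """Cost per logical GB after protection overhead and data reduction.

    protection_efficiency: usable/raw fraction (0.5 for RF2, for example).
    reduction_ratio: logical/physical ratio from dedupe and compression.
    """
    if not 0 < protection_efficiency <= 1 or reduction_ratio <= 0:
        raise ValueError("invalid efficiency or reduction ratio")
    return raw_cost_per_gb / (protection_efficiency * reduction_ratio)


def cost_per_iops(system_cost: float, sustained_iops: float) -> float:
    """Cost per sustained I/O operation per second."""
    return system_cost / sustained_iops


def overcommit_ratio(provisioned_gb: float, usable_gb: float) -> float:
    """Thin provisioning exposure: promised capacity over physical capacity."""
    return provisioned_gb / usable_gb


def days_until_full(usable_gb: float, written_gb: float,
                    daily_growth_gb: float) -> float:
    """Runway before a thin pool is exhausted, assuming linear growth."""
    if daily_growth_gb <= 0:
        return float("inf")
    return max(usable_gb - written_gb, 0) / daily_growth_gb


if __name__ == "__main__":
    print(effective_cost_per_gb(0.10, protection_efficiency=0.5, reduction_ratio=2.0))
    print(overcommit_ratio(provisioned_gb=300, usable_gb=100))
    print(days_until_full(usable_gb=100, written_gb=70, daily_growth_gb=3))
```

Tracking the overcommit ratio next to the remaining runway is what turns thin provisioning from an operational risk into a managed one.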

Outcome: Storage economics become mathematically measurable, not speculative.

Phase 4: Resiliency, Observability & Survival Architecture

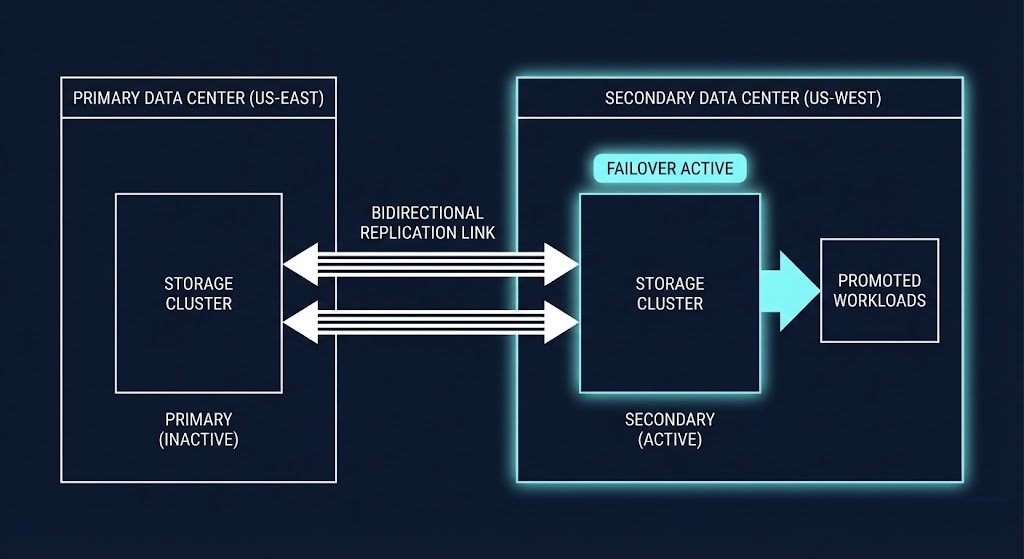

Storage must survive failure. Your storage fabric is the absolute last line of defense against a total corporate collapse, whether from hardware death or adversarial encryption.

Architects design for:

- Immutable, Write-Once-Read-Many (WORM) snapshots.

- Synchronous vs. Asynchronous multi-site replication.

- Silent data corruption detection and scrubbing.

- Non-disruptive firmware lifecycle management.

- Jurisdictional data sovereignty requirements.
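Silent corruption detection, from the list above, rests on one idea: record a checksum when a block is written, then periodically re-read the block and compare. The toy store below is a sketch of that idea only. The class is hypothetical, keeps everything in memory, and uses SHA-256 from the standard library for convenience; production systems typically use cheaper checksums and repair bad blocks from a replica or parity rather than just reporting them.

```python
import hashlib
from typing import List


class ChecksummedStore:
    """In-memory block store that keeps a checksum beside each block."""

    def __init__(self) -> None:
        self._blocks = {}     # block_id -> bytes
        self._checksums = {}  # block_id -> hex digest recorded at write time

    def write(self, block_id: int, data: bytes) -> None:
        """Store the block and the checksum of what was intended to be written."""
        self._blocks[block_id] = data
        self._checksums[block_id] = hashlib.sha256(data).hexdigest()

    def scrub(self) -> List[int]:
        """Re-read every block and return the ids whose checksum no longer matches."""
        return sorted(
            block_id
            for block_id, data in self._blocks.items()
            if hashlib.sha256(data).hexdigest() != self._checksums[block_id]
        )


if __name__ == "__main__":
    store = ChecksummedStore()
    store.write(0, b"payroll records")
    store.write(1, b"customer ledger")
    # Simulate a bit flip on the media that bypasses the write path.
    store._blocks[1] = b"customer ledgex"
    print("corrupt blocks:", store.scrub())
```

Without the scrub pass, the damaged block would only be discovered when an application reads it, which may be long after every good copy has aged out of retention.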

>_ Deep Dive Spoke: Learn how to separate compute scaling from storage scaling by integrating external arrays with HCI in: Breaking the HCI Silo: Nutanix Integration with Pure Storage.

Engineering Workbench Integration:

- Veeam Storage Estimator: Immutable retention consumes significant capacity, because locked restore points cannot be pruned early. Calculate your storage requirements for ransomware-resilient Grandfather-Father-Son (GFS) retention tiers.

Outcome: Data remains recoverable under ransomware, site loss, or silent corruption events.

Vendor Implementations Through an Architectural Lens

| Platform | Algorithm Model | Economic Model | Ideal Use Case |
|---|---|---|---|
| vSAN | Integrated with hypervisor | Core-aligned licensing | HCI environments |
| AOS | Distributed SDS | Bundled stack economics | Hyperconverged clusters |
| Ceph | CRUSH-based placement | Open & scalable | Sovereign & hyperscale |

Architectural decisions must be physics-driven, not feature-driven.

Continue the Architecture Path

Storage cannot be designed in a vacuum. Once you have mastered the physics of data persistence and resiliency on this page, your next step is to understand the networks that carry that data and the compute layer that processes it.

Frequently Asked Questions

Q: Is prior compute knowledge required?

A: It is highly recommended. Understanding CPU and memory scheduling and hypervisor fundamentals is critical to seeing how “Data Locality” impacts application performance.

Q: Are these examples vendor-neutral?

A: Yes. While we use Nutanix AOS, VMware vSAN, and Ceph as examples, the underlying data physics apply across all storage systems.

Q: Do I need hands-on experience for this?

A: Highly recommended. You cannot truly grasp the impact of an “Erasure Coding Rebuild” or a “Snapshot Commit” until you observe it under stress in a lab environment.

DETERMINISTIC STORAGE AUDIT

Storage is the persistent memory of your enterprise. Stop guessing at your deduplication physics, rebuild times, and NVMe-oF latency boundaries. Run your environment through our deterministic calculators to validate your architecture.

LAUNCH THE ENGINEERING WORKBENCH