Sizing On-Prem AI: An Architect’s Look at Nutanix’s New GPT-in-a-Box Workflow

The “T-Shirt Sizing” Era of AI is Over

For the last year, sizing AI workloads on-premises has felt a bit like the Wild West. We’ve been relying on rough spreadsheets, “t-shirt sizes” (Small, Medium, Large), and a fair amount of guesswork regarding inference overhead.

That changed today.

Nutanix released Sizer 6.0.94 (Release Date: 16-Dec-2025), and while the version number looks incremental, the capability jump is significant. The headliner is native support for GPT-in-a-Box 2.0, but for those of us digging into the BOMs (bills of materials), the updates to Nutanix Unified Storage (NUS) and the sizing-logic corrections are equally critical.

Here is the architectural breakdown of what’s new and why it matters for your next design.

GPT-in-a-Box 2.0: The End of “Guesstimation”

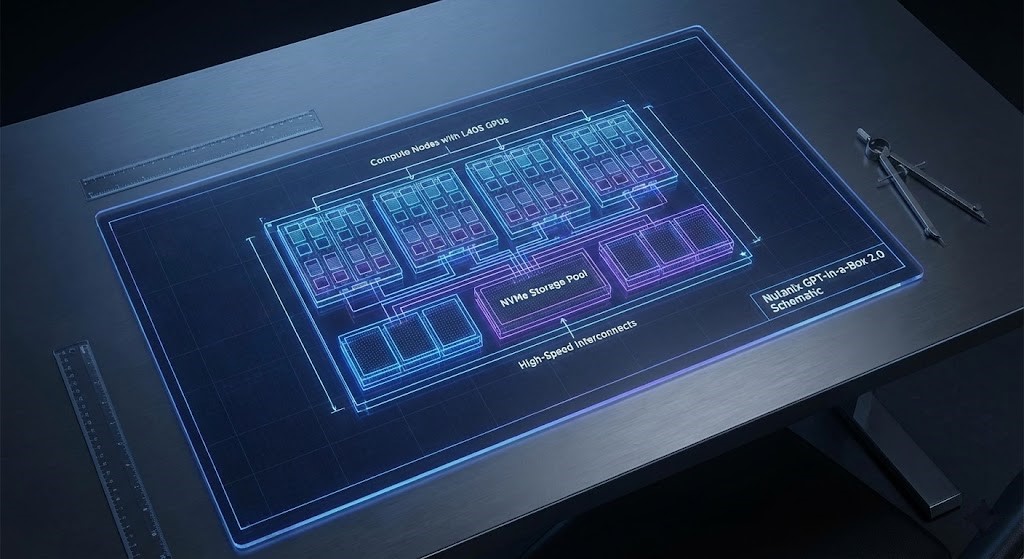

The biggest friction point in pitching on-prem AI has been the complexity of the hardware requirements. Sizer 6.0.94 introduces a dedicated workflow for Nutanix AI (NAI).

Instead of manually calculating how much VRAM a specific LLM needs or how many tokens per second your hardware can sustain, the Sizer now handles:

- AI Workload Planning: It calculates the specific compute, memory, and storage IOPS required for LLM inference tasks.

- GPU Guardrails: It ensures you are pairing the right GPUs (like the L40S) with the right nodes, eliminating the “Missing GPU” errors that plagued earlier manual configurations.

- Future-Proofing: The logic is designed to scale, allowing you to size for Day 1 inference and Day 2 growth without rebuilding the cluster design from scratch.
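To see what the workflow replaces, here is the kind of back-of-the-envelope VRAM math architects have been doing by hand. This is a minimal sketch, not Sizer's internal model: it assumes VRAM is dominated by model weights plus the KV cache, adds a flat 10% headroom (an assumption), and uses default layer and head counts roughly typical of an 8B-class model with grouped-query attention. The function names and every default are illustrative.

```python
import math

def estimate_inference_vram_gb(
    params_billion: float,
    bytes_per_param: float = 2.0,      # FP16/BF16 weights
    n_layers: int = 32,                # assumed 8B-class architecture
    n_kv_heads: int = 8,               # grouped-query attention KV heads
    head_dim: int = 128,
    context_tokens: int = 8192,
    concurrent_sequences: int = 8,
    kv_bytes: float = 2.0,             # FP16 KV cache entries
    overhead_fraction: float = 0.10,   # assumed runtime/activation headroom
) -> float:
    """Rough VRAM estimate in GB: weights + KV cache, plus a flat margin."""
    # (params_billion * 1e9 params) * bytes / (1e9 bytes per GB): the 1e9 cancels.
    weights_gb = params_billion * bytes_per_param
    # KV cache holds one K and one V vector per layer, KV head, token, and sequence.
    kv_bytes_total = (2 * n_layers * n_kv_heads * head_dim * kv_bytes
                      * context_tokens * concurrent_sequences)
    kv_cache_gb = kv_bytes_total / 1e9
    return (weights_gb + kv_cache_gb) * (1 + overhead_fraction)


def gpus_needed(total_vram_gb: float,
                vram_per_gpu_gb: float = 48.0,   # L40S ships with 48 GB
                usable_fraction: float = 0.9) -> int:
    """Whole GPUs required, keeping part of each card's VRAM in reserve."""
    return math.ceil(total_vram_gb / (vram_per_gpu_gb * usable_fraction))


if __name__ == "__main__":
    need = estimate_inference_vram_gb(8)
    print(f"8B model: ~{need:.1f} GB VRAM, {gpus_needed(need)} x L40S")
```

Treat the GPU count as a floor: the sketch ignores tensor-parallel communication overhead and the fact that inference engines often shard a model across 1, 2, 4, or 8 GPUs rather than an arbitrary number.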

The “Architect’s Fine Print”: NUS & Networking

While the AI features grab the marketing headlines, the changes to Nutanix Unified Storage (NUS) will physically change the hardware you order.

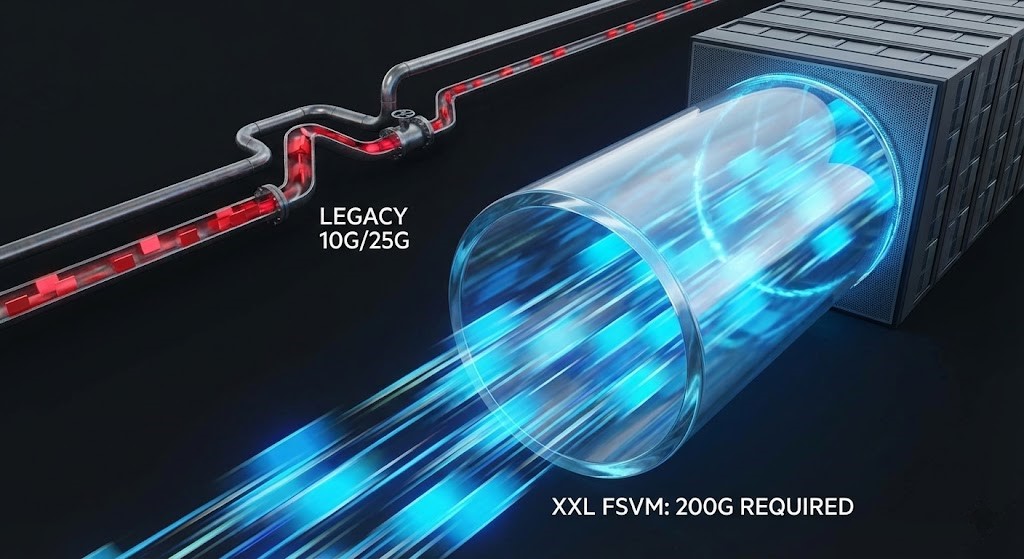

The 200G NIC Requirement

If you are designing high-performance file servers using the XXL FSVM profile, Sizer now enforces a hard requirement for a 200G NIC.

- Why this matters: If you are quoting a standard 25GbE or 100GbE environment, your design will fail validation. This ensures the physical pipe matches the software capabilities of the updated FSVM profiles.
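The guardrail itself is a simple threshold check. The sketch below is hypothetical: the `FileServerDesign` fields and the lookup table are illustrative, not Sizer's data model, and only the XXL-to-200G rule comes from this release. Profiles without an entry simply pass.

```python
from dataclasses import dataclass

# Minimum NIC speed (Gbps) per FSVM profile. Only the XXL -> 200G rule comes
# from the Sizer 6.0.94 change; add other profiles only with verified values.
MIN_NIC_GBPS = {"XXL": 200}


@dataclass
class FileServerDesign:
    fsvm_profile: str   # hypothetical field names, not Sizer's data model
    nic_gbps: int


def validate_nic(design: FileServerDesign) -> list[str]:
    """Return validation errors; an empty list means the NIC rule passes."""
    errors: list[str] = []
    required = MIN_NIC_GBPS.get(design.fsvm_profile)
    if required is not None and design.nic_gbps < required:
        errors.append(
            f"FSVM profile {design.fsvm_profile} requires a {required}G NIC, "
            f"but the design specifies {design.nic_gbps}G"
        )
    return errors


if __name__ == "__main__":
    print(validate_nic(FileServerDesign("XXL", 100)))
```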

EC-X Logic During Failure

This is a massive fix for capacity planning. Previously, when simulating a node failure, Sizer might have conservatively disabled Erasure Coding (EC-X) in its calculations, showing a terrifying spike in storage utilization that wouldn’t necessarily happen in real life.

- The Fix: The updated logic keeps EC-X applied if it was enabled initially. This provides a much more accurate representation of “n-1” capacity, preventing you from over-provisioning storage just to satisfy a broken simulation.
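A quick calculation shows how large the gap between the two behaviors can be. This is a simplified sketch under stated assumptions: a 4+1 EC-X stripe on an RF2 cluster, a configurable share of data that is actually erasure coded (EC-X works on write-cold data, so real clusters rarely reach 100%), and no allowance for CVM, metadata, or rebuild reserves. Setting `ec_fraction=0.0` mimics the old "disable EC-X on failure" behavior.

```python
def usable_capacity_after_failure(
    nodes: int,
    raw_tib_per_node: float,
    failed_nodes: int = 1,
    rf: int = 2,                 # replication factor for non-EC data
    ec_data: int = 4,            # assumed EC-X stripe: 4 data blocks...
    ec_parity: int = 1,          # ...plus 1 parity block
    ec_fraction: float = 1.0,    # share of data assumed to be erasure coded
) -> float:
    """Usable logical capacity (TiB) on the surviving nodes."""
    surviving_raw = (nodes - failed_nodes) * raw_tib_per_node
    ec_overhead = (ec_data + ec_parity) / ec_data          # 5/4 = 1.25x
    # Blend EC-X overhead with full-copy RF overhead for the rest of the data.
    blended_overhead = ec_fraction * ec_overhead + (1 - ec_fraction) * rf
    return surviving_raw / blended_overhead


if __name__ == "__main__":
    old = usable_capacity_after_failure(6, 20, ec_fraction=0.0)
    new = usable_capacity_after_failure(6, 20)
    print(f"N-1 usable: {old:.1f} TiB (EC-X dropped) vs {new:.1f} TiB (EC-X kept)")
```

On a hypothetical six-node cluster with 20 TiB raw per node, dropping EC-X in the failure simulation cuts N-1 usable capacity from 80 TiB to 50 TiB, which is exactly the kind of phantom shortfall that drives over-provisioning.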

Quality of Life & Bug Fixes

A few nagging issues have been resolved that will make the design process smoother:

- Cisco Guardrails: If you are building on UCS, Sizer now enforces memory configuration guardrails for single- and dual-socket M7 & M8 servers.

- AWS Pricing: The AWS bare metal pricing has been updated to reflect “Compute Savings Plans” (all upfront) rather than the older Reserved Instance model, giving a fairer TCO comparison.

- Imported Workloads: Fixed a critical bug where imported workloads showed incorrect core counts. Note: You should re-run sizing for any active designs that used imported data.
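The release notes do not spell out the exact UCS memory rules, so the following is only an illustration of what a memory guardrail typically checks. The rules are assumptions: DIMMs must be spread evenly across every channel of a socket, with at most two DIMMs per channel. The channel count is a parameter you must take from the specific CPU's documentation; 8 is used in the example purely for illustration.

```python
def check_memory_config(sockets: int, dimms_per_socket: int,
                        channels_per_socket: int,
                        max_dimms_per_channel: int = 2) -> list[str]:
    """Flag unbalanced or unsupported DIMM layouts (assumed rules, not Sizer's)."""
    errors: list[str] = []
    if sockets not in (1, 2):
        errors.append(f"{sockets} sockets: only single- and dual-socket servers are covered")
    if dimms_per_socket <= 0:
        errors.append("each populated socket needs at least one DIMM")
    elif dimms_per_socket % channels_per_socket != 0:
        # Uneven population leaves some channels with fewer DIMMs than others.
        errors.append(
            f"{dimms_per_socket} DIMMs cannot be spread evenly across "
            f"{channels_per_socket} channels"
        )
    elif dimms_per_socket // channels_per_socket > max_dimms_per_channel:
        errors.append(
            f"{dimms_per_socket // channels_per_socket} DIMMs per channel exceeds "
            f"the assumed limit of {max_dimms_per_channel}"
        )
    return errors


if __name__ == "__main__":
    print(check_memory_config(sockets=2, dimms_per_socket=12, channels_per_socket=8))
```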

The Foundation Still Matters

It is easy to get distracted by the shiny new AI capabilities in Sizer, but an AI workload is only as good as the underlying compute cluster it runs on.

Whether you are sizing for a massive GPT-in-a-Box deployment or a standard VDI cluster, the physics of hypervisor overhead and core contention remain exactly the same. Before you layer on AI requirements, you must nail the baseline compute sizing.
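The baseline math is worth writing down explicitly. This is a CPU-only sketch under assumed inputs: a target vCPU-to-physical-core ratio, a fixed number of cores reserved per node for the Nutanix CVM (the value you pass is an assumption; check current guidance for your platform), and N+1 spare capacity. It ignores memory, storage, and GPU constraints, any of which may end up being the real limiter.

```python
import math

def nodes_required(total_vcpus: int, vcpu_per_pcore: float,
                   cores_per_node: int, cvm_cores_per_node: int,
                   spare_nodes: int = 1) -> int:
    """CPU-only node count with a per-node CVM reservation and N+spare headroom."""
    usable_cores = cores_per_node - cvm_cores_per_node
    if usable_cores <= 0:
        raise ValueError("CVM reservation consumes every core on the node")
    # Physical cores needed to host all vCPUs at the target overcommit ratio.
    physical_cores_needed = total_vcpus / vcpu_per_pcore
    return math.ceil(physical_cores_needed / usable_cores) + spare_nodes


if __name__ == "__main__":
    # Hypothetical estate: 800 vCPUs at 4:1 on 64-core nodes with 8 cores for the CVM.
    print(nodes_required(800, 4.0, 64, 8))
```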

>_ Engineering Action: If you are evaluating the shift to Nutanix, benchmark your legacy footprint and calculate your exact Broadcom VVF/VCF core-to-license exposure using our VMware Core Calculator in the Engineering Workbench.
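The core-to-license exposure starts with one rule that catches many legacy estates: Broadcom's per-core VCF/VVF subscriptions count a minimum of 16 cores per CPU, so low-core-count sockets are billed as if they had 16. The sketch below applies only that per-CPU minimum; it is not the logic of the VMware Core Calculator, it ignores pricing and any order-level minimums Broadcom may apply, and you should verify the current terms before quoting.

```python
MIN_CORES_PER_CPU = 16  # per-CPU minimum for Broadcom per-core VCF/VVF subscriptions

def licensed_cores(hosts: list[tuple[int, int]]) -> int:
    """Sum billable cores; each entry is (sockets, physical cores per socket)."""
    # A socket with fewer than 16 physical cores is still counted as 16.
    return sum(sockets * max(cores, MIN_CORES_PER_CPU) for sockets, cores in hosts)


if __name__ == "__main__":
    # Hypothetical estate: four 2x12-core hosts and two 2x32-core hosts.
    estate = [(2, 12)] * 4 + [(2, 32)] * 2
    print(licensed_cores(estate))
```

In this hypothetical estate the four older hosts have 96 physical cores but are billed for 128, which is why benchmarking the legacy footprint matters before comparing platforms.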

Continue the Path

To master the infrastructure physics required to support on-premises LLMs and high-performance workloads, explore our foundational strategy guides:

- Modern Virtualization Learning Path

- AI Architecture Strategy Guide

- Hyper-V vs Nutanix AHV Sizing – A Decision Framework (Deep Dive)


Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.

This architectural deep-dive contains affiliate links to hardware and software tools validated in our lab. If you make a purchase through these links, we may earn a commission at no additional cost to you. This support allows us to maintain our independent testing environment and continue producing ad-free strategic research. See our Full Policy.