Sovereign Infrastructure Strategy: When Hybrid Cloud Becomes Dependency with Latency

Why Sovereignty Is a Control-Plane Problem — Not a Marketing Feature

For a decade, “hybrid cloud” was positioned as independence. In practice, it usually meant placing infrastructure on-premises while keeping identity, APIs, licensing checks, certificate validation, and automation pipelines tethered to a hyperscale region.

If the fiber was cut, the illusion collapsed.

Workloads might continue running — but identity failed. Control APIs became unreachable. Certificate validation began to decay. Change operations stopped. Within hours or days, the environment was operationally paralyzed.

That is not sovereignty. It is dependency with latency.

A disconnected cloud is something else entirely — an environment that can operate indefinitely without external control-plane reachability. The distinction between data-plane isolation and control-plane autonomy is where most architectures fail. For the foundational cloud architecture framework that underpins everything in this guide, start with the Cloud Architecture Learning Path.

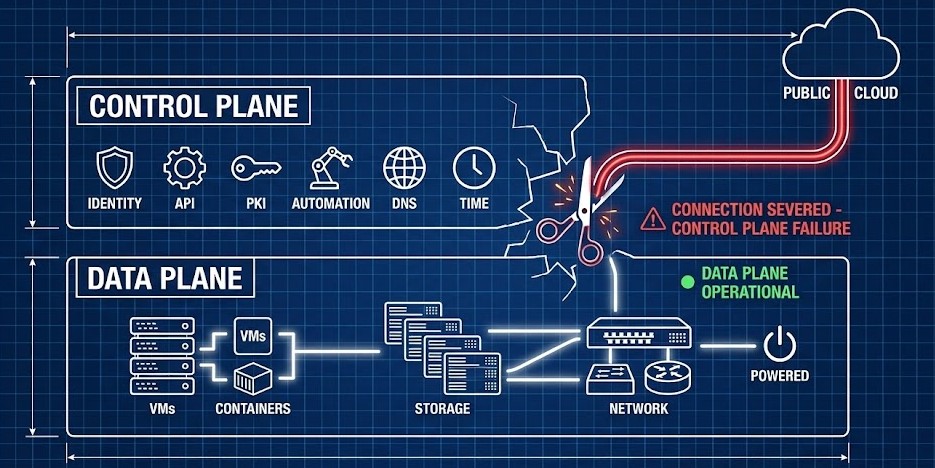

Control Plane vs. Data Plane: The Misunderstood Boundary

Most edge and hybrid designs focus on isolating the data plane — local compute, local storage, segmented networking, cached container images. Engineers draw the network diagram, segment the VLANs, and declare the environment sovereign.

But sovereignty is not about traffic flow. It is about authority.

The control plane determines who can authenticate, who can change state, who can deploy, who can rotate credentials, who can audit, and who can recover. If those capabilities require external identity providers, public certificate authorities, SaaS consoles, or remote APIs — the architecture remains externally dependent regardless of where workloads run.

The hypervisor layer is where this distinction becomes most operationally visible. The difference between a platform that maintains management authority during a network partition and one that freezes is the difference between Nutanix Prism’s local control plane and a vCenter deployment that depends on external Platform Services Controllers (PSCs, a topology that predates vSphere 7) and on licensing validation. This is covered in detail in Nutanix vs VMware: Availability vs Authority in the Post-Broadcom Datacenter.

The Physical Litmus Test

Unplug the fiber. Ask these questions:

- Can you still deploy new infrastructure?

- Can you rotate credentials?

- Can you approve RBAC changes?

- Can you restore from backup without hitting an external rehydration dependency?

If the answer to any of these is no, the environment is not sovereign. It is delayed dependency. Isolation of traffic does not equal isolation of authority.

The Hidden Outbound Graph

Disconnected environments rarely fail because of storage limits or compute exhaustion. They fail because of invisible outbound dependencies. Every workload sits on a chain of trust and authority:

Workload → Identity → PKI → DNS → Time → Artifact → Licensing → Telemetry

Even in environments labeled “air-gapped,” outbound calls often exist for OIDC token refresh, STS validation, OCSP certificate checks, CRL retrieval, SaaS license verification, artifact pulls, NTP synchronization, and log forwarding.

These dependencies are rarely mapped end-to-end. During isolation, they become the failure points. Sovereignty begins with dependency graph mapping — not subnet segmentation.
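One way to start that mapping is to record each service's direct dependencies and walk the graph for anything that leaves the isolation boundary. The sketch below is illustrative only: the service names, the `ext:` prefix convention, and the `external_paths` helper are assumptions made for the example, not output from any tool named in this guide.

```python
from collections import deque

# Hypothetical dependency map: each service lists what it calls directly.
# Names prefixed with "ext:" stand for endpoints outside the isolation boundary.
DEPENDENCIES = {
    "workload": ["identity", "artifact-registry", "dns"],
    "identity": ["pki", "ext:oidc-jwks"],
    "pki": ["time", "ext:ocsp-responder"],
    "dns": [],
    "time": ["ext:public-ntp"],
    "artifact-registry": ["ext:license-check"],
}


def external_paths(graph, start):
    """Return every dependency path from `start` that ends at an external endpoint."""
    found = []
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        for dep in graph.get(path[-1], []):
            if dep in path:  # ignore cycles
                continue
            new_path = path + [dep]
            if dep.startswith("ext:"):
                found.append(new_path)
            else:
                queue.append(new_path)
    return found


paths = external_paths(DEPENDENCIES, "workload")
for path in paths:
    print(" -> ".join(path))
```

Each printed path is a chain of authority that breaks when the link goes down. Note that the deepest path here, through identity, PKI, and time, is one that a subnet diagram would never show.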

Silent outbound network loops — particularly OIDC and private endpoint validation calls that look like internal traffic but resolve externally — can be surfaced using the Azure Private Endpoint Auditor Tool. For Azure-hosted workloads, this is the first step in the dependency graph audit before claiming sovereignty.

The broader infrastructure drift that accumulates in sovereign environments — manual console changes that bypass IaC pipelines and create undocumented outbound dependencies — is addressed in the Infrastructure Drift Detection Guide. In a sovereign environment, drift is not just a configuration problem — it is a trust boundary problem.

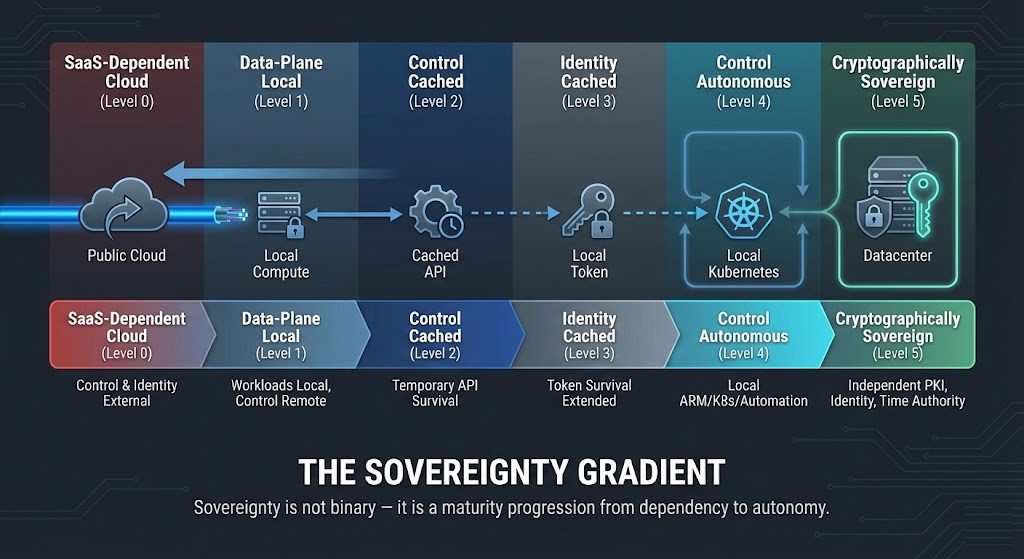

The Sovereignty Gradient

Disconnected cloud is not binary. It exists on a maturity spectrum:

- Stage 0 — Full SaaS Dependency: Identity, control, and validation fully external. Workloads run locally but authority lives remotely. Survivability: minutes

- Stage 1 — Cached Dependencies: Token caching and API response caching extend survivability from minutes to hours — but the architecture remains fundamentally dependent

- Stage 2 — Control Plane Autonomy: Infrastructure automation engines, identity authorities, PKI services, DNS zones, artifact registries, and logging systems all run locally and require no external validation. This is where true sovereignty begins

- Stage 3 — Cryptographic Sovereignty: Independent certificate authority chains, internal revocation mechanisms, internal time authority, local hardware security modules, and deterministic supply-chain validation
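The stages above can be reduced to a small classifier for use in an architecture review. This is a rough sketch under simplifying assumptions: the `Posture` fields and the `sovereignty_stage` function are hypothetical names, and each stage is collapsed into a single yes/no answer that a real assessment would break down much further.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Posture:
    # Tokens and API responses are cached locally.
    caches_dependencies: bool
    # Automation, identity, PKI, DNS, registry, and logging all run locally.
    local_control_plane: bool
    # Own CA chain, revocation, time authority, HSMs, supply-chain validation.
    independent_crypto: bool


def sovereignty_stage(posture: Posture) -> int:
    """Map a posture to stage 0-3 of the sovereignty gradient."""
    if posture.local_control_plane and posture.independent_crypto:
        return 3
    if posture.local_control_plane:
        return 2
    if posture.caches_dependencies:
        return 1
    return 0


print(sovereignty_stage(Posture(True, False, False)))
print(sovereignty_stage(Posture(True, True, False)))
```

The ordering is deliberate: independent cryptography without a local control plane does not count as Stage 3 in this sketch, because the higher stage presupposes the lower one.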

Sovereignty is a progression — not a checkbox. The foundational framework for navigating this spectrum is in the Sovereign Cloud vs Public Cloud: Navigating Compliance in a Non-Deterministic Landscape pillar.

For practical Day-2 implementation of control plane autonomy at the Kubernetes orchestration layer — where identity federation failures surface first during isolation events — see the Kubernetes Day-2 Operations Guide.

The Failure Modes of Isolation

Air-gapped and disconnected systems fail in predictable patterns. Understanding the sequence prevents the 3 AM phone call.

Control Plane Paralysis

When the public API becomes unreachable, workloads continue running — but nothing can change. No new deployments. No RBAC modifications. No credential rotation. Operational authority freezes. Without an external source of truth, configuration drift becomes entirely a local responsibility — and undocumented drift that accumulated before the isolation event becomes the primary recovery obstacle. See the Infrastructure Drift Detection Guide for the IaC governance framework that prevents this.

Identity Authority Loss

OIDC JWKS endpoints become unreachable. STS tokens expire. Federation chains collapse. Systems continue running — but administrators cannot authenticate. Identity fails silently before compute does.

This failure mode surfaces in container orchestrators first. The Kubernetes ImagePullBackOff pattern during IAM federation failure is one of the clearest early warning signals that identity authority is degrading — before the broader authentication infrastructure collapses.

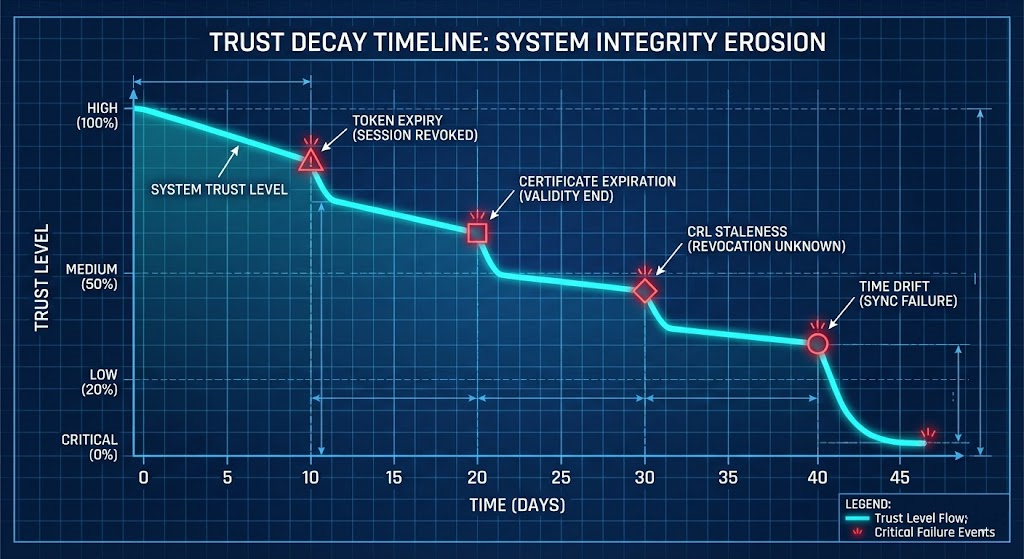

Cryptographic Expiry

Certificates expire. Revocation lists go stale. OCSP checks fail. Trust decays over time in a mathematically predictable pattern that most architecture reviews ignore entirely. The half-life of your trust infrastructure determines your actual sovereignty duration — not your fiber uptime SLA.
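To make that decay concrete, the remaining lifetime of a certificate can be computed from its `notAfter` field and compared against the isolation window you need to survive. The following is a minimal sketch using `ssl.cert_time_to_seconds` from the Python standard library; the helper names, the sample timestamp, and the isolation start date are assumptions chosen for illustration.

```python
import ssl
from datetime import datetime, timezone

SECONDS_PER_DAY = 86_400


def days_until_expiry(not_after: str, now: datetime) -> float:
    """Days between `now` and a certificate's notAfter timestamp."""
    expiry_epoch = ssl.cert_time_to_seconds(not_after)
    return (expiry_epoch - now.timestamp()) / SECONDS_PER_DAY


def survives_isolation(not_after: str, now: datetime, isolation_days: int) -> bool:
    """True if the certificate is still valid after `isolation_days` offline."""
    return days_until_expiry(not_after, now) >= isolation_days


# Hypothetical values: isolation begins four days before the certificate expires.
isolation_start = datetime(2018, 1, 1, 9, 34, 43, tzinfo=timezone.utc)
not_after = "Jan  5 09:34:43 2018 GMT"

print(days_until_expiry(not_after, isolation_start))
print(survives_isolation(not_after, isolation_start, 30))
```

Running a check like this across every certificate in the trust chain, with the target isolation duration as the threshold, turns "trust decays" into a list of specific certificates that will fail first.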

For data protection architectures that must survive identity authority loss, and specifically for how Rubrik and Veeam behave differently when the management endpoint loses external federation, see Rubrik vs Veeam in the Sovereign Backup Estate.

Time: The Overlooked Control Plane

Air-gapped environments consistently overlook time synchronization until it becomes a cascading failure vector. Kerberos authentication, TLS certificate validation, JWT token lifetimes, and OCSP verification all depend on synchronized clocks across all nodes in the trust chain.

Without an internal NTP hierarchy, ideally multi-stratum and physically independent from the external network, clock drift compounds every other failure mode. Kerberos tolerates 5 minutes of clock skew by default as part of its replay protection; once a node drifts past that tolerance, its authentication requests are rejected, and administrators are locked out of systems that are otherwise fully operational.

Time is part of the control plane. If you do not own time, you do not own trust.
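A drift check against the internal time authority is simple to sketch. The example below assumes you can already collect each node's clock reading (how you do that is out of scope here); the node names, the `nodes_out_of_tolerance` helper, and the readings are hypothetical, and the 5-minute limit reflects the common Kerberos default rather than a value read from your realm configuration.

```python
from datetime import datetime, timedelta, timezone

# Common Kerberos default; confirm the value configured in your own realm.
MAX_SKEW = timedelta(minutes=5)


def nodes_out_of_tolerance(reference, node_clocks, max_skew=MAX_SKEW):
    """Return {node: skew} for every node whose clock exceeds `max_skew`."""
    offenders = {}
    for node, clock in node_clocks.items():
        skew = abs(clock - reference)
        if skew > max_skew:
            offenders[node] = skew
    return offenders


# Hypothetical readings taken against the internal stratum-1 source.
reference = datetime(2025, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
clocks = {
    "dc-01": reference + timedelta(seconds=2),
    "hypervisor-07": reference - timedelta(minutes=4, seconds=30),
    "backup-proxy-02": reference + timedelta(minutes=6),
}

print(nodes_out_of_tolerance(reference, clocks))
```

In this sample only `backup-proxy-02` is flagged, but `hypervisor-07` is 30 seconds from the limit, which is the kind of node an isolation test should watch.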

The Half-Life of Trust

Disconnected cloud is highly duration-dependent. A system engineered to survive a 1-hour fiber cut may catastrophically fail at Day 30. Trust components expire on different schedules:

- JWT tokens: hours to days

- TLS certificates: weeks to years

- CRL freshness: hours to days

- Kerberos tickets: hours

- HSM lease renewals: configurable but often overlooked

Map the shortest expiry in your trust chain. That is your actual sovereignty duration — regardless of what your SLA documentation claims.
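As a worked illustration, the shortest expiry is just the minimum over the lifetimes in the chain. The lifetimes below are hypothetical placeholders picked from within the ranges listed above, and the sketch takes the worst-case view that nothing in the chain can be renewed while isolated.

```python
from datetime import timedelta

# Hypothetical remaining lifetimes at the moment isolation begins.
TRUST_CHAIN = {
    "jwt_access_token": timedelta(hours=12),
    "kerberos_ticket": timedelta(hours=10),
    "crl_freshness": timedelta(hours=24),
    "hsm_lease": timedelta(days=30),
    "tls_certificate": timedelta(days=90),
}


def sovereignty_duration(chain):
    """Return the (component, lifetime) pair that expires first."""
    weakest = min(chain, key=chain.get)
    return weakest, chain[weakest]


component, lifetime = sovereignty_duration(TRUST_CHAIN)
print(f"{component} limits sovereignty to {lifetime}")
```

With these numbers the answer is 10 hours, set by the Kerberos ticket, even though the TLS certificate is good for 90 days. Components that can be renewed from a local authority would drop out of the map, which is exactly the point of the later stages of the gradient.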

Identity Severance Engineering

Identity is the hardest sovereignty problem to solve and the most common reason disconnected architectures fail in practice. True autonomy requires:

- An independent identity authority with local OIDC provider capability

- An independent RBAC data store with offline consistency guarantees

- An internal certificate authority chain with local issuance capability

- An offline CRL or OCSP stapling strategy

- Local HSM-backed signing keys that do not require external activation

If identity must call home — even once per token lifecycle — the environment is not sovereign. It is sovereign-adjacent, which is a different engineering condition with a different failure profile.
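A quick first pass at finding "call home" behavior is to list every identity and PKI endpoint in your configuration and flag any hostname outside your internal namespace. This is a sketch only: the endpoint URLs, the `.corp.internal` suffix, and the `external_endpoints` helper are assumptions, and a hostname that looks internal can still resolve externally, so this complements rather than replaces a network-level audit.

```python
from urllib.parse import urlsplit

# Hypothetical internal DNS suffixes for the sovereign environment.
INTERNAL_SUFFIXES = (".corp.internal",)

# Hypothetical endpoints gathered from identity and PKI configuration.
ENDPOINTS = {
    "oidc_issuer": "https://idp.corp.internal/realms/ops",
    "jwks_uri": "https://login.example.com/.well-known/jwks.json",
    "ocsp_responder": "http://ocsp.example.net",
    "crl_distribution": "http://pki.corp.internal/crl/root.crl",
}


def external_endpoints(endpoints, internal_suffixes=INTERNAL_SUFFIXES):
    """Return {name: hostname} for endpoints outside the internal namespace."""
    external = {}
    for name, url in endpoints.items():
        host = urlsplit(url).hostname or ""
        if not host.endswith(internal_suffixes):
            external[name] = host
    return external


print(external_endpoints(ENDPOINTS))
```

Here the issuer is local but the signing keys and revocation checks are not, which is the sovereign-adjacent condition described above.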

AI and the Return of Data Gravity

AI infrastructure has accelerated the sovereignty discussion beyond compliance into competitive strategy. Proprietary datasets sent to public inference APIs introduce IP risk, jurisdictional uncertainty, and compliance exposure simultaneously.

Fine-tuned model weights trained on internal data are strategic assets. They must be stored, versioned, integrity-verified, and deployed within sovereign architectural boundaries — not cached in a hyperscaler’s inference endpoint.

Data gravity dictates that compute must eventually move to where the data lives. For sovereign AI deployments, this means on-premises GPU infrastructure with local inference serving. The hardware architecture decisions — TPU vs GPU, NVLink topology, thermal density — are covered in the Sovereign AI Private Infrastructure Architecture guide.

For the egress cost physics that make data gravity a financial reality — not just a theoretical concern — see The Physics of Data Egress. The same economics that make it expensive to move data out of a hyperscaler make it strategically rational to keep sensitive training data on sovereign infrastructure from day one.

The Cost of Sovereignty

Disconnected cloud is not inherently superior. It is expensive.

You lose elastic burst capacity. Hardware must be sized for peak load, not average load. You own the PKI lifecycle, the identity lifecycle, the patch validation pipeline, the artifact promotion process, and the hardware refresh cycles — without hyperscaler operational leverage.

Sovereignty is justified when the blast radius of hyperscaler lockout, regulatory violation, or IP leakage exceeds the operational cost of autonomy. Architecture — not ideology — should determine the decision.
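One way to frame that comparison, offered here as an assumption rather than a method from this guide, is to weigh the expected annual loss from a lockout, violation, or leak against the annual cost of running autonomously. Every figure below is a hypothetical placeholder, and `sovereignty_justified` is an illustrative helper name.

```python
def sovereignty_justified(annual_probability, impact_cost, annual_autonomy_cost):
    """Compare expected annual loss against the yearly cost of autonomy.

    Returns (justified, expected_annual_loss).
    """
    expected_annual_loss = annual_probability * impact_cost
    return expected_annual_loss > annual_autonomy_cost, expected_annual_loss


# Hypothetical inputs: a 1-in-8 yearly chance of a 16M event
# versus 1.5M per year to own PKI, identity, patching, and hardware refresh.
justified, loss = sovereignty_justified(
    annual_probability=0.125,
    impact_cost=16_000_000,
    annual_autonomy_cost=1_500_000,
)
print(justified, loss)
```

The value of the exercise is less the final number than the fact that it forces the probability and impact estimates into the open, where they can be challenged.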

The Reversibility Test: If you cannot remove your hyperscaler without redesigning your control plane, you do not have sovereignty. You have a managed dependency that you’ve chosen not to examine.

For the cloud egress and storage cost modeling that quantifies the financial side of this decision, the Cloud Restore Calculator surfaces the actual cost delta between sovereign on-premises backup and cloud-dependent recovery — a number that often reframes the sovereignty investment as cost-neutral within 18-24 months.

Actionable Takeaway: The Disconnected Cloud Audit

Below is a condensed operational checklist to validate your isolation architecture. Use this directly or download the structured audit worksheet.

1. Control Plane Locality Audit

- Can new infrastructure be deployed during full internet isolation?

- Can automation pipelines execute locally without public APIs?

- Can credentials and secrets be rotated without external reachability?

- Can RBAC changes be approved and enforced locally?

- Can backups be restored without public endpoints?

- Can failed nodes be rebuilt from local artifacts?

2. Identity Independence Audit

- Is the identity authority fully local?

- Do OIDC token refreshes require public endpoints?

- Can federation survive 30+ days offline?

- Are signing keys locally controlled and HSM-backed?

- Is RBAC policy data stored locally?

3. Cryptographic Sovereignty Audit

- Is there an independent internal CA chain?

- Can certificates be renewed offline?

- Are CRLs distributed internally?

- Does key rotation require public KMS endpoints?

- Can trust remain valid after 30+ days of isolation?

4. Time Authority Audit

- Is there an internal NTP hierarchy?

- Are multiple stratum levels configured?

- Is there an independent trusted time source?

- Has clock drift tolerance been tested under isolation?

5. Supply Chain Audit

- Is there a local artifact registry?

- Are container images and packages mirrored internally?

- Is there an offline vulnerability database mirror?

- Can patching occur without public pulls?

- Are artifacts cryptographically verified internally?

6. 30-Day Isolation Simulation

- Day 1: Internet loss — what fails immediately?

- Day 7: Certificate nearing expiry — what breaks?

- Day 15: Token refresh cycle — what depends on external identity?

- Day 30: Patch release required — can it be applied offline?

- Day 45: Hardware replacement — can a node be rebuilt locally?
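Before running the simulation for real, a tabletop version can be scripted from an inventory of trust components. The sketch below is a simplified model under stated assumptions: the component names, lifetimes, and "renewable offline" flags are hypothetical, and a component is treated as failed once its lifetime elapses with no local way to renew it.

```python
from datetime import timedelta

# Hypothetical inventory: (name, lifetime from isolation start, renewable offline?)
COMPONENTS = [
    ("saas-license-check", timedelta(days=0), False),
    ("edge-tls-certificate", timedelta(days=7), True),
    ("federated-oidc-token", timedelta(days=15), False),
    ("os-patch-baseline", timedelta(days=30), True),
    ("vendor-signed-firmware", timedelta(days=45), False),
]
CHECKPOINTS = [1, 7, 15, 30, 45]


def failures_by_checkpoint(components, checkpoints):
    """Return {day: [components that first fail on or before that day]}."""
    report = {}
    already_failed = set()
    for day in sorted(checkpoints):
        newly_failed = [
            name
            for name, lifetime, renewable_offline in components
            if not renewable_offline
            and lifetime <= timedelta(days=day)
            and name not in already_failed
        ]
        already_failed.update(newly_failed)
        report[day] = newly_failed
    return report


report = failures_by_checkpoint(COMPONENTS, CHECKPOINTS)
for day, failed in report.items():
    print(f"Day {day}: {failed or 'nothing new fails'}")
```

Items marked renewable offline never appear in the report, which is the outcome the audit sections above are meant to produce for every row in the inventory.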


Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.
