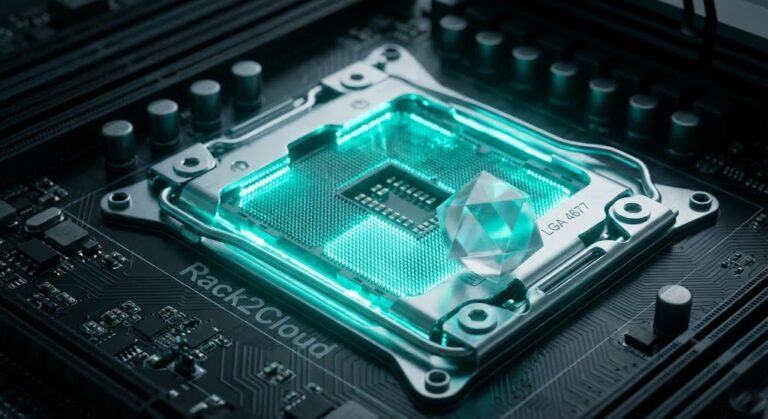

The CPU Strikes Back: Architecting Inference for SLMs on Cisco UCS M7

Small language model (SLM) inference on CPUs is the most underserved workload category in enterprise AI architecture today. In the current AI gold rush, the industry-standard advice has become lazy: “If you want to do AI, buy an NVIDIA H100.” For training a massive foundation model? Yes. For running GPT-4-scale services? Absolutely, as we covered in…