PersistentVolumes vs StorageClasses: When You Actually Need Each

The PersistentVolume vs StorageClass confusion is not a syntax problem. It is an architectural model problem.

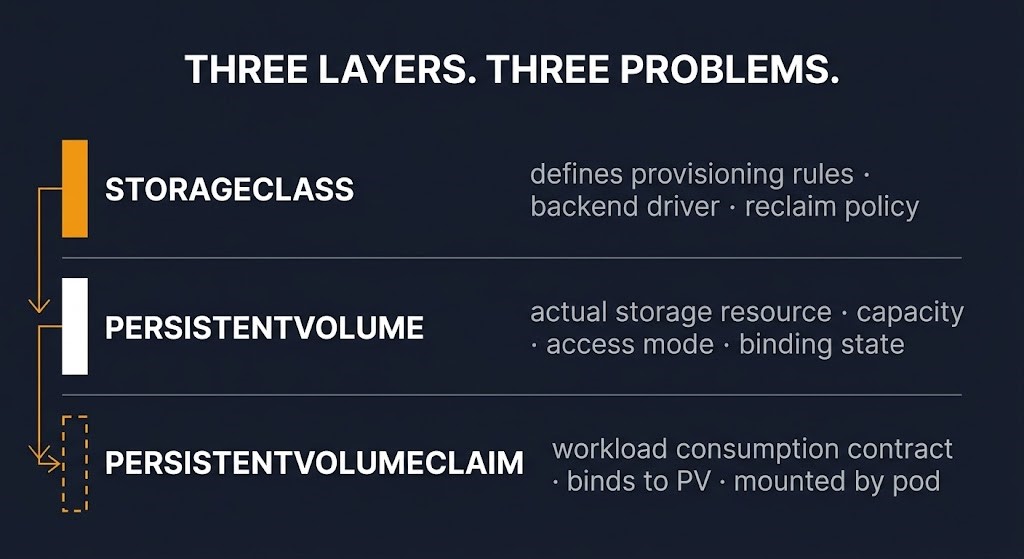

Teams get confused because they compare the factory to the disk and forget the claim is what the workload actually touches. PersistentVolume and StorageClass are not alternatives. They operate at different layers of the same provisioning stack — and PersistentVolumeClaim sits between the workload and both of them.

The PersistentVolume vs StorageClass decision only makes sense once the PVC is in the picture. The real chain is:

StorageClass → PersistentVolume → PersistentVolumeClaim → Pod

Once that chain is clear, the decision is not “PV or StorageClass.” The decision is static provisioning or dynamic provisioning — and PVC exists in both models.

PersistentVolume vs StorageClass: The Layer Distinction

The three objects own three different problems:

StorageClass is the provisioning policy. It defines how storage gets created — which backend driver, which parameters, which reclaim behavior. It does not hold data. It defines the rules under which storage is produced.

PersistentVolume is the storage resource. It is the actual disk — capacity, access mode, reclaim policy, binding state. It exists independently of any workload. In dynamic provisioning, StorageClass creates it. In static provisioning, an admin creates it directly.

PersistentVolumeClaim is the consumption contract. It is how a workload requests storage. The pod does not mount a PV directly — it mounts a PVC. The PVC binds to a PV. The PV is backed by real storage. The workload only ever sees the claim.
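A minimal sketch of that contract, assuming a cluster with a default StorageClass available. The names `app-data` and `app`, the image, and the mount path are placeholders rather than anything prescribed here. The point to notice is that the Pod spec names the claim and never the PV.

```yaml
# The claim: what the workload asks for.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: app-data              # hypothetical claim name
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
---
# The workload: mounts the claim by name. No PV appears anywhere in the Pod spec.
apiVersion: v1
kind: Pod
metadata:
  name: app                   # hypothetical pod name
spec:
  containers:
    - name: app
      image: nginx:1.27       # placeholder image
      volumeMounts:
        - name: data
          mountPath: /var/lib/app
  volumes:
    - name: data
      persistentVolumeClaim:
        claimName: app-data   # the only storage object the Pod references
```

Which PV ends up behind `app-data` is decided by binding, not by the Pod.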

This is the layer that teams most commonly skip in their mental model. When a PVC binds successfully and a pod mounts cleanly, the entire chain worked. When something breaks — or when data disappears — the failure is almost always in the reclaim policy or the provisioning model, not in the claim itself.

Understanding which layer owns the problem is the first diagnostic step. Kubernetes resource management operates the same way — the object the workload consumes is not always the object where the failure lives.

Dynamic Provisioning: When You Need a StorageClass

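Dynamic provisioning is the correct default for most Kubernetes-native workloads. When a PVC is created, the provisioner named in its StorageClass (typically the external provisioner that ships with a CSI driver) picks up the request, creates the backing volume, produces a PV automatically, and binds the claim. The StorageClass itself is passive: it only supplies the rules. The workload gets storage without any manual intervention.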
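As a rough illustration, a StorageClass and a claim that targets it might look like the following. The provisioner `csi.example.com` and the object names are hypothetical; a real cluster would use the provisioner string published by its CSI driver, and any `parameters` block is driver-specific, so it is left out.

```yaml
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: general-purpose           # hypothetical class name
provisioner: csi.example.com      # hypothetical; use your CSI driver's provisioner name
reclaimPolicy: Delete             # stated explicitly; Delete is also what applies if omitted
allowVolumeExpansion: true
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: reports-data              # hypothetical claim name
spec:
  storageClassName: general-purpose   # selects the policy above
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 20Gi
```

No PersistentVolume manifest is written by hand in this model. The PV is created by the provisioner in response to the claim.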

Use dynamic provisioning when:

- Workloads are Kubernetes-native and their storage should be created and released along with the workload

- StatefulSets require per-replica volumes provisioned on demand

- Development and staging environments need storage that appears and disappears with workloads

- The underlying storage platform supports a CSI driver (most modern platforms do)
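The StatefulSet case in the list above can be sketched with `volumeClaimTemplates`. This assumes a StorageClass named `standard-rwo` and a headless Service named `db` already exist; both names, the image, and the mount path are hypothetical.

```yaml
apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: db
spec:
  serviceName: db                 # assumes a headless Service named "db" exists
  replicas: 3
  selector:
    matchLabels:
      app: db
  template:
    metadata:
      labels:
        app: db
    spec:
      containers:
        - name: db
          image: registry.example.com/db:1.0   # hypothetical image
          volumeMounts:
            - name: data
              mountPath: /var/lib/db
  # One PVC is created per replica from this template:
  # data-db-0, data-db-1, data-db-2
  volumeClaimTemplates:
    - metadata:
        name: data
      spec:
        storageClassName: standard-rwo         # hypothetical class, assumed to exist
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 50Gi
```

Each replica gets its own claim and its own dynamically provisioned PV, which is difficult to reproduce by hand at any scale.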

Dynamic provisioning is not just convenient. It is the correct architectural model for workloads whose storage is created and retired along with them. The StorageClass is the policy layer. PVC is the request. The PV is produced automatically.

The failure mode in dynamic provisioning is not the provisioning itself — it is the reclaim policy attached to the StorageClass. More on that below.

Static Provisioning: When You Need a Static PV

Static provisioning is the correct model when the storage exists before Kubernetes does — or must survive after it. An admin creates the PV manually, pointing it at a specific storage backend. A PVC then binds to that pre-existing PV. The workload consumes it through the claim.
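A hedged sketch of that flow, assuming an existing NFS export. The server address, export path, sizes, and object names are all hypothetical. Setting `storageClassName: ""` on the claim keeps it out of dynamic provisioning, and `volumeName` pins it to the pre-created PV.

```yaml
# Created by an admin, pointing at storage that already exists.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: archive-nfs                      # hypothetical PV name
spec:
  capacity:
    storage: 500Gi
  accessModes:
    - ReadWriteMany
  persistentVolumeReclaimPolicy: Retain  # data survives deletion of the claim
  storageClassName: ""                   # no class: not managed by any provisioner
  nfs:
    server: nfs.example.internal         # hypothetical NFS server
    path: /exports/archive               # hypothetical export path
---
# Created by the workload owner; binds to the PV above.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: archive                          # hypothetical claim name
spec:
  storageClassName: ""                   # empty string disables dynamic provisioning for this claim
  volumeName: archive-nfs                # bind to this specific PV
  accessModes:
    - ReadWriteMany
  resources:
    requests:
      storage: 500Gi
```

The workload still mounts the claim `archive`, exactly as it would in the dynamic model.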

Use static provisioning when:

- Pre-existing NFS, SAN, or NAS assets need to be consumed by Kubernetes workloads without reprovisioning

- Compliance requirements mandate that specific, audited storage volumes are used for specific workloads

- Data must outlive the cluster — DR scenarios, long-term retention, cross-cluster data sharing

- The storage backend does not support dynamic provisioning or a CSI driver

Static provisioning is not a legacy pattern. It is the correct answer when storage governance lives outside Kubernetes. Backup architecture is a clear example — recovery volumes are often pre-provisioned against specific storage tiers and must survive cluster deletion. Dynamic provisioning is the wrong model for that requirement.

The failure mode in static provisioning is different: teams pre-provision every volume regardless of whether the workload actually requires it, recreating the ticket-queue bottlenecks of pre-cloud infrastructure inside Kubernetes.

Where Teams Get It Wrong

The Default StorageClass Trap

A PVC is created without an explicit StorageClass reference. It binds to the cluster default. Nobody reviews what the default’s reclaim policy is set to. The workload is decommissioned during a routine cleanup. The PVC is deleted. The reclaim policy is Delete. The PV is removed. The data is gone.

No error. No warning. The provisioning worked exactly as configured. The deletion semantics were never reviewed because the default was never examined.
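One way to take the guesswork out of the default, sketched under the assumption that you control the cluster's StorageClasses. The class name and provisioner are hypothetical. The annotation is the standard marker for the default class, and the reclaim policy is written out rather than left implicit.

```yaml
# To review what is currently configured, `kubectl get storageclass`
# lists each class with its RECLAIMPOLICY and marks the default one.
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: cluster-default                  # hypothetical class name
  annotations:
    storageclass.kubernetes.io/is-default-class: "true"
provisioner: csi.example.com             # hypothetical; use your CSI driver's provisioner name
reclaimPolicy: Retain                    # explicit choice, not an unreviewed default
---
# A claim that names its class instead of relying on whatever the default is.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: ledger-data                      # hypothetical claim name
spec:
  storageClassName: cluster-default
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 10Gi
```

Whether the default should be Retain or Delete is a judgment call for each cluster. The sketch only shows the choice being made deliberately.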

The Silent Delete

This is the most damaging failure mode in Kubernetes storage and the one most teams discover too late. Reclaim policy governs what happens to a PV when its PVC is deleted: Retain keeps the PV and data intact; Delete removes both. Delete is what a StorageClass applies when no reclaimPolicy is specified, so most dynamically provisioned PVs inherit it.

Most storage failures in Kubernetes are not provisioning failures. They are reclaim-policy failures discovered after data loss. Provisioning usually works. Deletion semantics are what hurt people.
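For a volume that already exists, the policy lives on the PV itself, copied from the class when the volume was provisioned. A minimal sketch of switching one PV to Retain follows. The field name is the real PV spec field; the PV name in the comment is a placeholder you would replace.

```yaml
# Patch body for an existing PersistentVolume. Applied with, for example:
#   kubectl patch pv <your-pv-name> -p '{"spec":{"persistentVolumeReclaimPolicy":"Retain"}}'
# The YAML below is the same patch written out for readability.
spec:
  persistentVolumeReclaimPolicy: Retain
```

After this change, deleting the PVC leaves the PV in the Released phase with its data intact, and cleanup becomes an explicit, manual step.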

Static PV Everywhere Syndrome

Teams pre-provision every volume “for safety” because dynamic provisioning feels risky or unfamiliar. The result is a manual volume management queue inside Kubernetes — the same operational bottleneck that cloud-native storage was designed to eliminate. Storage becomes the deployment bottleneck. The operational overhead is self-inflicted.

Policy Is Not Persistence

Teams tune StorageClass parameters — IOPS, replication factor, encryption settings — and assume they have solved data durability. They have only changed how the disk gets created. How long the data survives is governed by the reclaim policy, the backup strategy, and the data hardening model — none of which live in the StorageClass definition.

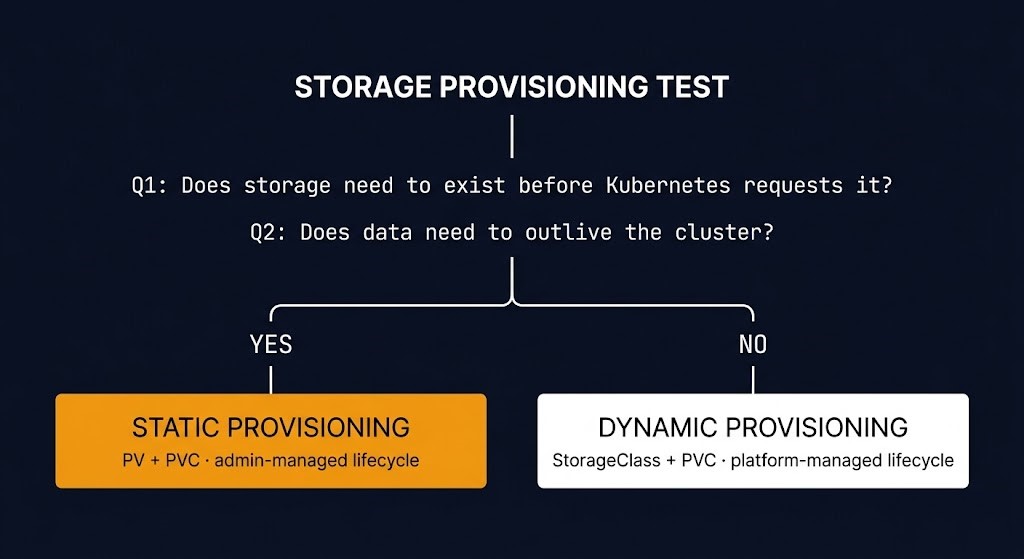

The Storage Provisioning Test

The PersistentVolume vs StorageClass decision resolves into one of two provisioning models. Two questions determine the correct one:

- Does the storage need to exist before Kubernetes requests it?

- Does the data need to outlive the cluster that mounts it?

If yes to either — start static. Pre-provision the PV. Bind via PVC. Manage the lifecycle explicitly.

If no to both — start dynamic. Define a StorageClass with explicit reclaim policy. Let PVCs drive provisioning. Review deletion semantics before workloads go to production.

The provisioning model is the decision. PV, StorageClass, and PVC are the objects that implement it. Kubernetes orchestration architecture applies the same principle at the cluster layer — the decision about what governs the resource is separate from the resource itself.

| Provisioning Model | Objects Used | Best For |
|---|---|---|
| Dynamic | StorageClass + PVC | Kubernetes-native workloads, StatefulSets, short-lived dev and staging storage |
| Static | PV + PVC | Pre-existing storage, compliance-bound volumes, DR, cross-cluster data |

Architect’s Verdict

The PersistentVolume vs StorageClass confusion persists because the objects look like alternatives when they are actually layers. StorageClass is not a replacement for PV. It is the policy that produces PVs. PVC is not optional overhead. It is the only object the workload actually touches.

The provisioning decision — static or dynamic — determines which objects you manage directly and which ones the platform manages for you. Getting that decision wrong does not usually break provisioning. It breaks deletion. The Silent Delete is not a rare edge case. It is a default behavior that most teams only review after it has already happened.

StorageClass decides how storage appears. PersistentVolume decides what exists. PersistentVolumeClaim decides what the workload can trust.
