Google Just Moved the Control Plane Boundary

The control plane boundary just moved. Most platform architectures were not built for that assumption — and most teams have not noticed yet.

For a decade, the Kubernetes scaling playbook had one move: add another cluster.

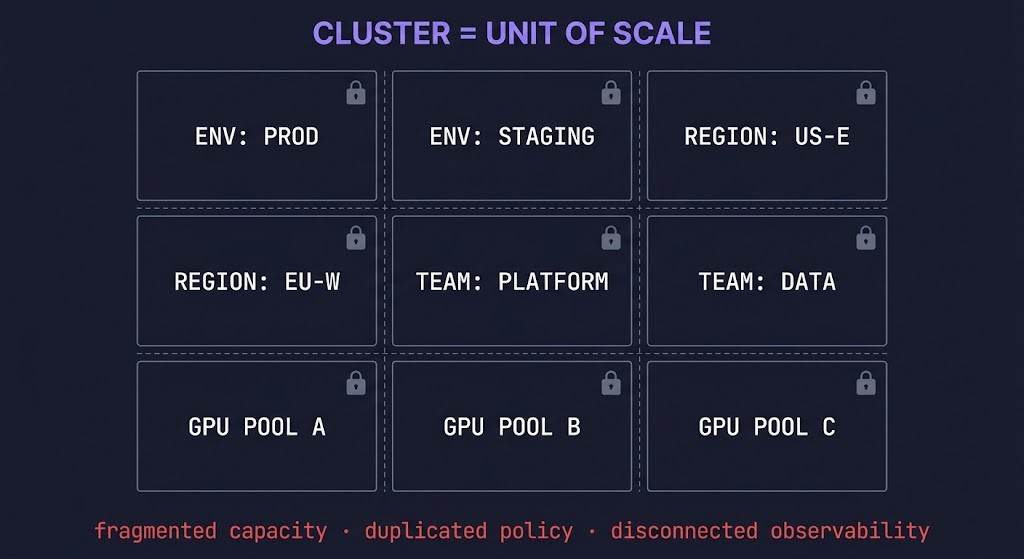

Need more capacity? Add a cluster. Need workload isolation? Add a cluster. Need regional separation? Add a cluster. Need a dedicated GPU pool? Add a cluster. The cluster became the unit of scale because the control plane could not scale far enough to avoid making it one.

At Google Cloud Next ’26, Google made the opposite bet. A single Kubernetes-conformant control plane spanning 256,000 nodes across multiple regions, managing a million accelerators as a unified capacity reserve. Not bigger Kubernetes. A different architectural claim entirely.

The claim is this: the control plane is now the unit of scale. The cluster is not.

Most platform architectures were not built around that assumption. They are still operating the old boundary — and that mismatch is what this post is actually about.

The Old Scaling Model Was Cluster Multiplication

The cluster-as-boundary model made sense when it emerged. Kubernetes control planes had real scale limits. Policy enforcement was cluster-scoped. Observability was cluster-local. Capacity pools were physically tied to the node groups a given control plane could manage. The ceiling was low enough that the only practical answer to growth was horizontal multiplication.

So teams multiplied. A cluster per environment. A cluster per region. A cluster per team. A cluster per workload class. A cluster per GPU type. The operational pattern became: when you hit a boundary, add another cluster.

That solved the immediate problem. It also created a different class of problem that compounded silently over time:

Capacity became fragmented. Each cluster held its own pool. Idle capacity in one cluster could not be claimed by a workload running out of headroom in another. The total resource picture was invisible to any single scheduling domain.

Policy became duplicated. Every cluster needed its own RBAC configuration, network policy, admission control, and security posture. Changes had to be applied across every cluster in the fleet. Drift was structural.

Observability became disconnected. Metrics, logs, and traces were cluster-local by default. Understanding system-wide state required stitching together signals from dozens of independent sources. The control plane shift was already underway before the tooling caught up.

Operational overhead compounded. Each cluster was a discrete operational object requiring lifecycle management, upgrade coordination, certificate rotation, and failure response. Fleets of dozens of clusters became fleets of dozens of independent operational burdens.

The industry normalized cluster multiplication because the alternative — scaling the control plane itself — was not a credible option. Until now it wasn’t.

Google Just Moved the Boundary

GKE Hypercluster is not a capacity announcement. It is an architectural boundary announcement.

A single, Kubernetes-conformant control plane managing 256,000 nodes across multiple Google Cloud regions, treating distributed infrastructure as a unified capacity reserve — that is a claim about where the boundary should sit. Not at the cluster. At the control plane.

The Control Plane Boundary is the logical boundary at which scheduling authority, policy enforcement, and capacity governance are unified. For a decade, that boundary was the cluster by necessity. Hypercluster is Google’s signal that it does not have to be.

When the control plane boundary moves outward — from cluster-scope to fleet-scope — several things change simultaneously:

Capacity planning becomes global. Idle compute anywhere in the fleet is visible and schedulable from a single domain. The fragmentation tax disappears.

Policy becomes a control plane concern, not a cluster concern. Enforcement happens once, at the boundary, rather than being replicated and synchronized across every cluster in the fleet.

Scheduling becomes capacity orchestration. Node placement within a cluster is a solved problem. Capacity allocation across a unified, multi-region pool governed by a single control plane is a different problem — one that maps directly to what GPU scheduling at scale actually requires.

Failure domains get redefined. When the control plane boundary expands, the assumptions that tied failure isolation to cluster boundaries need re-examination. This is not a reason to avoid the shift. It is a reason to design for it deliberately.

This is not a GKE-specific development to watch. It is a signal about where the architectural center of gravity is moving. The data gravity model has always described compute moving toward data. The control plane boundary shift describes governance moving toward compute — at fleet scale.

Most Teams Still Operate the Old Boundary

Here is where the post earns its teeth.

The Hypercluster announcement describes a capability most teams will not adopt immediately. But it encodes an architectural assumption that is already aging out regardless of what platform you run.

Most platform architectures today are still built around four cluster-scoped assumptions:

Cluster as operational boundary. Upgrades, certificates, API server health, node pool management — all scoped to the cluster. Operational runbooks are written cluster-by-cluster. This assumption made sense when each cluster was the largest coherent unit. It becomes overhead when the control plane boundary moves outward.

Cluster as policy boundary. RBAC, network policy, admission webhooks, security contexts — all applied at cluster scope. The practical consequence is policy duplication across every cluster in the fleet and the drift that follows. Kubernetes ingress and Gateway API migration is one visible symptom of this: policy boundaries that were cluster-scoped being renegotiated as the platform matures.

Cluster as capacity boundary. Cluster autoscaler, node pools, resource quotas — all defined within a cluster. Cross-cluster capacity awareness requires external tooling, federation layers, or manual coordination. This is the fragmentation problem that Hypercluster directly addresses.

Cluster as failure boundary. Blast radius assumptions, availability zone mapping, and incident scoping are all built around the cluster as the natural unit of failure. Platform resilience designed around cluster-local failure domains needs reexamination when the scheduling domain spans regions.

These assumptions were correct architectural choices when the control plane could not scale past them. They become architectural debt when the control plane boundary moves.

Most teams are designing as if the cluster is still the boundary. The industry is signaling it is not.

What Breaks When the Boundary Moves

The cluster-boundary assumptions do not just become inefficient when the control plane boundary shifts. Some of them break in ways that matter operationally.

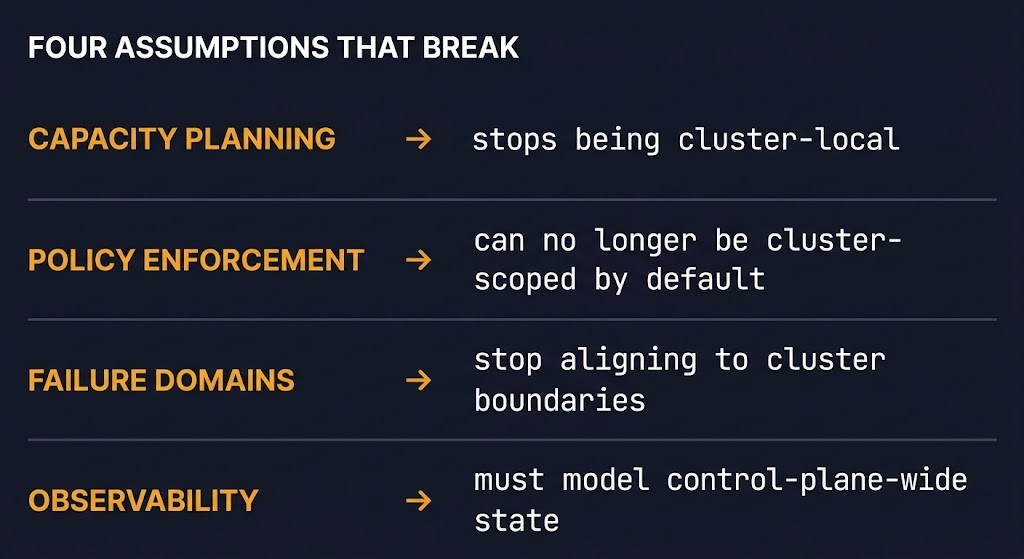

Capacity planning stops being cluster-local. The mental model of “how much headroom does this cluster have” becomes the wrong question. The right question becomes “what is the available capacity in this scheduling domain” — which may span regions and node types. Teams still doing capacity planning at cluster scope will misread their actual position. GPU idle is already a capacity forecasting failure in cluster-local models. It compounds in fleet-scale models without the right abstraction.

Policy can no longer be cluster-scoped by default. When the control plane boundary expands, policy that was cluster-scoped either gets promoted to control-plane scope or it creates inconsistency across the unified scheduling domain. The teams that treated policy duplication as a solved operational problem will find it is now a design problem.

Failure domains stop aligning cleanly to cluster boundaries. The assumption that a cluster failure is a bounded, isolated event was already weakening with multi-cluster federation. At control-plane-boundary scale, failure domain design becomes an explicit architectural decision rather than a cluster-topology default. Blast radius engineering at this layer is not a concept most Kubernetes operational runbooks have addressed.

Observability has to model control-plane-wide state. Cluster-local metrics describe local state. Control-plane-boundary-wide scheduling decisions require fleet-wide state visibility. The observability gap between what dashboards show and what the system is actually doing is well-documented at cluster scale. It does not shrink when the scheduling domain expands without deliberate instrumentation design.

Scheduling becomes capacity orchestration, not node placement. Kubernetes scheduling at cluster scope is a bin-packing problem. Scheduling at control-plane-boundary scope — across regions, node types, and accelerator pools — is a capacity allocation problem. The two require different mental models, different tooling, and different operational disciplines.

This is where Kubernetes operations becomes distributed control plane design. That is the actual shift — not the chip count.

The Million-Chip Problem Is Not About Chips

The headline number from Hypercluster is a million chips. That is the wrong thing to pay attention to.

The signal is not about AI scale. It is about architectural scale. Google is not telling you that you need to manage a million chips. Google is telling you that the next infrastructure bottleneck is not compute — it is the control plane that governs compute.

For the last decade, the Kubernetes scaling problem was answered by multiplying the unit that the control plane could manage. Add clusters. Keep the control plane boundary narrow. Solve capacity and isolation problems at the cluster layer.

That model is being retired — not because clusters are wrong, but because the control plane boundary has moved. The constraint that made cluster multiplication necessary no longer exists at the same ceiling. And architectures built around the old constraint do not automatically inherit the new capability.

The teams still scaling by multiplying clusters are solving yesterday’s bottleneck. The exit cost model applies here directly: the cost of a cluster-multiplication architecture is not just operational overhead. It is the structural cost of a boundary assumption that the industry is moving past. Every cluster added under the old model is a migration conversation waiting to happen under the new one.

The control plane boundary is not a GKE feature. It is the next architectural forcing function in distributed infrastructure. Google made the bet publicly at Next ’26. The architectural question for everyone else is not whether to adopt Hypercluster. It is whether your platform design is built around a boundary assumption that is already changing.

Architect’s Verdict

Kubernetes cluster multiplication was not a mistake. It was the correct architectural response to a real constraint: the control plane could not scale far enough to make it unnecessary.

That constraint has now been challenged directly. A single Kubernetes-conformant control plane governing 256,000 nodes across multiple regions is not an incremental improvement. It is a claim that the Control Plane Boundary — the logical boundary at which scheduling authority, policy enforcement, and capacity governance are unified — belongs at fleet scope, not cluster scope.

Most platform architectures are still designed around the cluster as that boundary. The four assumptions — cluster as operational boundary, policy boundary, capacity boundary, and failure boundary — were correct when the ceiling was low. They become architectural debt when the ceiling moves.

The million-chip number is not the story. The story is what it signals about where the bottleneck is moving. For a decade, teams added clusters to avoid hitting the control plane ceiling. The ceiling just moved. The question is whether your architecture was designed for the constraint, or for the problem the constraint was preventing you from solving.

The Control Plane Boundary has shifted. Most architectures have not.

Additional Resources

Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.

Get the Playbooks Vendors Won’t Publish

Field-tested blueprints for migration, HCI, sovereign infrastructure, and AI architecture. Real failure-mode analysis. No marketing filler. Delivered weekly.

Select your infrastructure paths. Receive field-tested blueprints direct to your inbox.

- > Virtualization & Migration Physics

- > Cloud Strategy & Egress Math

- > Data Protection & RTO Reality

- > AI Infrastructure & GPU Fabric

Zero spam. Includes The Dispatch weekly drop.

Need Architectural Guidance?

Unbiased infrastructure audit for your migration, cloud strategy, or HCI transition.

>_ Request Triage Session