Beyond the VMDK: Translating Execution Physics from ESXi to AHV

“Lift-and-shift” is a business term, not an engineering one. When we migrate a workload from vSphere to Nutanix AHV, we are not simply moving binary files (VMDKs) between datastores; we are re-homing an execution thread into a fundamentally different kernel environment.

If you treat AHV as “vSphere with a different UI,” you risk encountering “Migration Stutter”: a performance degradation in which the VM’s resource demand is met, but its interaction with the hypervisor introduces unexpected latency. To avoid this, we must shift our focus from administrative configuration to Execution Physics.

The Philosophical Anchor: Determinism vs. Density

Before diving into kernel mechanics, we must acknowledge the fundamental shift in incentives between these two platforms:

- ESXi rewarded density via abstraction. Its heuristics are designed to cram as many workloads onto a host as safely possible, masking minor contentions through aggressive ballooning and scheduler juggling.

- AHV rewards determinism via architectural alignment. It prefers direct hardware coupling, predictability, and localized execution.

Migration failures almost always stem from confusing these incentives—attempting to force legacy density habits onto a platform that demands deterministic alignment. If you fail to translate this execution physics, you fall directly into the “lift-and-shift” lie, bringing legacy bottlenecks into a modern stack.

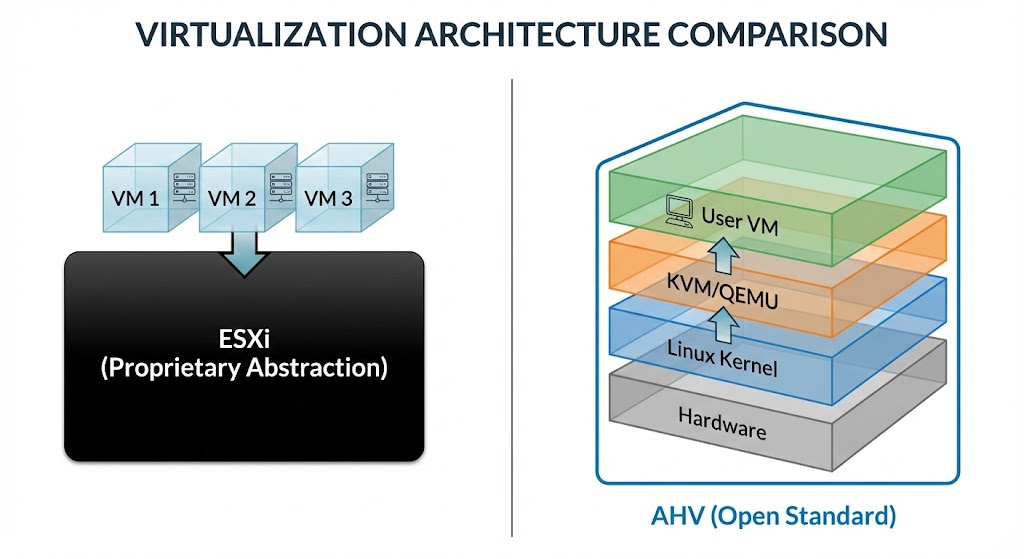

Architectural Divergence

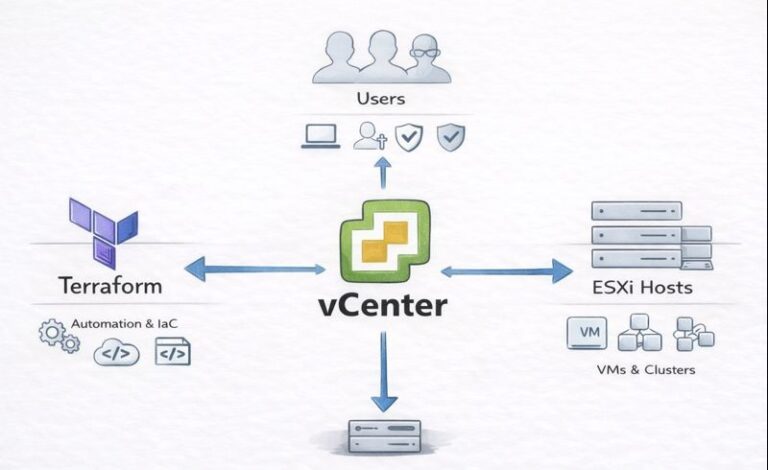

vSphere utilizes a proprietary, monolithic abstraction layer. Rather than concealing hardware entirely, it utilizes aggressive abstraction with automated topology management. It models the physical hardware and handles placement so smoothly that administrators rarely need to intervene.

AHV is built on KVM (Kernel-based Virtual Machine), the virtualization layer in the Linux kernel. It is a transparent, open-standard hypervisor. This fundamental difference means your workload is no longer running inside a “black box” hypervisor, but rather as a KVM-managed QEMU process governed by the Linux CFS (Completely Fair Scheduler). This provides higher transparency for the OS, but it removes the “magic” of ESXi’s aggressive resource management, requiring you to architect for performance rather than relying on hypervisor-level heuristics.

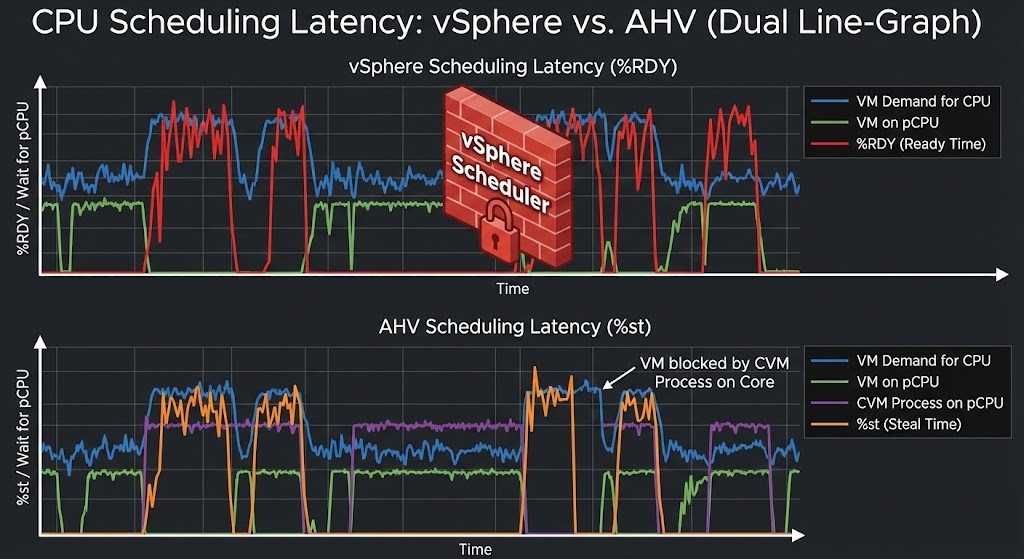

CPU Scheduling Translation: Ready vs. Steal

In the ESXi world, we monitor %RDY (Ready Time) to detect contention. In AHV, the metric shifts to %st (Steal Time).

- Ready Time (%RDY): The percentage of time a vCPU was ready to run but was waiting for the hypervisor scheduler to place it on a physical CPU.

- Steal Time (%st): The percentage of time, as reported inside the guest, that the virtual CPU waited for the physical CPU while the hypervisor was busy servicing other tasks (including the host kernel and the CVM).

Crucial Distinction: In AHV, sustained 0% Steal Time under load is unrealistic in a healthy, active cluster. The CVM (Controller VM) is a resident tenant, and it consumes CPU cycles to manage I/O. The goal is not to eliminate Steal Time, but to ensure it remains deterministic.
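To make the steal metric concrete, here is a minimal Python sketch of how a guest-side value could be derived from two samples of the aggregate `cpu` line in `/proc/stat`. It assumes a Linux guest whose kernel reports the steal column (the eighth counter). The function names and sample values are illustrative and are not part of any Nutanix tooling.

```python
from __future__ import annotations

# Two illustrative samples of the aggregate "cpu" line from /proc/stat.
# Columns: user nice system idle iowait irq softirq steal guest guest_nice
SAMPLE_BEFORE = "cpu  1000 0 500 8000 100 0 50 100 0 0\n"
SAMPLE_AFTER = "cpu  1400 0 700 8700 100 0 60 140 0 0\n"


def parse_cpu_line(stat_text: str) -> list[int]:
    """Return the counters from the aggregate 'cpu' line of /proc/stat."""
    for line in stat_text.splitlines():
        fields = line.split()
        if fields and fields[0] == "cpu":
            return [int(value) for value in fields[1:]]
    raise ValueError("no aggregate 'cpu' line found")


def steal_percent(before: str, after: str) -> float:
    """Percentage of elapsed CPU time reported as steal between two samples."""
    a = parse_cpu_line(before)
    b = parse_cpu_line(after)
    # Guest time is already counted inside user/nice, so only the first
    # eight counters are summed to avoid double counting.
    total = sum(b[:8]) - sum(a[:8])
    if total <= 0:
        return 0.0
    return 100.0 * (b[7] - a[7]) / total


print(f"steal: {steal_percent(SAMPLE_BEFORE, SAMPLE_AFTER):.2f}%")
```

On a live guest, the two strings would come from reading `/proc/stat` a few seconds apart. Trending the result over time says more about determinism than any single reading.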

Power & Frequency Governors:

Unlike vSphere, where power management is largely handled by the hypervisor and BIOS integration, AHV respects the Linux kernel’s frequency scaling. For deterministic performance, ensure the host CPU frequency governor (as reported by cpupower) is set to performance, not powersave or ondemand. Leaving the default in place can introduce “latency jitter” as cores move between P-states and C-states to save power.
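A quick audit of the governor setting can be sketched in Python by reading the standard Linux cpufreq sysfs files. This assumes the host exposes `scaling_governor` under `/sys/devices/system/cpu`, which depends on the cpufreq driver in use. The helper names are hypothetical, and any change on a production host should go through the vendor's supported procedure rather than this script.

```python
from __future__ import annotations

from pathlib import Path


def read_governors(sysfs_cpu_root: str = "/sys/devices/system/cpu") -> dict[str, str]:
    """Map each CPU directory name (e.g. 'cpu0') to its current governor."""
    governors: dict[str, str] = {}
    pattern = "cpu[0-9]*/cpufreq/scaling_governor"
    for path in sorted(Path(sysfs_cpu_root).glob(pattern)):
        cpu_name = path.parent.parent.name
        governors[cpu_name] = path.read_text().strip()
    return governors


def non_performance(governors: dict[str, str]) -> dict[str, str]:
    """Return only the CPUs whose governor is not 'performance'."""
    return {cpu: gov for cpu, gov in governors.items() if gov != "performance"}


# Illustrative input; on a real host this would be read_governors().
example = {"cpu0": "performance", "cpu1": "powersave", "cpu2": "ondemand"}
print(non_performance(example))
```

An empty result from `non_performance(read_governors())` would indicate that every core reporting a governor is already set to performance.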

SMT Translation: Logical ≠ Physical

In AHV, a 2x oversubscription on SMT-enabled hosts does not behave the same as it did under ESXi. vSphere has mature SMT fairness heuristics. The Linux CFS scheduler underneath KVM, however, treats logical threads differently under pressure. Wide VMs pinned across sibling threads can induce cache thrash and scheduler jitter much faster than they would in an ESXi environment.

Wide VM Risk Envelope:

A 16-vCPU VM that performed seamlessly on a dual-socket ESXi host may degrade on AHV if its execution threads are fragmented across NUMA boundaries and SMT siblings without locality enforcement. In KVM, CFS scheduling can introduce severe latency when wide VMs span these boundaries, manifesting as scheduler wait times rather than traditional VMware “Co-Stop.”
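One way to reason about sibling collisions is to compare the set of logical CPUs a VM's threads run on against the host's SMT sibling map. The Python sketch below assumes the map comes from the standard Linux `thread_siblings_list` topology files (values such as `0,16` or `0-1`). The CPU numbers and function names are made up for illustration.

```python
from __future__ import annotations


def parse_cpu_list(text: str) -> set[int]:
    """Parse a Linux CPU list such as '0,16' or '0-3,8' into a set of ints."""
    cpus: set[int] = set()
    for part in text.strip().split(","):
        if not part:
            continue
        if "-" in part:
            low, high = part.split("-")
            cpus.update(range(int(low), int(high) + 1))
        else:
            cpus.add(int(part))
    return cpus


def shared_cores(pinned: set[int], siblings: dict[int, str]) -> list[set[int]]:
    """Return groups of pinned logical CPUs that sit on the same physical core."""
    collisions: list[set[int]] = []
    for cpu in sorted(pinned):
        overlap = parse_cpu_list(siblings[cpu]) & pinned
        if len(overlap) > 1 and overlap not in collisions:
            collisions.append(overlap)
    return collisions


# Hypothetical sibling map: logical CPUs 0/16 and 1/17 share a physical core.
SIBLINGS = {0: "0,16", 1: "1,17", 16: "0,16", 17: "1,17"}
print(shared_cores({0, 1, 16}, SIBLINGS))
```

An empty list means each logical CPU in the set has a physical core to itself; any returned group marks two threads competing for one core's execution resources and cache.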

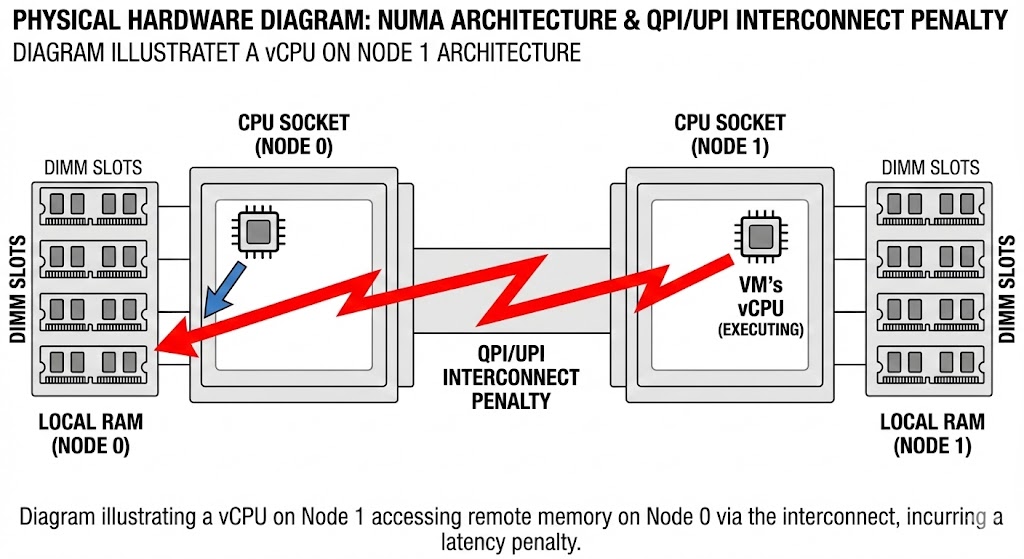

NUMA & Locality Gap

VMware’s DRS and memory management often mask NUMA boundary crossings. In KVM/AHV, these crossings are immediate performance risks.

- vTopology Alignment: If your VM is configured with 8 vCPUs, but your physical socket layout is 16 cores, you must ensure the VM’s virtual topology aligns strictly with the physical NUMA node.

- The Cross-Socket Penalty: If a VM’s memory is assigned to Node 0 but its vCPU is scheduled on Node 1, every memory access must traverse the interconnect (QPI/UPI). In a SAN-backed environment, this latency was often buried. In HCI, it becomes the primary bottleneck for memory-intensive workloads. Mapping these NUMA boundaries pre-migration is a mandatory step in any risk-deterministic framework for legacy workloads.
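The alignment question above reduces to simple arithmetic that can be run against an inventory before migration. The following Python sketch assumes uniform NUMA nodes and uses raw node capacity; it does not subtract whatever the CVM or host reserves on a node, so treat its answers as optimistic. The class and function names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class NumaNode:
    cores: int        # physical cores in one NUMA node
    memory_gib: int   # memory attached to one NUMA node


def fits_single_node(vcpus: int, memory_gib: int, node: NumaNode) -> bool:
    """True when both vCPUs and memory fit inside one NUMA node."""
    return vcpus <= node.cores and memory_gib <= node.memory_gib


def min_nodes_spanned(vcpus: int, memory_gib: int, node: NumaNode) -> int:
    """Smallest number of nodes the VM must span, by CPU or by memory."""
    by_cpu = -(-vcpus // node.cores)            # ceiling division
    by_memory = -(-memory_gib // node.memory_gib)
    return max(by_cpu, by_memory)


# Illustrative host: 16 cores and 256 GiB per NUMA node.
node = NumaNode(cores=16, memory_gib=256)
for vcpus, mem in [(8, 64), (24, 128), (8, 384)]:
    print(vcpus, mem, fits_single_node(vcpus, mem, node), min_nodes_spanned(vcpus, mem, node))
```

Note that a VM can cross a NUMA boundary on memory alone: the last case has only 8 vCPUs but still spans two nodes.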

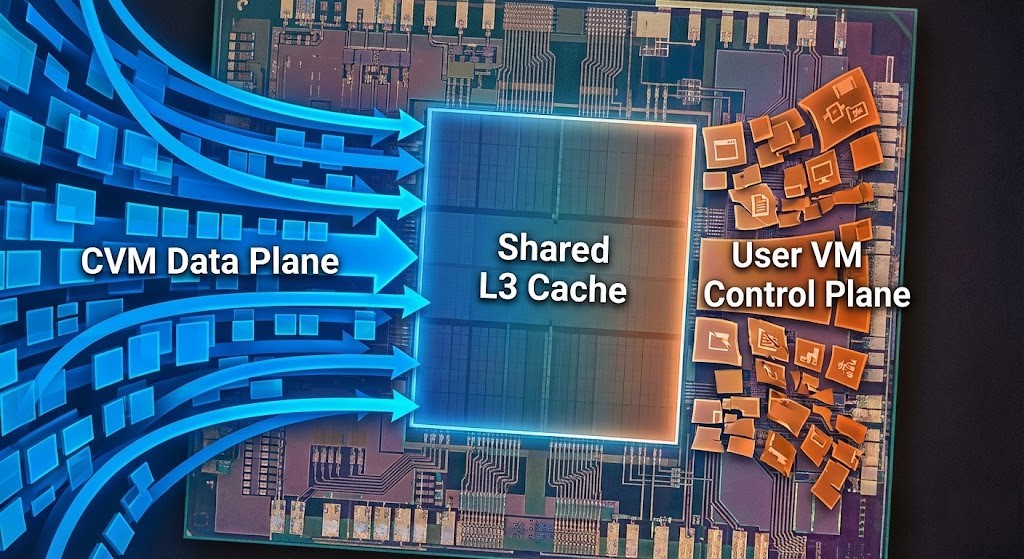

The Shared Silicon Problem: Control vs. Data Plane

In a legacy ESXi/SAN setup, the data plane (storage) lived in the array. The ESXi host was purely an abstraction layer for compute and memory. Scheduler contention and storage contention were orthogonal problems.

In AHV, they are coupled. The Data Plane (CVM) and the Control Plane (Your VM) share the same physical silicon.

The Controller Tax:

The CVM is the active storage controller living on the same CPU socket as your application. We must conceptually define the “Controller Tax” for any cluster sizing:

Effective Host CPU = Total Host CPU − CVM Baseline − CVM IO Spike Envelope

Example: 32-core host − 4 (CVM baseline) − 6 (IO spike) = 22 effective cores

If you starve the CVM of this required CPU headroom, you aren’t just slowing down the storage; you are inducing arbitration latency directly into the application layer via L3 cache eviction.

Rebuild Amplification (The Failure Domain):

In ESXi + SAN, rebuild events occur on the array, with negligible impact on host CPU. In AHV, rebuild traffic consumes host CPU and network natively. During disk or node rebuild events, CVM CPU and network demand increases dramatically. A cluster operating comfortably at 75% CPU before a failure may enter severe arbitration latency during a rebuild if the CVM IO Spike Envelope was not mathematically protected.
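The sizing arithmetic can be sketched in a few lines of Python. The figures reuse the 32-core example (4-core CVM baseline, 6-core IO spike). One assumption is added here: the pre-failure utilization figure is taken to already include the CVM baseline, so a rebuild adds only the spike envelope on top. If your monitoring reports guest-only utilization, the formula needs adjusting.

```python
def effective_cores(total_cores: float, cvm_baseline: float, cvm_io_spike: float) -> float:
    """Cores left for guests after the full Controller Tax is set aside."""
    return total_cores - cvm_baseline - cvm_io_spike


def rebuild_headroom(total_cores: float, utilization: float, cvm_io_spike: float) -> float:
    """Cores still free once the rebuild spike lands on the pre-failure load.

    Assumes `utilization` (0..1) is host-wide and already includes the CVM
    baseline. A negative result means demand exceeds the host.
    """
    return total_cores - total_cores * utilization - cvm_io_spike


def max_safe_utilization(total_cores: float, cvm_io_spike: float) -> float:
    """Highest steady-state utilization that still leaves the spike envelope free."""
    return (total_cores - cvm_io_spike) / total_cores


print(effective_cores(32, 4, 6))          # 22 effective cores
print(rebuild_headroom(32, 0.75, 6))      # 2.0 cores to spare
print(rebuild_headroom(32, 0.875, 6))     # -2.0, the host is oversubscribed
print(max_safe_utilization(32, 6))        # 0.8125
```

Under these assumptions a host at 75% survives a 6-core spike with only two cores to spare, and a larger spike envelope or a slightly busier host tips it into contention.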

Memory Pressure Semantics

ESXi uses proactive ballooning and transparent page sharing to reclaim memory. AHV uses a more direct, Linux-native approach.

- Reservation Physics: A RAM reservation in AHV biases locality and prevents reclamation, but NUMA alignment still depends on the initial placement policy.

- Strategy: For high-performance VMs, stop relying on hypervisor-level ballooning. Size your reservations to cover the workload’s working set, because AHV will not proactively reclaim memory as aggressively as ESXi when it hits the limit. It will simply slow down the thread (or trigger OOM).

Storage Path & Interrupt Handling

In AHV, storage is accessed via VirtIO, and all I/O flows through the local CVM.

- Controller Arbitration: VM-level tuning (like adjusting queue depth) cannot bypass controller arbitration at the CVM layer. Misunderstanding this arbitration is a primary reason migrations fail during the “Day 2” reality of migrating VMware to Nutanix.

- VirtIO vs. VMXNET3/PSA: In vSphere, ESXi uses Pluggable Storage Architecture (PSA) to manage multipathing to external arrays. In AHV, the CVM abstracts the physical disks and manages data distribution internally. VirtIO introduces entirely different interrupt behavior than VMware’s stack.

- IRQ Balancing & SoftIRQ Pressure: Pay close attention to irqbalance behavior. For high packet-rate workloads or storage-heavy databases, misaligned interrupt affinity can spike SoftIRQ pressure. Ensure your storage threads are aligned with the physical CPU cores processing the CVM requests.
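SoftIRQ skew is visible in `/proc/softirqs` on any Linux guest or host. The Python sketch below parses that file's per-CPU table and reports what share of a given softirq type lands on the busiest CPU. The sample text is fabricated for illustration, and the counters in the real file are cumulative since boot, so a meaningful reading would difference two samples first.

```python
from __future__ import annotations

# Fabricated sample in the layout of /proc/softirqs (four CPUs).
SAMPLE = """\
                    CPU0       CPU1       CPU2       CPU3
      NET_RX:        900         50         30         20
       BLOCK:        250        250        250        250
"""


def parse_softirqs(text: str) -> dict[str, list[int]]:
    """Map each softirq name to its list of per-CPU counters."""
    table: dict[str, list[int]] = {}
    for line in text.strip().splitlines()[1:]:   # skip the CPU header row
        name, _, counters = line.partition(":")
        table[name.strip()] = [int(value) for value in counters.split()]
    return table


def busiest_cpu_share(counts: list[int]) -> tuple[int, float]:
    """Return (cpu_index, fraction of all events handled by that CPU)."""
    total = sum(counts)
    if total == 0:
        return 0, 0.0
    cpu = max(range(len(counts)), key=counts.__getitem__)
    return cpu, counts[cpu] / total


table = parse_softirqs(SAMPLE)
for name in ("NET_RX", "BLOCK"):
    cpu, share = busiest_cpu_share(table[name])
    print(f"{name}: CPU{cpu} handles {share:.0%}")
```

In the sample, NET_RX is concentrated on one CPU while BLOCK is evenly spread; the former is the pattern worth investigating before blaming the storage layer.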

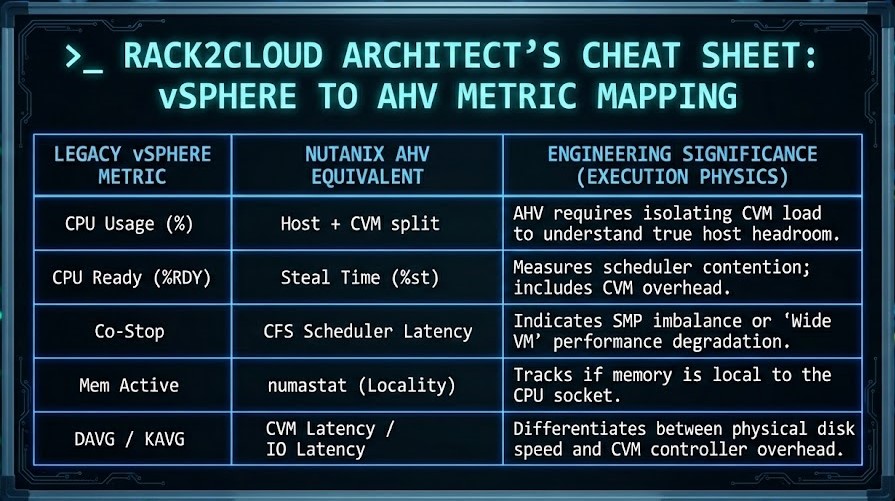

Observability Translation: ESXi to AHV Metric Mapping

If you are moving from vSphere, stop looking for vCenter metrics. Use this table as your transition guide for troubleshooting performance drift.

Deterministic Pre-Flight Framework

Before you migrate, run this “Go/No-Go” logic. You can use our VMware to HCI Migration Advisor to audit your current footprint, but from an engineering perspective, if you cannot satisfy these physical thresholds, the migration will suffer from performance drift.

- CPU Headroom Check: Is the host CPU utilization below 70% under P95 load, excluding the CVM IO spike envelope?

- Steal Time Baseline: Does the AHV host show a consistent Steal Time < 5% under normal load?

- NUMA Boundary: Is the VM’s vCPU count equal to or less than the physical core count of a single NUMA node? (If “No,” strictly enforce vTopology mapping).

- CVM CPU Arbitration: Is the CVM pinned to the correct cores for the IO-profile of the guest?

- IO Linearity Test: Does the storage throughput scale linearly when adding concurrent I/O threads without spiking SoftIRQ?
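The checklist above can be encoded as a small Go/No-Go evaluator. This Python sketch uses the 70% and 5% thresholds from the list; everything else is an assumption made for illustration, including the field names, the 0.8 scaling-efficiency cutoff used to approximate “linear,” and the fact that SoftIRQ behavior is not modeled at all.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class PreFlight:
    host_cpu_p95: float                      # 0..1, excluding the CVM IO spike envelope
    steal_pct: float                         # steal time under normal load
    vcpus: int
    cores_per_numa_node: int
    vtopology_enforced: bool                 # only matters if the VM spans nodes
    cvm_pinning_verified: bool
    throughput_by_threads: dict[int, float]  # I/O threads -> measured throughput


def scaling_efficiency(throughput_by_threads: dict[int, float]) -> float:
    """Worst ratio of measured throughput to ideal linear scaling."""
    points = sorted(throughput_by_threads.items())
    base_threads, base_tp = points[0]
    return min(tp / (base_tp * n / base_threads) for n, tp in points)


def evaluate(p: PreFlight, min_efficiency: float = 0.8) -> dict[str, bool]:
    """Return a pass/fail flag for each pre-flight check."""
    return {
        "cpu_headroom": p.host_cpu_p95 < 0.70,
        "steal_baseline": p.steal_pct < 5.0,
        "numa_boundary": p.vcpus <= p.cores_per_numa_node or p.vtopology_enforced,
        "cvm_arbitration": p.cvm_pinning_verified,
        "io_linearity": scaling_efficiency(p.throughput_by_threads) >= min_efficiency,
    }


candidate = PreFlight(
    host_cpu_p95=0.62, steal_pct=2.5, vcpus=8, cores_per_numa_node=16,
    vtopology_enforced=False, cvm_pinning_verified=True,
    throughput_by_threads={1: 100.0, 2: 195.0, 4: 380.0, 8: 700.0},
)
results = evaluate(candidate)
print(results, "GO" if all(results.values()) else "NO-GO")
```

Returning the individual flags rather than a single boolean keeps the failing check visible, which matters more than the verdict when a migration is deferred.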

You know the risk of carrying forward architectural debt. Now, we tear down the mechanics. Track the series below as we map the exact physical changes required for a deterministic migration.


Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.
