The Control Plane Shift: Every Infrastructure Decision Now Looks the Same

The control plane shift is the most important infrastructure concept of 2026 — and most teams are experiencing it three or four times simultaneously without recognizing it as the same decision each time.

Your VMware renewal lands on the desk. The number is larger than last year. You open a spreadsheet and start modeling Nutanix. That’s one decision.

Your platform team flags that Terraform is on the IBM/HashiCorp BSL and they want to evaluate OpenTofu. That’s another decision.

Your Kubernetes backup posture comes up in an audit. Someone asks whether Velero gives you real portability or just the appearance of it. That’s a third decision.

Your AI inference bill arrives and it’s 40% higher than the compute spend it replaced. Engineering wants to know where the budget went. That’s a fourth.

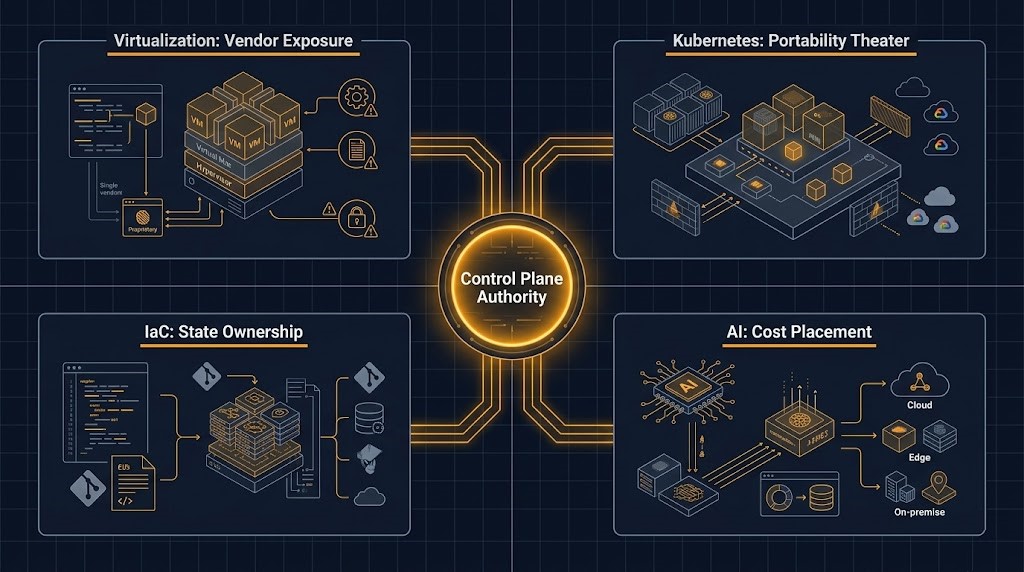

These feel like separate conversations. Different vendors, different teams, different budget lines, different timelines. But underneath each one, the structural question is identical: who controls your control plane, and what does it cost you when that control shifts?

That is the decision. Everything else is surface.

The Control Plane Series

Each post below maps one axis of the same structural decision. This post is the capstone — start here, then go deep on whichever axis is most urgent for your stack.

Axis 01 — Virtualization

Nutanix vs VMware: Post-Broadcom Decision Framework

Vendor exposure, migration physics, and conditional exit strategy

Axis 02 — IaC

Terraform vs OpenTofu: Cost, Control, and the Post-BSL Decision

State ownership, IBM acquisition risk, and the operational model trade-off

Axis 03 — Kubernetes

Velero CNCF: What Vendor-Neutral Governance Actually Changes

Governance vs. operational independence — and what CNCF actually fixed

Axis 04 — AI Infrastructure

AI Inference Infrastructure Split: What GTC 2026 Changed

Training vs. inference separation, cost placement, and hardware decisions

What the Control Plane Shift Actually Means

Control plane is an overloaded term. In networking it means routing decisions. In Kubernetes it means the API server and etcd. In a broader architectural sense — the sense that matters here — it means the system that determines what your infrastructure does, how it changes, and who has authority to make it change.

Every major infrastructure platform ships with a control plane embedded in the product. You don’t buy compute — you buy compute plus the control plane that manages it. You don’t buy a hypervisor — you buy a hypervisor plus the licensing and management stack that governs it. You don’t buy a backup tool — you buy backup behavior plus the governance model that determines who controls the recovery logic.

The shift happening across all four domains simultaneously is this: the cost and risk of that embedded control plane have become the dominant factor in the platform decision, ahead of features, performance, and ecosystem maturity.

This isn’t a new idea. What’s new is that it’s happening at once, across every layer of the stack, with compounding effects. An enterprise that accepted VMware’s control plane terms in 2022, HashiCorp’s in 2023, and a hyperscaler’s AI infrastructure terms in 2024 has now accepted three separate vendor control plane dependencies — and the renewal cycles on all three are approaching simultaneously.

That’s the architecture problem of 2026.

Axis 01 — The Virtualization Shift: From Architecture to Vendor Exposure

Before the Broadcom acquisition, a VMware evaluation was an architecture evaluation. vSphere vs. Nutanix AHV vs. Proxmox was a question about hypervisor performance, HCI maturity, management overhead, and ecosystem depth. Architects ran benchmarks. They evaluated vSAN replication factors. They modeled RTO/RPO against SRM runbooks.

Post-Broadcom, that conversation doesn’t start with architecture. It starts with the renewal number.

The unit of decision changed. VMware customers are no longer primarily optimizing their infrastructure architecture — they’re managing vendor exposure. The question isn’t which hypervisor is technically superior. It’s whether you accept Broadcom’s new contract model or design around it.

The four axes that govern this decision in practice:

| Axis | The Real Question |
|---|---|
| Cost Predictability | Can you model your VMware bill 3 years out? |
| Control Plane Ownership | Who dictates how your architecture evolves? |
| Migration Physics | What does your actual workload inventory look like? |
| Exit Cost (Future) | Are you trading one control plane lock-in for another? |

That last axis is the one most migration assessments skip. Nutanix’s control plane is Prism. It’s local, it survives network partitions, and it doesn’t phone home for licensing validation the way vCenter does. But it’s still a proprietary control plane. The question isn’t whether you’re eliminating vendor control — you’re not. The question is whether the new vendor’s control plane terms are structurally better than the one you’re leaving.

The conditional verdict: under 500 VMs with no deep NSX dependency and a renewal approaching, the architecture case for re-evaluation is strong. Deep NSX and SRM runbooks embedded in DR compliance mean a structured exit — not an immediate one. Mid-transformation with hybrid chaos means run a parallel evaluation before the next budget cycle forces the decision.
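The conditional verdict can be written down as a small decision function. This is only a sketch: the `Estate` fields, the verdict strings, and the order in which the conditions are checked are assumptions made for illustration, since the text does not say which condition wins when several apply.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Estate:
    """Hypothetical summary of a VMware estate; all field names are illustrative."""
    vm_count: int
    deep_nsx: bool               # NSX is load-bearing for networking or security
    srm_in_dr_compliance: bool   # SRM runbooks are part of audited DR procedures
    renewal_approaching: bool
    mid_transformation: bool     # hybrid estate with a migration already in flight


def conditional_verdict(estate: Estate) -> str:
    """Return the verdict from the text that matches the estate, if any."""
    # Assumption: compliance-bound dependencies take precedence over size.
    if estate.deep_nsx and estate.srm_in_dr_compliance:
        return "structured exit"
    if estate.mid_transformation:
        return "parallel evaluation before next budget cycle"
    if estate.vm_count < 500 and not estate.deep_nsx and estate.renewal_approaching:
        return "re-evaluate now"
    # The text gives no verdict for other combinations.
    return "no verdict stated; assess case by case"


small_shop = Estate(vm_count=300, deep_nsx=False, srm_in_dr_compliance=False,
                    renewal_approaching=True, mid_transformation=False)
print(conditional_verdict(small_shop))
```

Encoding the rules this way forces the precedence question into the open, which is usually where migration assessments disagree.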

The control plane insight: VMware’s shift wasn’t a pricing change. It was a change in who controls the terms of your infrastructure’s future. That’s a different kind of risk than a 30% price increase — and it’s the risk that most renewal models fail to quantify.

Full analysis: Nutanix vs VMware Post-Broadcom Decision Framework

Axis 02 — The IaC Shift: From Tooling to State Ownership

The HashiCorp BSL move in August 2023 looked like a licensing story. It was a control plane story.

Terraform’s state file is not metadata. It is not a cache. It is the authoritative mapping between every resource declaration in your HCL and its real-world identity in the provider. It is the control plane record that makes apply deterministic rather than destructive. When HashiCorp moved to the BSL — and when IBM acquired HashiCorp in 2025 — the question that mattered wasn’t whether the binary still worked. It was: who controls the evolution of the system that owns your infrastructure state?
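To make the "authoritative mapping" concrete, here is a minimal sketch that walks a state document and prints each resource address next to its real-world identity. The sample data is hand-written and heavily simplified, and the helper name `address_to_identity` is hypothetical. The raw state layout is an internal format rather than a stable interface, so treat the field names as an assumption about version 4 state and prefer the documented JSON output of `terraform show -json` for real tooling.

```python
import json

# Hand-written, simplified stand-in for a version 4 state file (assumption).
SAMPLE_STATE = {
    "version": 4,
    "serial": 12,
    "resources": [
        {
            "mode": "managed",
            "type": "aws_instance",
            "name": "web",
            "instances": [{"attributes": {"id": "i-0abc123"}}],
        },
        {
            "mode": "managed",
            "type": "aws_s3_bucket",
            "name": "logs",
            "instances": [{"index_key": "eu", "attributes": {"id": "logs-eu"}}],
        },
        {
            "mode": "data",  # data sources are read-only lookups, skipped below
            "type": "aws_region",
            "name": "current",
            "instances": [{"attributes": {"id": "eu-west-1"}}],
        },
    ],
}


def address_to_identity(state: dict) -> dict[str, str]:
    """Map each managed resource address to the provider-side ID it tracks."""
    mapping: dict[str, str] = {}
    for resource in state.get("resources", []):
        if resource.get("mode") != "managed":
            continue
        base = f'{resource["type"]}.{resource["name"]}'
        if "module" in resource:
            base = f'{resource["module"]}.{base}'
        for instance in resource.get("instances", []):
            key = instance.get("index_key")
            # count/for_each instances carry an index key in their address
            address = base if key is None else f"{base}[{json.dumps(key)}]"
            mapping[address] = instance.get("attributes", {}).get("id", "<unknown>")
    return mapping


for address, identity in address_to_identity(SAMPLE_STATE).items():
    print(f"{address} -> {identity}")
```

Lose or corrupt this mapping and the tool no longer knows that `aws_instance.web` already exists, which is why the next apply can become destructive.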

The IBM acquisition sharpened this. HashiCorp’s product leadership responses on Terraform’s roadmap have been, as one analyst noted, “vague enough to make risk committees nervous.” Meanwhile Terraform Cloud pricing has increased an average of 18% year-over-year. The enterprise teams that recognized this earliest aren’t the ones who switched to OpenTofu fastest — they’re the ones who asked the right question first: what are we actually migrating when we evaluate this?

The answer: you’re not migrating a binary. You’re migrating state ownership — and all the operational complexity that comes with it.

OpenTofu’s CNCF membership and Linux Foundation governance provide a structurally different control plane model. Multi-vendor Technical Steering Committee. MPL 2.0 license. Community-driven roadmap. The divergence from Terraform is now real at the feature level: native client-side state encryption and provider-defined functions arrived in v1.7, and provider iteration with `for_each` followed in v1.9. State encryption in particular is a capability Terraform users had requested for years. At Spacelift, 50% of all deployments now run on OpenTofu. The fork executed.

But OpenTofu’s control plane model shifts risk inward. There’s no SLA. When a state locking edge case surfaces during a production deployment window, the escalation path is a GitHub issue and a Slack channel. That’s not a reason to avoid OpenTofu — it’s a reason to evaluate whether your team has the internal operational ownership to carry it.

The honest frame: migrating to OpenTofu is replacing a vendor support contract with internal operational ownership. That trade is worth it for many teams. It is not cost-free for any of them.

The control plane insight: The IaC control plane lives in the state file. The licensing conversation is downstream of that. Teams that evaluate Terraform vs. OpenTofu as a tooling comparison will make a worse decision than teams that evaluate it as a state ownership and operational model question.

Full analysis: Terraform vs OpenTofu: Cost, Control, and the Post-BSL Decision

Axis 03 — The Kubernetes Shift: Portability Theater vs. Real Recovery Authority

Kubernetes solved the compute orchestration problem. It created a different one: the illusion of portability.

The promise of Kubernetes was workload portability — run anywhere, migrate freely, no vendor lock-in at the application layer. That promise is structurally true at the container spec level and operationally incomplete at every layer below it. Your workloads are portable. Your control plane dependencies are not.

The Velero CNCF move at KubeCon EU 2026 crystallized this. Broadcom contributed Velero — the Kubernetes-native backup, restore, and migration tool — to the CNCF Sandbox, transitioning it to vendor-neutral community governance. The announcement was framed as an open source story. It was a control plane story.

Vendor-neutral governance means no single vendor controls the roadmap. Vendor-independent operations means your recovery path survives without them. CNCF governance gives you the first. It does not give you the second.

Velero’s restore path still requires live access to external object storage. Your IAM credential chain still needs to survive the same incident your cluster didn’t. Your restore-time complexity is still proportional to the external dependencies your workloads carry. None of those operational dependencies changed on March 24th.

The deeper Kubernetes control plane problem runs further than backup tooling. The Gateway API replacing Ingress, the ongoing VKS vs. OpenShift vs. EKS platform decision, the service mesh architecture question — each of these is a control plane dependency layered on top of the Kubernetes API. Each one answers “who controls how traffic, identity, and state move through my cluster” differently.

The teams building the most resilient Kubernetes architectures in 2026 are not the ones with the most portable container specs. They’re the ones who have explicitly engineered what survives when the control plane fails — and for each dependency, they’ve answered the question deliberately rather than inherited the default.

The control plane insight: Kubernetes portability is real at the workload layer. Control plane survivability is an engineering problem that must be solved explicitly at the backup, networking, identity, and state layers. Governance changes (like Velero CNCF) reduce project-level vendor risk. They do not reduce operational dependency risk.

Full analysis: Velero CNCF Backup: What Vendor-Neutral Governance Actually Changes

Axis 04 — The AI Infrastructure Shift: From Compute to Cost Placement

AI infrastructure introduced a new kind of control plane problem — one that doesn’t come from vendor licensing or governance models, but from architecture decisions that compound invisibly.

The AI inference cost shift is the clearest expression of this. Inference crossed 55% of total AI cloud infrastructure spend in early 2026. It is now the dominant AI workload by cost — not training. Yet most enterprise teams are still running inference on the same GPU clusters they use for training: the architectural equivalent of running your production database on your development server because both technically work.

The control plane problem here is cost placement. In traditional infrastructure, cost is a provisioning decision. You provision a VM, you pay for it. The cost model is predictable and bounded. In AI inference infrastructure, cost is a behavioral decision. Every token, every API call, every pipeline invocation adds to the tab. The compute is always on. The cost model is compounding and unbounded without explicit architectural controls.
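One example of an "explicit architectural control" is a spend cap that is enforced before the inference call rather than discovered on the invoice. The sketch below is illustrative only: `TokenBudget`, `BudgetExceeded`, and the prices are hypothetical, and a production version would need persistence, concurrency safety, and per-team scoping.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a call would push spend past the configured cap."""


class TokenBudget:
    """Turns behavioral cost back into a bounded cost by refusing calls at the cap."""

    def __init__(self, monthly_cap_usd: float, price_per_1k_tokens: float) -> None:
        self.monthly_cap_usd = monthly_cap_usd
        self.price_per_1k_tokens = price_per_1k_tokens  # hypothetical rate
        self.spent_usd = 0.0

    def charge(self, tokens: int) -> float:
        """Record the cost of a call, or raise before the call is made."""
        cost = tokens / 1000 * self.price_per_1k_tokens
        if self.spent_usd + cost > self.monthly_cap_usd:
            raise BudgetExceeded(
                f"call costing ${cost:.4f} would exceed cap of ${self.monthly_cap_usd:.2f}"
            )
        self.spent_usd += cost
        return cost


budget = TokenBudget(monthly_cap_usd=1.0, price_per_1k_tokens=0.5)
budget.charge(1000)
budget.charge(1000)
try:
    budget.charge(1)
except BudgetExceeded as err:
    print(f"rejected: {err}")
```

The point of the design is placement: the check sits in code the team owns, ahead of the platform call, instead of in a billing dashboard reviewed after the fact.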

The teams that have accepted a hyperscaler’s AI infrastructure control plane — where model selection, routing logic, token budgets, and scaling behavior are all governed by the platform defaults — have accepted a cost control plane they do not own. The bill reflects the platform’s decisions as much as their own.

The architectural response is cost-aware model routing: a decision layer between request and model that routes on complexity, confidence, and cost tolerance before the inference call is made. A simple keyword lookup should not get the same compute as a multi-step reasoning task. That routing decision is a control plane decision — and most teams have left it at the platform default.
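A minimal sketch of such a routing layer follows. Everything in it is an assumption made for illustration: the tier names and prices are invented, `estimate_complexity` is a deliberately crude keyword heuristic standing in for a real classifier, and `confidence` is taken to mean how much the caller trusts that complexity estimate.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelTier:
    name: str
    cost_per_1k_tokens: float  # hypothetical prices, not real vendor rates
    max_complexity: int        # highest complexity score this tier should handle


# Ordered cheapest first so the router can stop at the first adequate tier.
TIERS = [
    ModelTier("small", 0.0005, 0),
    ModelTier("medium", 0.003, 1),
    ModelTier("large", 0.015, 2),
]

REASONING_MARKERS = ("why", "compare", "explain", "step by step", "trade-off")


def estimate_complexity(prompt: str) -> int:
    """Crude 0-2 score: one point for length, one for reasoning markers."""
    text = prompt.lower()
    score = 0
    if len(text.split()) > 50:
        score += 1
    if any(marker in text for marker in REASONING_MARKERS):
        score += 1
    return score


def route(prompt: str, max_cost_per_1k: float, confidence: float = 1.0) -> ModelTier:
    """Pick the cheapest tier that is both adequate and within cost tolerance."""
    complexity = estimate_complexity(prompt)
    if confidence < 0.5:
        # Low trust in the estimate: route one level up rather than risk a bad answer.
        complexity = min(complexity + 1, 2)
    affordable = [t for t in TIERS if t.cost_per_1k_tokens <= max_cost_per_1k]
    if not affordable:
        raise ValueError("no model tier fits the cost tolerance")
    for tier in affordable:
        if tier.max_complexity >= complexity:
            return tier
    # Nothing affordable is adequate: fall back to the best tier the budget allows.
    return affordable[-1]


print(route("What is the capital of France?", max_cost_per_1k=1.0).name)
print(route("Compare both options and explain why", max_cost_per_1k=1.0).name)
```

The heuristic matters less than where the decision lives. Once routing is a function the team owns, the policy can be tested, versioned, and tightened without waiting on a platform default to change.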

The GPU placement question extends this further. Private LLM training hardware decisions — the cluster architecture, the network fabric, the PCIe topology, the BIOS configuration that affects the Adam optimizer tax — all of these are control plane decisions that determine the economics of the entire AI infrastructure stack. Teams that treat these as procurement decisions rather than architecture decisions will pay 30-40% more for the same effective compute.

The control plane insight: AI infrastructure cost is not a spend problem. It is a control plane placement problem. The teams controlling their AI cost curves are the ones who own the routing logic, the model selection policy, and the placement decisions — not the ones who accepted the platform defaults and hoped the bill would stabilize.

Full analysis: AI Inference Infrastructure Split | AI Inference Cost: Model Routing in Production

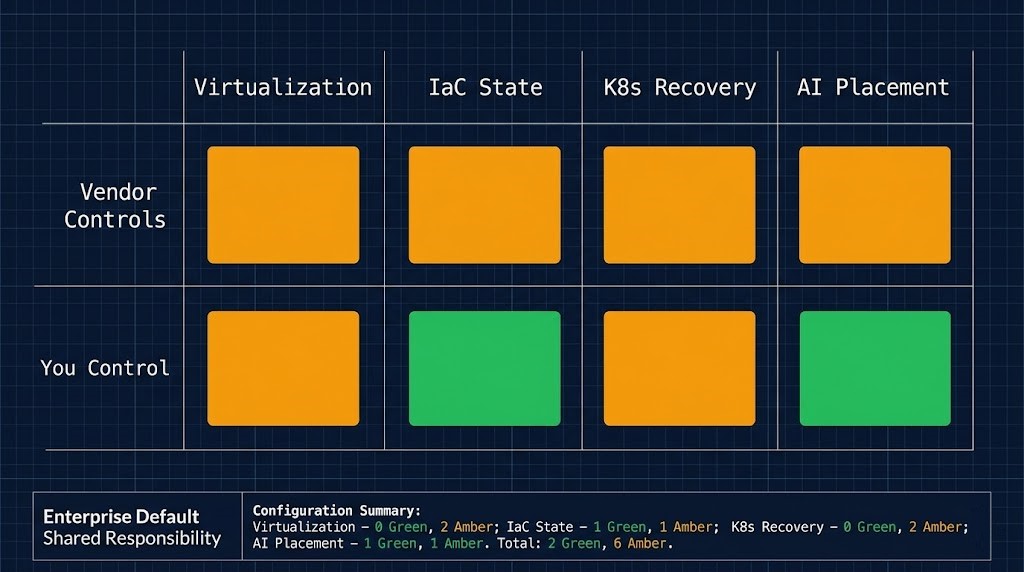

The Unified Decision Framework

Mapped across all four axes, the pattern is consistent. Every control plane shift follows the same structural sequence:

The Control Plane Shift Sequence

1. Vendor embeds control plane in product

2. Product adoption creates operational dependency

3. Vendor adjusts terms: pricing, licensing, governance, or architecture

4. Exit cost is revealed, higher than anticipated at adoption

5. Architect decides, under time pressure: accept new terms or engineer around them

The mistake most teams make is treating each instance of this sequence as a separate vendor negotiation. It isn’t. It’s a portfolio of control plane exposures — and the compounding risk is that renewal cycles on multiple dependencies are now arriving simultaneously.
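Treating the dependencies as a portfolio can start with something as simple as checking which renewals land close together. The sketch below assumes a hypothetical `Exposure` record and an arbitrary 90-day window; the dates are invented.

```python
from dataclasses import dataclass
from datetime import date
from itertools import combinations


@dataclass(frozen=True)
class Exposure:
    layer: str      # e.g. hypervisor, IaC, AI platform
    vendor: str
    renewal: date


def colliding_renewals(exposures: list[Exposure], window_days: int = 90) -> list[tuple[str, str]]:
    """Return pairs of layers whose renewal dates fall within window_days of each other."""
    ordered = sorted(exposures, key=lambda e: e.renewal)
    return [
        (a.layer, b.layer)
        for a, b in combinations(ordered, 2)
        if (b.renewal - a.renewal).days <= window_days
    ]


portfolio = [
    Exposure("hypervisor", "vendor-a", date(2026, 6, 30)),
    Exposure("iac", "vendor-b", date(2026, 8, 15)),
    Exposure("ai-platform", "vendor-c", date(2027, 1, 31)),
]
print(colliding_renewals(portfolio))
```

Any pair this returns is a period in which two exit decisions compete for the same migration staff and the same budget cycle.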

The three questions that should anchor every control plane evaluation:

1. Who controls the evolution of this system? Not just today — over a three to five year horizon. Vendor roadmap, community governance, internal ownership model, licensing trajectory. The Terraform BSL, the Broadcom VCF bundling, and the hyperscaler AI pricing model all looked reasonable at adoption. The control plane exposure was only visible at renewal.

2. What does it cost to change the answer to question one? This is the exit cost calculation — and it must include migration physics, not just licensing delta. NSX runbooks embedded in DR compliance are not a line item. Terraform state files encrypted under TFC-managed keys are not a line item. These are architectural dependencies that convert vendor contracts into operational facts.

3. Are you trading one control plane lock-in for another? OpenTofu replaces HashiCorp governance with community governance — and transfers support contract risk to internal operational ownership. Nutanix replaces vCenter’s control plane with Prism’s. A different vendor’s AI infrastructure replaces one cost model with another. The answer to question one changes. The structural question does not disappear.
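The second question is easier to argue about once the non-licensing terms are written down next to the licensing delta. A sketch, with hypothetical field names and invented figures:

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ExitCost:
    """All amounts in the same currency; every figure used here is invented."""
    licensing_delta: float    # change in licence spend; negative means savings
    state_migration: float    # moving state files, keys, and backends
    runbook_rewrite: float    # DR and compliance runbooks tied to the old platform
    retraining: float
    migration_window: float   # parallel running, freeze periods, overtime

    def migration_physics(self) -> float:
        """Everything that is not the licensing line item."""
        return (self.state_migration + self.runbook_rewrite
                + self.retraining + self.migration_window)

    def total(self) -> float:
        return self.licensing_delta + self.migration_physics()


estimate = ExitCost(
    licensing_delta=-200_000,
    state_migration=60_000,
    runbook_rewrite=90_000,
    retraining=40_000,
    migration_window=50_000,
)
print(estimate.migration_physics(), estimate.total())
```

In this invented case the licensing line alone shows a saving of 200,000, while the full estimate is a net cost of 40,000. That sign flip is what a licensing-only comparison hides.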

The Architect’s Verdict

The control plane shift is not a trend. It is the operating condition of enterprise infrastructure in 2026.

Every platform decision your organization makes this year — hypervisor renewal, IaC toolchain, Kubernetes backup posture, AI inference architecture — contains an embedded control plane question. The teams that recognize this will evaluate each decision against a consistent framework. The teams that don’t will negotiate each one separately, optimize each one locally, and wonder in eighteen months why the portfolio of decisions feels incoherent.

The right response is not to eliminate all vendor control planes. That’s neither possible nor desirable — vendor control planes exist because they solve real operational problems. The right response is to make the control plane decision explicitly, with visibility into the exit cost, before the renewal cycle forces it.

The three-question test for every platform decision:

The Three-Question Control Plane Test

01 / If the vendor changes the terms tomorrow — what breaks and what survives?

Map every dependency: licensing validation, management APIs, backup paths, routing logic. The answer is your actual exposure, not your theoretical one.

02 / If you migrate in three years — what is the actual cost?

Not licensing delta. State files, runbooks, operational muscle memory, team retraining, and the migration window itself. This is the exit cost that never appears in the initial evaluation.

03 / If you accept the control plane as-is — what architectural choices does it foreclose?

Every control plane dependency narrows the option space for future decisions. What can you no longer build, migrate to, or audit independently once you’ve accepted this dependency?
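The first question lends itself to a plain inventory. The sketch below uses a hypothetical `Dependency` record and invented entries; a dependency counts as breaking only if the vendor controls it and no owned fallback exists.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Dependency:
    function: str                   # what the platform does for you
    vendor_controlled: bool         # stops or degrades if the vendor changes terms
    fallback: Optional[str] = None  # an alternative you own, if one exists


def exposure(deps: list[Dependency]) -> dict[str, list[str]]:
    """Split dependencies into what breaks and what survives a change of terms."""
    result: dict[str, list[str]] = {"breaks": [], "survives": []}
    for dep in deps:
        if dep.vendor_controlled and dep.fallback is None:
            result["breaks"].append(dep.function)
        else:
            result["survives"].append(dep.function)
    return result


inventory = [
    Dependency("licensing validation", vendor_controlled=True),
    Dependency("management API", vendor_controlled=True, fallback="scripted CLI path"),
    Dependency("backup path", vendor_controlled=False),
    Dependency("routing logic", vendor_controlled=True),
]
print(exposure(inventory))
```

The "breaks" list is the actual exposure the first question asks for, and each entry is a candidate for the exit cost estimate in the second.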

Answer those three questions for every platform in your stack. The control plane shift is already happening. The only variable is whether you’re navigating it deliberately or reacting to it under pressure.

