The GKE “Zombie” Feature: Why gcloud Hides What the API Knows

When a Kubernetes founder tells you that you might be wrong about a platform limitation, you don’t argue with them. You open a terminal and try to break something.

This week, following my autopsy of a GKE IP exhaustion outage, I entered a debate with Tim Hockin (thockin), one of the original creators of Kubernetes. The contention was simple: is the Service CIDR in GKE Standard truly immutable, or does the managed control plane just pretend it is?

Vendor documentation says “No.” The CLI says “Error.” But the architectural intuition says, “Upstream Kubernetes can do this, so why can’t GKE?”

I spent the morning in the lab proving that sometimes, the “impossible” is just “hidden.” This is the story of a Zombie Feature — a capability that is alive in the kernel but dead in the dashboard — and why knowing the difference between a technical block and a tooling block can save your architecture during an outage.

The Decision Framework: Tooling Trust Levels

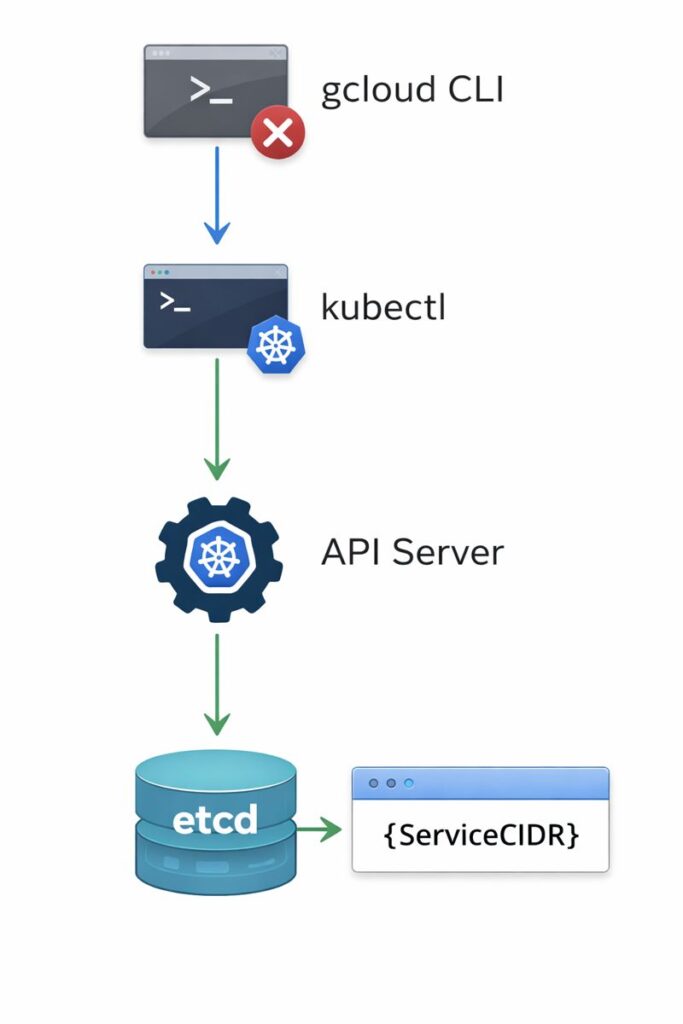

As architects, we often treat the vendor CLI — gcloud, aws, az — as the single source of truth. This is a mistake. When debugging edge cases or resource constraints, you must decide which layer of the stack to interrogate.

| Tooling Layer | Trust Level | Best Use Case | The Trap |
| --- | --- | --- | --- |
| Vendor Console (GUI) | Low | Quick visualization, billing checks. | Hides 80% of configuration options to “simplify” UX. |
| Vendor CLI (gcloud) | Medium | Day 1 provisioning, standard CRUD ops. | Enforces guardrails that may not exist in the engine. |
| Native API (kubectl) | High | Debugging, state validation, granular edits. | Shows raw state, including “unsupported” or beta objects. |

Architect’s Rule: When the CLI says “No,” but the upstream docs say “Yes,” always verify with the Native API.

This pattern appears across every major cloud provider — GKE Service CIDR constraints, AWS VPC subnet limits, Azure NSG rule caps. The CLI enforces opinionated guardrails that frequently don’t reflect actual API capability. For the broader GKE IP exhaustion context that triggered this investigation, see Client’s GKE Cluster Ate Their VPC: The Class E Rescue.
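The rule above boils down to one question: does the API server actually serve the resource? Here is a minimal sketch of that check. The helper name `api_serves` is my own invention for illustration, and it only inspects text on stdin, so it makes no claim about what any particular cluster will return.

```shell
#!/bin/sh
# api_serves NAME: succeeds if NAME appears as a whole line on stdin.
# NAME is expected in the "resource.group" form that
# `kubectl api-resources -o name` prints, e.g. servicecidrs.networking.k8s.io.
# (Hypothetical helper, not part of kubectl.)
api_serves() {
    grep -qx -- "$1"
}

# Intended use against a live cluster (not executed here):
#   kubectl api-resources --api-group=networking.k8s.io -o name \
#     | api_serves servicecidrs.networking.k8s.io && echo "served by the API"
```

Matching the whole line avoids false positives from a loose `grep`, which can hit similarly named resources.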

The Experiment: The Thockin Challenge

To test the theory, I spun up a disposable GKE Standard cluster (1.31+) and attempted to force-feed it a new GKE Service CIDR range. The goal was to expand the Service network without rebuilding the cluster — a critical requirement during a live outage.

Test 1: The Front Door (gcloud)

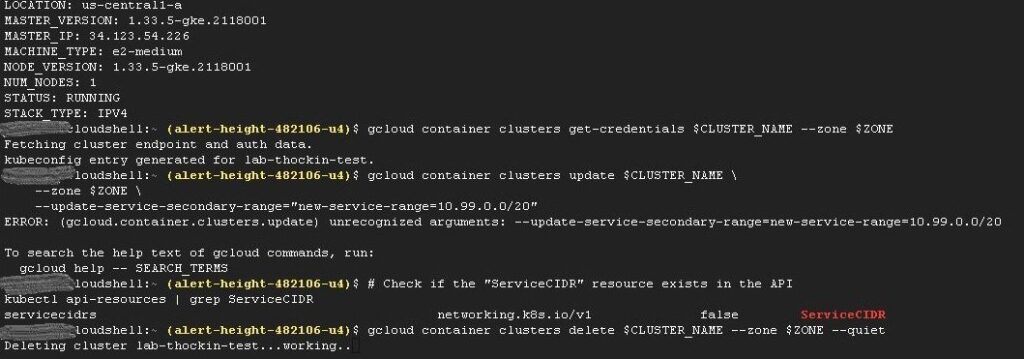

The Google Cloud CLI is the curated path. It applies the “safe” defaults Google wants you to use. I attempted to update the cluster using standard secondary range flags.

    gcloud container clusters update $CLUSTER_NAME \
      --update-service-secondary-range="new-service-range=10.99.0.0/20"

The result: unrecognized arguments.

The CLI didn’t just fail — it gaslighted me. It threw an error claiming the argument doesn’t exist. If you were an engineer troubleshooting an outage at 3 AM, this is where you would stop. You would assume the platform physically cannot support the request.
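One detail worth pausing on: an "unrecognized arguments" message comes from the CLI's own argument parser, which means the request most likely never reached the API at all. The sketch below is a rough heuristic for telling the two cases apart from captured stderr. The function name and the strings it matches are assumptions on my part about typical CLI error text, not a documented contract.

```shell
#!/bin/sh
# classify_cli_error: read CLI error text on stdin and guess where the
# rejection happened. The matched substrings are assumptions about common
# error wording; treat the answer as a hint, not a verdict.
classify_cli_error() {
    msg=$(cat)
    case "$msg" in
        *"unrecognized arguments"*)      echo "client-side" ;;  # rejected by the CLI parser
        *"ResponseError"*|*"HttpError"*) echo "server-side" ;;  # the API answered with an error
        *)                               echo "unknown" ;;
    esac
}

# Intended use (not executed here), with a hypothetical flag:
#   gcloud container clusters update "$CLUSTER_NAME" --some-flag 2>&1 | classify_cli_error
```

A "client-side" result tells you nothing about what the engine can do. It only tells you the tool declined to ask.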

Test 2: The Back Door (kubectl)

If the CLI is the menu, the API is the kitchen. I bypassed the vendor tooling and queried the API server directly to see if it recognized the upstream ServiceCIDR resource.

    kubectl api-resources | grep ServiceCIDR

The result:

    NAME           APIVERSION             NAMESPACED   KIND
    servicecidrs   networking.k8s.io/v1   false        ServiceCIDR

The resource is right there. NAMESPACED is false, so it is cluster-scoped; the API version is v1, so it is stable; and the API server is actively serving it. The feature isn’t missing. The door handle has just been removed.
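For reference, this is roughly what an additional ServiceCIDR object looks like in upstream Kubernetes. The sketch only prints a manifest; the helper name `servicecidr_manifest` and the object name `extra-services` are hypothetical, and the range is the one from my gcloud attempt. Whether GKE's managed control plane accepts or honors such an object is exactly the open question, so treat this as a lab exercise for a disposable cluster, not a procedure.

```shell
#!/bin/sh
# servicecidr_manifest NAME CIDR: print a ServiceCIDR manifest to stdout.
# Field layout follows the upstream networking.k8s.io/v1 ServiceCIDR kind;
# NAME and CIDR are caller-supplied and purely illustrative.
servicecidr_manifest() {
    cat <<EOF
apiVersion: networking.k8s.io/v1
kind: ServiceCIDR
metadata:
  name: $1
spec:
  cidrs:
  - $2
EOF
}

# Disposable cluster only (not executed here). A server-side dry run asks
# the API server to validate the object without persisting it:
#   servicecidr_manifest extra-services 10.99.0.0/20 \
#     | kubectl apply --dry-run=server -f -
#   kubectl get servicecidrs
```

The dry run is a cheap way to learn whether the API server rejects the object outright before anything changes.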

Operational Cost Analysis: OpEx Impact

We often talk about cloud costs in terms of licensing and compute, but the hidden killer is Operational Expense during downtime. In the outage that sparked this research, we faced a rebuild vs. repair decision.

- Scenario A — Trust the CLI: The CLI says we can’t add IPs. We must rebuild the cluster. Cost: 4 Engineers × 12 Hours + business downtime = high OpEx.

- Scenario B — Trust the API: The API reveals a path to patch the configuration. Cost: 1 Architect × 2 Hours + zero downtime = low OpEx.
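Putting numbers on the two scenarios makes the gap hard to ignore. This is only the arithmetic from the bullets above, counting engineer-hours and leaving business downtime out because I have no figure for it.

```shell
#!/bin/sh
# engineer_hours PEOPLE HOURS: total person-hours for a response.
engineer_hours() {
    echo $(( $1 * $2 ))
}

rebuild=$(engineer_hours 4 12)   # Scenario A: 4 engineers for 12 hours
repair=$(engineer_hours 1 2)     # Scenario B: 1 architect for 2 hours

echo "rebuild=${rebuild}h repair=${repair}h ratio=$(( rebuild / repair ))x"
# prints: rebuild=48h repair=2h ratio=24x
```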

Knowing that ServiceCIDR exists in the API changes the risk calculation entirely. Even if patching it is “unsupported” by Google Support, having the option to fix it in a crisis is a powerful architectural lever. To avoid getting trapped by these vendor limitations, audit your environments before the outage hits; the Engineering Workbench has tools designed specifically for that.

The broader pattern of Kubernetes Day 2 failures — where the platform appears healthy but a hidden constraint is the real cause — is covered in Kubernetes Day-2 Incidents: 5 Real-World Failures.

Architect’s Verdict

Would I cowboy-engineer a manual API patch for GKE Service CIDR in a production cluster on a Tuesday afternoon? No — that creates drift between your Terraform state and the actual cluster state, and drift is a governance problem that compounds. The infrastructure as state model explains exactly why.

However, during a P0 outage, the rules change. Knowing the difference between a Technical Block (the kernel can’t do it) and a Tooling Block (the CLI won’t let you) is what separates a Senior Engineer from a Staff Architect.

If you’re running GKE Standard and haven’t audited your Service CIDR headroom: do it before you need to. The IP exhaustion failure mode is well-documented, and the remediation options narrow significantly once you’re in a live outage. The GKE IP Exhaustion triage post covers the math and the Class E rescue path.
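A headroom audit can be as simple as comparing allocated ClusterIPs against the size of the range. The sketch below is one way to do it. The function names are mine, the capacity figure is a raw address count that ignores any addresses the allocator reserves, and the 3000-service figure in the comment is a made-up example.

```shell
#!/bin/sh
# cidr_capacity PREFIX: raw number of IPv4 addresses in a /PREFIX range.
# Approximate upper bound; reserved addresses are not subtracted.
cidr_capacity() {
    echo $(( 1 << (32 - $1) ))
}

# count_cluster_ips: count stdin lines that contain a digit, i.e. look like
# an allocated IP. Headless ("None") and empty lines are skipped.
count_cluster_ips() {
    grep -c '[0-9]' || true
}

# used_pct USED PREFIX: integer percentage of the range in use (rounded down).
used_pct() {
    echo $(( $1 * 100 / $(cidr_capacity "$2") ))
}

# Intended use against a live cluster (not executed here):
#   used=$(kubectl get svc -A \
#     -o jsonpath='{range .items[*]}{.spec.clusterIP}{"\n"}{end}' | count_cluster_ips)
#   used_pct "$used" 20
# Hypothetical example: 3000 ClusterIPs in a /20 gives used_pct 3000 20 -> 73
```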

If you’re designing a new GKE cluster: plan your Service CIDR with 3x headroom from day one. The /20 default sounds generous until you’re running 200+ services across multiple namespaces. Changing it later is the conversation this post is about.
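To turn "3x headroom" into a prefix length, multiply your expected Service count by three and find the smallest range that still fits. A minimal sketch follows, with a hypothetical figure of 1500 Services and a helper name of my own. It counts raw addresses only, so treat the result as a floor.

```shell
#!/bin/sh
# prefix_for NEEDED: longest IPv4 prefix (smallest range) whose raw address
# count is at least NEEDED. Reserved addresses are not accounted for.
prefix_for() {
    needed=$1
    prefix=32
    while [ "$prefix" -gt 0 ] && [ $(( 1 << (32 - prefix) )) -lt "$needed" ]; do
        prefix=$(( prefix - 1 ))
    done
    echo "$prefix"
}

services=1500   # hypothetical expected Service count
headroom=3      # the 3x rule of thumb from this post

echo "/$(prefix_for $(( services * headroom )))"
# prints: /19
```

With these made-up numbers the answer is already one bit wider than a /20, which is the kind of thing you want to discover on a whiteboard rather than in an incident channel.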

If you’re troubleshooting any cloud platform constraint: always interrogate the API before accepting the CLI’s answer as a hard limit. The CLI enforces opinionated guardrails — the API reflects actual capability. Treat them as different tools with different trust levels, as the table above shows.

Special thanks to Tim Hockin for sending me down this rabbit hole.

The Kubernetes Cluster Orchestration pillar is the full reference for GKE and Kubernetes architecture on rack2cloud.

