Hybrid Cloud vs Multi-Cloud Architecture: The Engineering Reality Nobody Documents

The boardroom debate about moving to the cloud is over. What replaced it is harder: the engineering reality of managing what that decision actually produced. Hybrid cloud vs multi-cloud architecture isn’t a vendor comparison anymore — it’s a description of the operational burden your team carries every day, measured in egress bills, fragmented identity planes, and networking complexity that no migration guide prepared you for.

By 2025, most enterprise infrastructure teams weren’t choosing between hybrid and multi-cloud. They were already running both, simultaneously, under budget pressure, with tooling that was designed for a simpler world. This post documents what that actually looks like on the ground — not the marketing diagram version, but the version your on-call rotation lives in.

Resetting the Definitions

Before you can reason clearly about hybrid cloud vs multi-cloud architecture, you need definitions that reflect production environments rather than analyst frameworks.

Hybrid Cloud: The Tethered Anchor

Hybrid cloud in production isn’t a transitional state on the way to fully cloud-native. For most enterprise teams it’s a permanent architectural condition driven by physics and economics. You have on-premises infrastructure — mainframe workloads, manufacturing OT systems, regulatory-bound storage, or databases too large to move — that cannot be migrated on any realistic timeline. The cloud portion attaches to this anchor to provide burst compute, user-facing front-ends, or DR sites.

The engineering reality is that you are managing the tether (the AWS Direct Connect or Azure ExpressRoute link), not the cloud. Your binding production constraint is latency between an app server in us-east-1 and a database in your Ohio datacenter. You aren’t cloud-native. You are network-dependent, and the network is the failure domain.
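A rough latency budget shows why the tether dominates. The sketch below is a back-of-envelope model, not a measurement: the function name, the round-trip times, and the query count are all hypothetical, and it assumes the queries run sequentially rather than in parallel.

```python
def request_latency_ms(round_trips: int, rtt_ms: float, server_ms: float = 0.0) -> float:
    """Estimate latency for one request that makes sequential database
    calls, each paying one network round trip."""
    if round_trips < 0 or rtt_ms < 0 or server_ms < 0:
        raise ValueError("inputs must be non-negative")
    return round_trips * rtt_ms + server_ms


# Hypothetical figures: one page render issuing 40 sequential queries.
same_site_ms = request_latency_ms(round_trips=40, rtt_ms=0.5)
over_tether_ms = request_latency_ms(round_trips=40, rtt_ms=15.0)

print(f"same site:   {same_site_ms:.0f} ms")
print(f"over tether: {over_tether_ms:.0f} ms")
```

The point of the model is that the multiplier is the query count, not the bandwidth of the link. A chatty application that was acceptable next to its database becomes unusable across the tether without any change to the code.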

Multi-Cloud: Permanent Silos, Not Workload Mobility

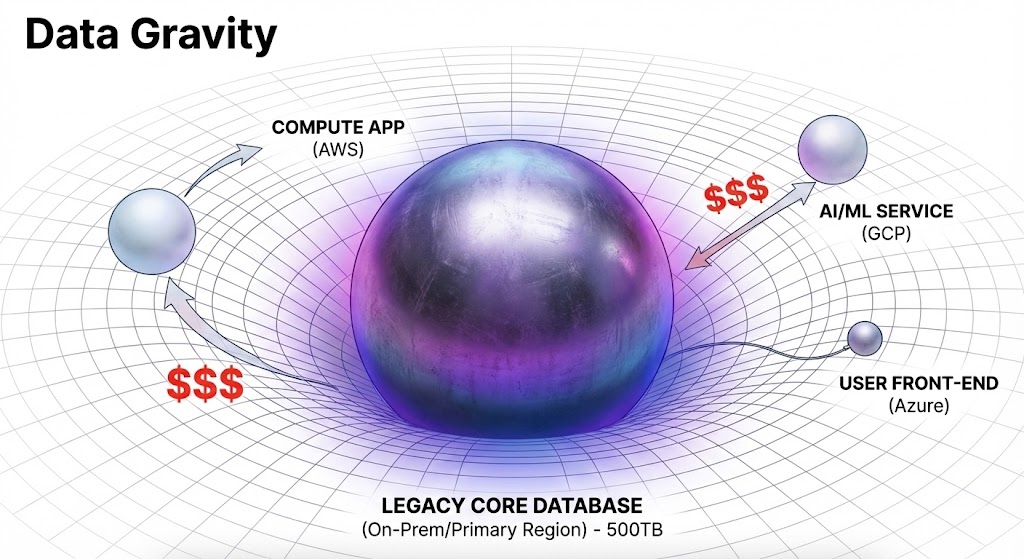

The 2020 vision of multi-cloud was workload mobility: run the same VM in AWS today and Azure tomorrow to arbitrage costs. That vision failed. Data gravity killed it. What multi-cloud actually means in production is best-of-breed silos, each locked in by organizational decisions that predate your tenure:

- Azure because your organization runs on M365 and Active Directory, making Entra ID the path of least resistance for corporate OS workloads — which typically leads to the Azure Landing Zone governance model.

- AWS because your developers built their CI/CD pipelines around Lambda and S3 five years ago and the replatforming cost exceeds the organizational will to do it.

- GCP because the data analytics and AI/ML stack fit a specific workload profile that neither AWS nor Azure matched at the time the decision was made — a dynamic the AWS vs Azure vs GCP decision framework maps in detail.

You aren’t moving workloads between providers. You are building secure networking bridges and consistent governance policies across three radically different IAM models, API surfaces, and billing structures — simultaneously.

The Three Engineering Battlegrounds

In hybrid cloud vs multi-cloud architecture, the operational burden concentrates in three specific areas. These aren’t theoretical risks — they’re the problems your on-call rotation encounters in production.

Egress Shock and Networking Complexity

In a single cloud, networking is relatively flat and cost-predictable. In hybrid and multi-cloud environments it becomes expensive spaghetti. Data gravity is the governing constraint — compute is cheap and elastic, multi-terabyte datasets are not. The surprise bill arrives when an application in Cloud A pulls a large dataset from Cloud B or on-premises, and nobody modeled the transfer cost at design time.

Two engineering challenges follow. The first is designing a hub-and-spoke transit network that doesn’t hairpin traffic unnecessarily. The second is enforcing strict egress filtering with cost alerting at the network level, so that a misconfigured dev/test environment can’t drain the budget over a weekend. Cloud egress costs are an architecture problem before they’re a FinOps problem: the teams that control them modeled data movement as a first-class constraint at design time, not after the first invoice. The deeper cost mechanics of what egress shock looks like on an actual invoice are documented in the lift-and-shift cost trap analysis.
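Modeling data movement at design time can start as a table of flows and rates. The sketch below is one minimal way to do that, under loud assumptions: the `Flow` type, the location names, the flow volumes, and especially the per-GB rates are hypothetical placeholders, not published provider prices.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Flow:
    name: str
    source: str
    destination: str
    gb_per_day: float


# Hypothetical USD-per-GB rates keyed by (source, destination).
# Substitute the figures from your own provider agreements.
RATES_USD_PER_GB = {
    ("cloud_a", "cloud_b"): 0.09,
    ("cloud_b", "cloud_a"): 0.08,
    ("cloud_a", "on_prem"): 0.02,
}


def monthly_cost(flow: Flow, days: int = 30) -> float:
    """Projected monthly transfer cost for one flow."""
    rate = RATES_USD_PER_GB.get((flow.source, flow.destination))
    if rate is None:
        # An unmodeled path is treated as a design error, not as free.
        raise KeyError(f"no rate modeled for {flow.source} -> {flow.destination}")
    return flow.gb_per_day * days * rate


def flows_over_budget(flows, budget_usd: float):
    """Return (name, cost) pairs for flows whose monthly cost exceeds the budget."""
    costs = [(flow.name, monthly_cost(flow)) for flow in flows]
    return [(name, cost) for name, cost in costs if cost > budget_usd]


# Hypothetical flows for illustration.
flows = [
    Flow("nightly-etl", "cloud_b", "cloud_a", gb_per_day=500.0),
    Flow("log-shipping", "cloud_a", "on_prem", gb_per_day=20.0),
]

for name, cost in flows_over_budget(flows, budget_usd=1000.0):
    print(f"{name}: projected ${cost:,.2f}/month exceeds budget")
```

Raising on an unmodeled path is a deliberate choice here. It forces every new cross-boundary flow to be priced before it ships, which is the design-time discipline this section argues for.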

Vendor lock-in in multi-cloud environments also arrives through networking before it arrives through APIs. The networking layer is where exit cost accumulates silently — and it compounds with every workload you add to a provider’s transit fabric.

The Lowest Common Denominator Tooling Trap

Managing multiple clouds drives teams toward abstraction tools (Terraform, Pulumi, Crossplane) with the promise of write-once, deploy-anywhere IaC. The promise doesn’t survive contact with provider divergence. To support AWS, Azure, and vSphere behind a single interface, an abstraction layer is forced down to a lowest-common-denominator feature set. A new AWS capability that would reduce your compute cost by 30% can’t be used if the abstraction layer hasn’t caught up or if Azure has no equivalent.
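The lowest-common-denominator effect is, at its core, a set intersection. The sketch below illustrates it with made-up capability names. The `CAPABILITIES` table is hypothetical and is not an inventory of what any provider or tool actually supports.

```python
# Hypothetical capability names per target platform, for illustration only.
CAPABILITIES = {
    "aws": {"vm", "block_storage", "load_balancer", "spot_pricing", "arm_instances"},
    "azure": {"vm", "block_storage", "load_balancer", "spot_pricing"},
    "vsphere": {"vm", "block_storage"},
}


def common_denominator(capabilities):
    """Features a universal abstraction can expose on every target."""
    if not capabilities:
        return set()
    return set.intersection(*capabilities.values())


def stranded_features(capabilities):
    """Per target, the native features the universal layer cannot expose."""
    common = common_denominator(capabilities)
    return {
        target: sorted(features - common)
        for target, features in capabilities.items()
    }


print("exposed everywhere:", sorted(common_denominator(CAPABILITIES)))
for target, features in stranded_features(CAPABILITIES).items():
    print(f"{target}: stranded {features}")
```

Every target added to the table can only shrink the intersection, never grow it. The stranded list per provider is a reasonable first estimate of what the universal layer is costing you.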

The engineering decision this creates — when to use the generic abstraction versus when to break glass and use native CloudFormation or ARM templates — generates tooling drift that compounds over time. The Wrapper Tax is the accumulated cost of that drift: feature lag, debugging complexity, and tribal knowledge requirements that make every incident response slower. The full mechanics of why Terraform multi-cloud module abstraction fails in production are worth understanding before you commit to a universal module pattern.

The Fragmented Security Boundary

This is the highest-stakes battleground. In an on-premises environment your perimeter was a firewall. In hybrid and multi-cloud architecture your perimeter is identity — and your attack surface is fragmented across dozens of accounts, subscriptions, and providers with no unified control plane.

The lateral movement path looks like this in practice: phish a developer’s M365 credential, pivot into Azure, use a stored service principal key to access an AWS S3 bucket, find a backup file containing on-premises credentials, deploy ransomware to the VMware environment. Each hop crosses a provider boundary. Each boundary is a detection gap.

Your identity system is your largest single point of failure in a multi-cloud environment — and most teams don’t model it as a failure domain until after a breach. The fragmentation that makes multi-cloud operationally complex makes it forensically difficult as well. Detecting lateral movement across provider boundaries requires logging and correlation infrastructure that most teams build reactively.
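Correlation across provider boundaries can be sketched in a few lines once events are normalized. The example below assumes a lot: that logs from each environment have already been reduced to a hypothetical common schema (`identity`, `provider`, `time`), and that each provider-local principal has already been mapped to one shared identity. In practice that mapping is most of the work, and real log formats differ from this shape.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def cross_provider_hops(events, window=timedelta(hours=1)):
    """Return (identity, from_provider, to_provider, time) for consecutive
    events by one identity that change provider within the window."""
    by_identity = defaultdict(list)
    for event in events:
        by_identity[event["identity"]].append(event)

    hops = []
    for identity, items in by_identity.items():
        items.sort(key=lambda e: e["time"])
        for prev, cur in zip(items, items[1:]):
            changed = prev["provider"] != cur["provider"]
            if changed and cur["time"] - prev["time"] <= window:
                hops.append((identity, prev["provider"], cur["provider"], cur["time"]))
    return hops


# Hypothetical normalized events.
t0 = datetime(2025, 1, 1, 9, 0)
events = [
    {"identity": "dev@example.com", "provider": "azure", "time": t0},
    {"identity": "dev@example.com", "provider": "aws", "time": t0 + timedelta(minutes=10)},
    {"identity": "dev@example.com", "provider": "on_prem", "time": t0 + timedelta(hours=3)},
    {"identity": "ops@example.com", "provider": "aws", "time": t0},
    {"identity": "ops@example.com", "provider": "aws", "time": t0 + timedelta(minutes=5)},
]

hops = cross_provider_hops(events)
for identity, src, dst, when in hops:
    print(f"{when.isoformat()} {identity}: {src} -> {dst}")
```

A hop is not an alert on its own, since many identities legitimately work across providers. It is a candidate for review, and the fixed time window is a blunt filter that a real pipeline would tune or replace.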

In this environment, backup architecture is no longer just recovery insurance. It’s the last line of defense when the control plane is compromised. If your production data spans three clouds and an on-premises datacenter, your backup data must sit outside that entire blast radius — on a separate credential plane, with administrative air-gapping enforced architecturally rather than by policy. Designing backup systems for an adversary that knows your playbook starts with understanding that the attacker’s first target is the backup catalog, not the production data.
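One way to test whether the backup credential plane is actually separate is to check it for overlap with every production plane. The sketch below assumes you can export administrator identities per environment into plain sets. The plane names and identities are hypothetical, and a real audit would also need to cover service accounts, federation trusts, and stored keys.

```python
def shared_admins(production_planes, backup_admins):
    """Map each production plane to the admin identities it shares with
    the backup plane. An empty result means no direct overlap was found."""
    backup = set(backup_admins)
    overlaps = {}
    for plane, admins in production_planes.items():
        common = set(admins) & backup
        if common:
            overlaps[plane] = sorted(common)
    return overlaps


# Hypothetical exports of administrator identities.
production_planes = {
    "aws": {"alice", "svc-deploy"},
    "azure": {"alice", "bob"},
    "on_prem": {"carol"},
}
backup_admins = {"bob", "backup-operator"}

for plane, identities in shared_admins(production_planes, backup_admins).items():
    print(f"backup plane shares admins with {plane}: {identities}")
```

Any non-empty result means a single compromised identity reaches both production and its backups, which is the condition the air-gap is supposed to rule out.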

Architect’s Verdict

Hybrid cloud vs multi-cloud architecture isn’t a choice most teams make deliberately — it’s the environment they inherit. The engineering challenge isn’t which model to adopt. It’s how to impose governance, cost visibility, and security boundaries on infrastructure that was assembled from organizational decisions made years apart, by different teams, under different constraints.

- ✓ Model egress cost as a first-class architectural constraint at design time — not after the first invoice

- ✓ Treat identity as your perimeter in hybrid and multi-cloud environments — the firewall model doesn’t apply across provider boundaries

- ✓ Accept that multi-cloud means permanent silos — design for governance across silos rather than workload portability between them

- ✓ Place backup infrastructure outside the blast radius of all production clouds — separate credential plane, administrative air-gapping enforced architecturally

- ✓ Use native IaC resources per provider rather than universal abstraction layers — the Wrapper Tax compounds faster in multi-cloud environments than in single-cloud ones

- ✗ Assume workload portability between clouds is achievable at scale — data gravity makes it economically unviable for most enterprise workloads

- ✗ Wait for the egress bill to model transfer costs — by the time it arrives the architecture is deployed and the cost structure is locked

- ✗ Treat provider IAM models as equivalent — AWS, Azure, and GCP have fundamentally different identity architectures that require provider-specific governance

- ✗ Count backup infrastructure as secure if it shares credentials with production — administrative air-gapping by policy is not air-gapping

- ✗ Build universal Terraform abstraction layers to manage multi-cloud complexity — you’re creating a fourth control plane to manage on top of the three you already have

Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.