Azure Landing Zone vs. AWS Control Tower: The Architect’s Deep Dive

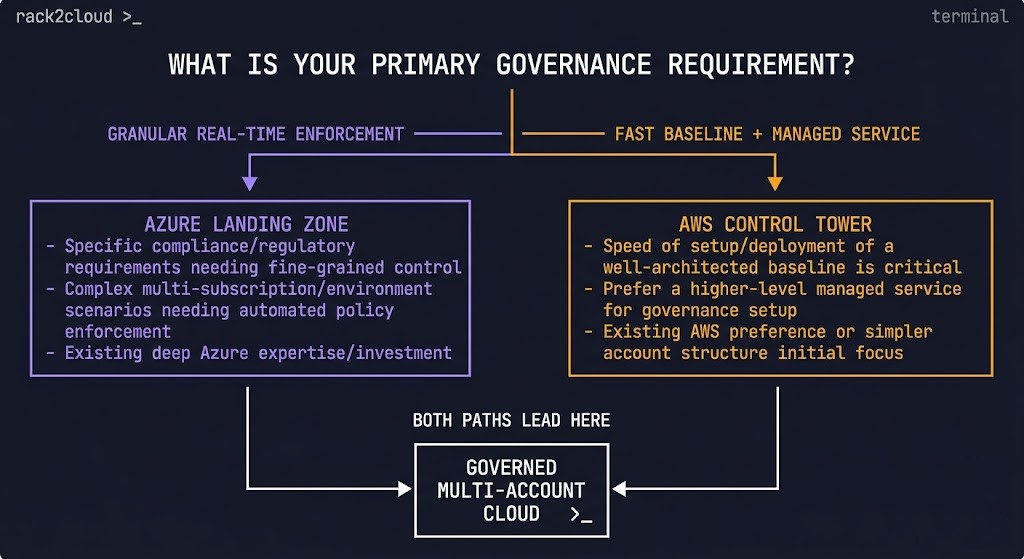

In 2026, the Azure Landing Zone vs AWS Control Tower decision remains one of the most consequential governance choices an architect makes before a single workload goes live. Both solve the same problem (a secure, governed, scalable multi-account foundation), but they solve it in fundamentally different ways, with very different operational consequences downstream.

For architects working across Azure Cloud Architecture and AWS Cloud Architecture environments, knowing both platforms offer a landing zone solution isn’t enough. The differences in how they’re architected, deployed, and maintained have massive implications for Day-2 overhead, policy granularity, and how much customization debt your team accumulates over time.

AWS Control Tower is a managed service — an opinionated orchestrator you turn on. Azure Landing Zone (specifically the Enterprise-Scale architecture) is a prescriptive architecture and methodology implemented via infrastructure as code. One is a product. The other is a blueprint. That distinction defines everything that follows.

Before the governance model decision comes the provider selection decision. If your team is still evaluating whether Azure or AWS is the right foundation — or validating that choice against architectural tradeoffs — the Cloud Provider Decision Framework: AWS vs Azure vs GCP covers the five axes that should precede this conversation, including how Azure’s enterprise abstraction model and AWS’s low-level control primitives point teams toward fundamentally different governance patterns from day one.

AWS Control Tower gives you a governed baseline immediately — at the cost of long-term flexibility.

The decision is less about features and more about whether your team is prepared to operate governance as code.

This deep dive covers the mechanics beneath both approaches — governance engines, hierarchy models, networking integration, where each breaks in production, and the Day-2 reality most comparison guides skip. For a structured progression covering the full cloud governance stack, see the Cloud Architecture Learning Path.

Azure Landing Zone vs AWS Control Tower: Same Goal, Different Control Model

The most important thing to understand about the Azure Landing Zone vs AWS Control Tower comparison is that you are not comparing two products. You are comparing a managed service against an architecture pattern — and that distinction has operational consequences that compound over years, not sprints.

AWS Control Tower is a product you enable. It sits as an abstraction layer on top of AWS Organizations, CloudTrail, Config, and IAM Identity Center. It makes decisions for you. It moves fast from zero to governed. It also constrains you in ways that become expensive to work around as organizational complexity grows.

Azure Landing Zone (Enterprise-Scale) is a reference architecture you deploy — Bicep or Terraform implementations of a prescriptive blueprint maintained by Microsoft. You own the IaC. You own the pipeline. You own the drift detection. That ownership is a cost on day one and a competitive advantage at year two.

Think of AWS Control Tower as a guarded highway — fast entry, strict rules, limited deviation. Azure Landing Zone is a city plan — customizable, but requires construction expertise to execute correctly and maintain over time.

The Core Philosophy: Managed Service vs Modular Architecture

AWS Control Tower: The Prescriptive Service

Control Tower is highly opinionated, aimed at getting teams from zero to a baseline-governed state quickly. It abstracts the complexity of Organizations, CloudTrail, Config, and IAM Identity Center behind a single service interface.

Pros: Speed of deployment, standardized best practices out-of-the-box, simplified initial experience — particularly for teams without deep IaC maturity.

Cons: Can feel like a black box. Customization outside its prescriptive path requires add-on frameworks — Customizations for Control Tower (CfCT) or Account Factory for Terraform (AFT) — which increases complexity significantly and creates a second layer of tooling to maintain.

Azure Landing Zone (Enterprise-Scale): The Modular Architecture

Microsoft provides Bicep and Terraform reference implementations of the Enterprise-Scale architecture. It’s a blueprint you deploy and own entirely, which means you can modify it to fit exact organizational requirements; deviating from the blueprint, however, requires both the expertise to do it and the discipline to maintain it.

Pros: Highly flexible and modular. Integrates natively with existing Azure DevOps or GitHub workflows. Rewards teams with IaC maturity.

Cons: Higher initial learning curve. Requires a solid grasp of Management Groups, Policy, and RBAC before deployment. IaC ownership is permanent — the architecture doesn’t manage itself.

For teams managing Azure Landing Zone via Terraform, OpenTofu adoption is a control plane migration — not just a license change. The OpenTofu Readiness Bridge audits HCL configurations for BSL-divergence and generates migration templates, which matters if you’re managing landing zone IaC across a long-lived enterprise codebase.

Hierarchy and Structure: Management Groups vs Organizational Units

Azure: The Power of Management Groups

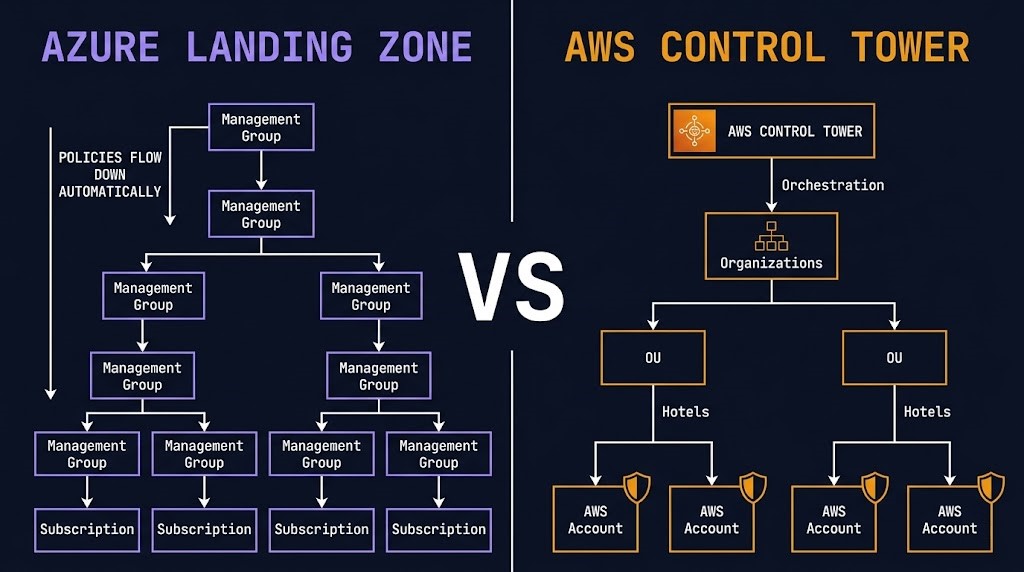

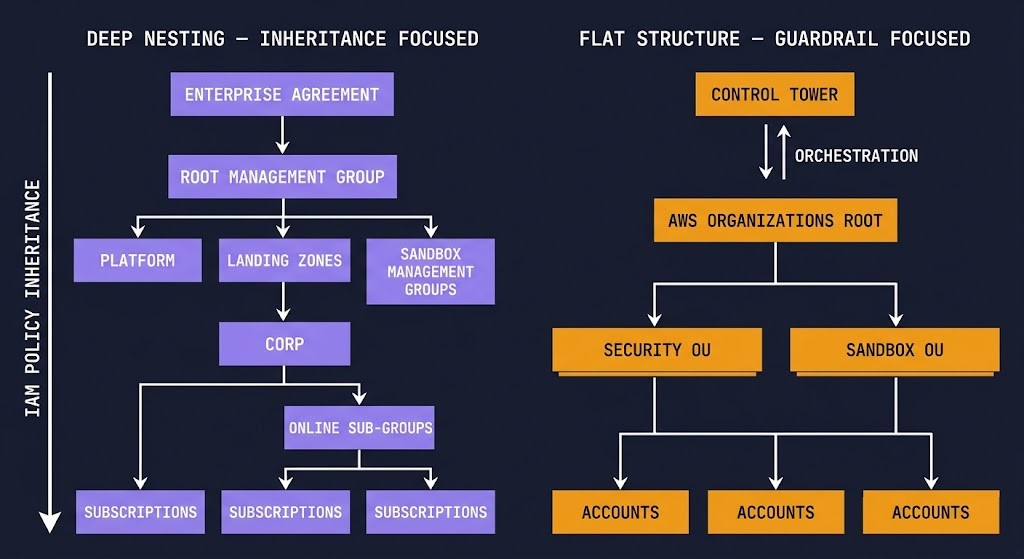

Azure’s structural advantage is the Management Group hierarchy. Unlike AWS Organizations, which has historically favored flatter structures, Azure supports deep nesting (up to six levels below the root) designed specifically for policy inheritance. A single policy assignment at the root Management Group cascades automatically to every subscription below it, including new ones provisioned after the policy was written.

Azure separates the billing construct (Enterprise Agreement enrollment) from the organizational construct (Management Groups). The ALZ reference architecture dictates a specific hierarchy — Corp vs Online management groups — to apply distinct policies to different workload types. Subscriptions live under these groups and inherit policies automatically. This inheritance model is what makes Azure Policy genuinely powerful at enterprise scale.
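The inheritance behavior described above can be sketched as a walk from a subscription up to the root, collecting every assignment on the way. This is a minimal model, not the Azure Policy engine: the group names loosely follow the ALZ reference layout, while the intermediate root (`contoso`), the subscription names, and all assignment names are hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Scope:
    """A management group or subscription in a simplified hierarchy (illustrative model)."""
    name: str
    parent: Scope | None = None
    assignments: list[str] = field(default_factory=list)

    def chain_from_root(self) -> list[Scope]:
        """Return the path from the root scope down to this scope."""
        chain = []
        node: Scope | None = self
        while node is not None:
            chain.append(node)
            node = node.parent
        return chain[::-1]


def effective_assignments(scope: Scope) -> list[str]:
    """Every assignment on the scope or on any ancestor applies (inheritance)."""
    return [a for node in scope.chain_from_root() for a in node.assignments]


# Hypothetical hierarchy shaped like the ALZ reference layout.
root = Scope("Tenant Root Group", assignments=["allowed-locations"])
org = Scope("contoso", root, ["require-costcenter-tag"])
landing_zones = Scope("landingzones", org, ["deploy-diagnostics"])
corp = Scope("corp", landing_zones, ["deny-public-endpoints"])
online = Scope("online", landing_zones)

# Subscriptions created after the assignments were made still inherit all of them.
payments = Scope("sub-payments-prod", corp)
web = Scope("sub-public-web", online)

print(effective_assignments(payments))
print(effective_assignments(web))
```

Adding an assignment to `landingzones` in this model changes the result for every subscription below it without touching any subscription, which is the property that makes the hierarchy useful for governance.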

AWS: Organizations and OUs

AWS uses AWS Organizations and Organizational Units (OUs). Control Tower mandates a specific starting structure: a Security OU holding the Log Archive and Audit accounts, and an additional OU (named Sandbox by default) for experimentation.

While OUs can be nested up to five levels deep, Control Tower’s rigidity means enrolled accounts must stay within the structure it governs. Moving accounts after enrollment, or integrating an existing complex organization, can knock Control Tower’s guardrails out of alignment in ways that are difficult to recover from cleanly. This is the most common Day-1 mistake teams make when adopting Control Tower at scale.

Governance Engines: Where the Operational Consequences Live

This is where the technical differences become most pronounced — and where the downstream operational consequences are most severe.

Azure Policy: The Scalpel

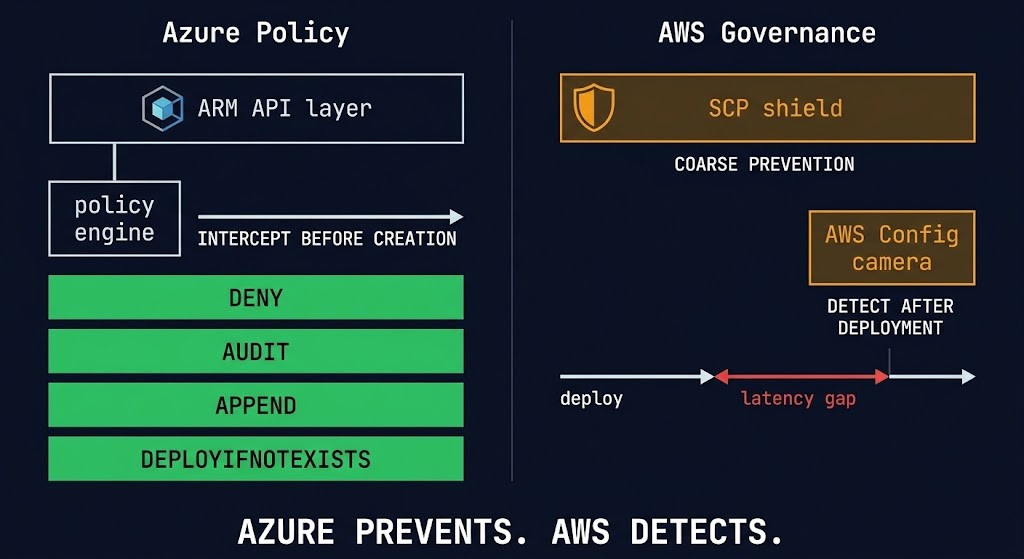

Azure Policy is arguably the most powerful governance engine in public cloud. It sits directly at the ARM (Azure Resource Manager) API layer: every control-plane request to create or modify a resource is evaluated by Azure Policy first, before the resource exists, not after.

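Four of its effects show the range of enforcement. Deny blocks non-compliant deployments at request time. Audit flags non-compliant resources without blocking them. Append adds required fields to a request (for tags, Microsoft recommends the Modify effect instead). DeployIfNotExists deploys a missing related resource or configuration automatically for new and updated resources, with no Lambda and no separate remediation pipeline; resources that already existed before the assignment are brought into line with a remediation task.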
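As a sketch of how those effects are expressed, the definition below follows Azure Policy’s documented definition structure (`mode`, `parameters`, and a `policyRule` with `if`/`then`) and parameterizes the effect, so the same rule can run as Audit in one scope and Deny in another. The display name and the `CostCenter` tag are illustrative, and `evaluate` is a toy stand-in for the real engine that only understands this one `exists` condition.

```python
import json

# Custom policy definition body; the tag name and display name are illustrative.
policy_definition = {
    "properties": {
        "displayName": "Require a CostCenter tag on resources",
        "mode": "Indexed",  # evaluate only resource types that support tags and location
        "parameters": {
            "effect": {
                "type": "String",
                "allowedValues": ["Audit", "Deny", "Disabled"],
                "defaultValue": "Deny",
            }
        },
        "policyRule": {
            "if": {"field": "tags['CostCenter']", "exists": "false"},
            "then": {"effect": "[parameters('effect')]"},
        },
    }
}


def evaluate(resource: dict, effect: str) -> str:
    """Toy evaluation of the single 'exists' condition above; not the real policy engine."""
    tag_missing = "CostCenter" not in resource.get("tags", {})
    if not tag_missing or effect == "Disabled":
        return "allowed"
    if effect == "Deny":
        return "blocked at request time"
    return "allowed, flagged non-compliant"  # Audit


print(json.dumps(policy_definition, indent=2))
print(evaluate({"name": "vm1", "tags": {"CostCenter": "cc-104"}}, "Deny"))
print(evaluate({"name": "vm2", "tags": {}}, "Deny"))
print(evaluate({"name": "vm3"}, "Audit"))
```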

Because policies are assigned at the Management Group level, a single assignment can govern thousands of subscriptions, and compliance scans evaluate resources that existed before the assignment as well as new ones. For teams enforcing cost center tagging and resource governance at scale, see the Azure Policy: Enforce CostCenter Tag implementation guide. For understanding policy scope inheritance, the Azure Policy: Management Groups vs Subscriptions deep dive covers the enforcement hierarchy in full.

The evolution of this model in 2026 is worth noting — static guardrails are giving way to AI policy agents that can reason about compliance context rather than just pattern-match against rules. Azure Policy’s API-layer interception model is exactly the integration surface those agents require.

For teams enforcing sovereignty constraints across Azure Landing Zone, the governance BSD jail pattern covers how to apply Unix-style isolation principles to Azure Management Group scopes — a particularly effective model for regulated workloads.

AWS Control Tower: The Shield and the Camera

AWS uses a two-pronged approach that is generally less granular but effective for broad-stroke governance.

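Service Control Policies (SCPs), The Shield: Preventive guardrails that set the maximum permissions available in member accounts. Excellent for broad denials, such as denying access to unapproved regions or denying deletion of CloudTrail trails. However, SCPs are coarse: they never grant permissions, and they can only inspect what is present in the API request context. An SCP can deny RunInstances when a required tag is missing from the request, but it cannot add the tag, correct its value after the fact, or remediate a resource that already exists.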
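Both kinds of SCP guardrail mentioned above can be sketched as policy documents. The region statement follows the pattern AWS documents (a `Deny` with `NotAction` exemptions for global services and an `aws:RequestedRegion` condition), but the exemption list here is deliberately abbreviated and the allowed regions are hypothetical. The tag statement uses the `Null` condition operator on `aws:RequestTag/CostCenter`, which matches only when the tag key is absent from the request. The two helper functions are toy evaluators for these specific statements, not a model of IAM policy evaluation.

```python
import json

ALLOWED_REGIONS = ["eu-west-1", "eu-central-1"]  # hypothetical allow list

region_scp = {
    "Version": "2012-10-17",
    "Statement": [{
        "Sid": "DenyUnapprovedRegions",
        "Effect": "Deny",
        # Abbreviated exemption list for global services; a production list is longer.
        "NotAction": ["iam:*", "organizations:*", "sts:*", "route53:*", "cloudfront:*", "support:*"],
        "Resource": "*",
        "Condition": {"StringNotEquals": {"aws:RequestedRegion": ALLOWED_REGIONS}},
    }],
}

tag_scp = {
    "Version": "2012-10-17",
    "Statement": [{
        "Sid": "DenyRunInstancesWithoutCostCenter",
        "Effect": "Deny",
        "Action": "ec2:RunInstances",
        "Resource": "arn:aws:ec2:*:*:instance/*",
        # "Null": "true" matches when the tag key is absent from the request.
        "Condition": {"Null": {"aws:RequestTag/CostCenter": "true"}},
    }],
}


def region_denied(action: str, region: str) -> bool:
    """Toy check of region_scp: exempt prefixes pass, other actions need an allowed region."""
    stmt = region_scp["Statement"][0]
    exempt = any(action.startswith(pattern.rstrip("*")) for pattern in stmt["NotAction"])
    allowed = stmt["Condition"]["StringNotEquals"]["aws:RequestedRegion"]
    return not exempt and region not in allowed


def run_instances_denied(request_tags: dict) -> bool:
    """Toy check of tag_scp: the SCP can only refuse the call; it cannot add the tag."""
    return "CostCenter" not in request_tags


print(json.dumps(tag_scp, indent=2))
print(region_denied("ec2:RunInstances", "ap-south-1"))
print(region_denied("iam:CreateRole", "us-east-1"))
print(run_instances_denied({"Owner": "team-a"}))
```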

AWS Config — The Camera: Detective guardrails that monitor resources after deployment. If someone deploys a non-compliant S3 bucket, Config detects the drift and alerts — or triggers a Lambda for remediation. There is inherent latency between deployment and detection.
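The detect-then-remediate loop can be sketched as a Lambda-style handler for AWS Config compliance-change events delivered through EventBridge. The field names (`configRuleName`, `resourceType`, `resourceId`, `newEvaluationResult.complianceType`) reflect the "Config Rules Compliance Change" event as we understand it and should be checked against a real event; `remediate` is a hypothetical stub standing in for whatever API call actually fixes the resource. Everything between the resource being created and this handler running is the detection latency the paragraph describes.

```python
def remediate(resource_type: str, resource_id: str) -> str:
    """Hypothetical stub: a real implementation would call the relevant AWS API (e.g. via boto3)."""
    return f"remediation requested for {resource_type} {resource_id}"


def handler(event: dict, context=None) -> dict:
    """React to a Config compliance change; only NON_COMPLIANT results trigger remediation."""
    detail = event.get("detail", {})
    compliance = detail.get("newEvaluationResult", {}).get("complianceType")
    if compliance != "NON_COMPLIANT":
        return {"action": "none", "compliance": compliance}
    return {
        "action": "remediate",
        "rule": detail.get("configRuleName"),
        "result": remediate(detail.get("resourceType", "unknown"), detail.get("resourceId", "unknown")),
    }


# Illustrative event; the bucket and rule names are hypothetical.
sample_event = {
    "source": "aws.config",
    "detail-type": "Config Rules Compliance Change",
    "detail": {
        "configRuleName": "s3-bucket-public-read-prohibited",
        "resourceType": "AWS::S3::Bucket",
        "resourceId": "example-bucket",
        "newEvaluationResult": {"complianceType": "NON_COMPLIANT"},
    },
}
print(handler(sample_event))
```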

The Architect’s Takeaway: Azure Policy prevents bad configurations from ever existing, with high granularity. AWS relies on broad preventive blocks combined with reactive detection for fine-grained issues. Neither approach is wrong — they reflect fundamentally different operational philosophies. The choice between them is a choice about where you want to absorb complexity.

For teams managing infrastructure drift across either platform via Terraform or OpenTofu, the Sovereign Drift Auditor audits terraform plan.json for non-sovereign drift and unencrypted storage — surfacing compliance gaps before they reach production.

Networking Integration

Azure Landing Zone Networking

Networking is deeply integrated into the ALZ architecture. The accelerators support either a traditional Hub-and-Spoke topology or Azure Virtual WAN (vWAN), and natively orchestrate deployment of the hub network, Azure Firewall, VPN Gateways, and ExpressRoute connections. vWAN is the preferred model for large-scale global deployments because it makes transit routing dramatically simpler at enterprise scale.
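Because peered virtual networks cannot have overlapping address spaces, hub-and-spoke designs usually start with an address plan. The sketch below carves a hub and a set of spokes out of one supernet using Python’s standard `ipaddress` module; the supernet, the /22 block size, and the function name are hypothetical choices, and real plans typically also reserve ranges for growth and on-premises routes.

```python
import ipaddress


def plan_hub_and_spokes(supernet: str, spoke_count: int, prefix: int = 22):
    """Allocate the first block to the hub and the next `spoke_count` blocks to spokes."""
    blocks = ipaddress.ip_network(supernet).subnets(new_prefix=prefix)
    hub = next(blocks)
    spokes = []
    for _ in range(spoke_count):
        block = next(blocks, None)
        if block is None:
            raise ValueError(f"{supernet} cannot hold a hub plus {spoke_count} /{prefix} spokes")
        spokes.append(block)
    return hub, spokes


hub, spokes = plan_hub_and_spokes("10.100.0.0/16", spoke_count=3)
print("hub:", hub)
for spoke in spokes:
    print("spoke:", spoke)
```

Allocating sequential, equal-sized blocks keeps the plan predictable; a /16 split into /22 blocks leaves room for 63 spokes before the supernet is exhausted.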

The real-world complexity of Azure Landing Zone networking surfaces at Day-2 — not Day-1. The Azure Landing Zone Hub-and-Spoke refactor guide covers the most common production refactoring scenarios: what breaks when you deviate from the reference topology and how to recover without rebuilding the foundation.

For Azure Private Endpoint architecture within the Landing Zone network boundary, the Azure Private Endpoint Auditor Breakdown and the Azure PE DNS recursive loop and subnet exhaustion fix cover the failure modes that don’t surface until production load hits the DNS resolution chain.
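A basic Day-2 check for those DNS failure modes is to confirm, from inside the spoke network, that a PaaS hostname resolves to a private address. With Private Link, the public name (for example a hypothetical `mystorageacct.blob.core.windows.net`) is CNAMEd to a `privatelink` zone, and inside the VNet that zone should return the private endpoint’s IP; a public answer means the resolution chain is broken. The sketch uses only the standard library and is an assumption-level probe, not a substitute for inspecting the Private DNS zone links themselves.

```python
import ipaddress
import socket


def resolves_privately(addresses: list[str]) -> bool:
    """True only if there is at least one answer and every answer is a private address."""
    return bool(addresses) and all(ipaddress.ip_address(a).is_private for a in addresses)


def check_fqdn(fqdn: str) -> str:
    """Resolve `fqdn` with the local resolver; run this from inside the spoke VNet."""
    try:
        infos = socket.getaddrinfo(fqdn, 443, proto=socket.IPPROTO_TCP)
    except socket.gaierror:
        return "unresolvable"
    addresses = sorted({info[4][0] for info in infos})
    return "private" if resolves_privately(addresses) else "public-or-mixed"


# Offline examples of the classification logic (addresses are illustrative).
print(resolves_privately(["10.20.1.4"]))
print(resolves_privately(["10.20.1.4", "52.239.1.1"]))
```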

AWS Control Tower Networking

Control Tower’s native networking is basic. It provisions VPCs in new accounts based on simple parameters, but it does not orchestrate complex transit networking such as AWS Transit Gateway. Building a true Hub-and-Spoke in AWS requires add-ons: AFT or custom automation to handle Transit Gateway attachments and route tables as new accounts are vended. This works, but it adds a third layer of tooling to maintain alongside Control Tower and Organizations.
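The routing decision that such automation has to encode can be sketched independently of any tooling. This hypothetical example associates each newly vended VPC attachment with a Transit Gateway route table by environment, and decides which route tables learn its CIDR through propagation, with shared services reachable from every segment. The environment labels, route table names, and the `VendedAccount` type are assumptions; in practice the decision would live in AFT customizations or custom automation and be applied through the Transit Gateway APIs.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendedAccount:
    account_id: str
    environment: str  # hypothetical label, e.g. read from an account tag at vending time
    vpc_cidr: str


# Hypothetical segmentation: one TGW route table per environment plus shared services.
ROUTE_TABLES = {"prod": "tgw-rtb-prod", "nonprod": "tgw-rtb-nonprod", "shared": "tgw-rtb-shared"}


def tgw_plan(account: VendedAccount) -> dict:
    """Return which route table the attachment associates with and where its CIDR propagates."""
    env = account.environment
    if env not in ROUTE_TABLES:
        raise ValueError(f"no Transit Gateway route table for environment {env!r}")
    if env == "shared":
        # Shared services must be reachable from every segment.
        propagate_to = sorted(ROUTE_TABLES.values())
    else:
        # Workload VPCs are visible only within their own segment and to shared services.
        propagate_to = sorted({ROUTE_TABLES[env], ROUTE_TABLES["shared"]})
    return {"associate_with": ROUTE_TABLES[env], "propagate_to": propagate_to, "cidr": account.vpc_cidr}


print(tgw_plan(VendedAccount("111111111111", "prod", "10.1.0.0/16")))
print(tgw_plan(VendedAccount("222222222222", "shared", "10.0.0.0/16")))
```

Keeping prod and nonprod in separate route tables means a nonprod VPC never learns a prod CIDR, while both still reach shared services.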

| Feature | Azure Landing Zone (Enterprise-Scale) | AWS Control Tower |
|---|---|---|
| Delivery Mechanism | Prescriptive Architecture (IaC blueprints) | Managed Service (Opinionated Orchestrator) |
| Primary Hierarchy | Management Groups (deep nesting, inheritance focus) | AWS Organizations & OUs (rigid initial structure) |
| Primary Policy Engine | Azure Policy (granular, API-layer interception, real-time deny/remediate) | SCPs (coarse preventive boundaries) + AWS Config (reactive, granular detection) |
| Networking | Native Hub-and-Spoke / vWAN orchestration | Basic VPC provisioning; complex transit requires add-ons (AFT/CfCT) |
| Customization | High: modify the IaC directly | Medium/Low: requires extensions like CfCT or AFT |
| Day-2 Operations | Managed via code (GitOps flow) | Managed via Console or Service Catalog |
| What Breaks First | IaC maturity gap: the architecture assumes ownership your team may not have | Account enrollment rigidity: moving accounts post-enrollment breaks guardrails |

Where This Breaks in Production

Most comparison guides end at feature parity. This section covers what actually fails.

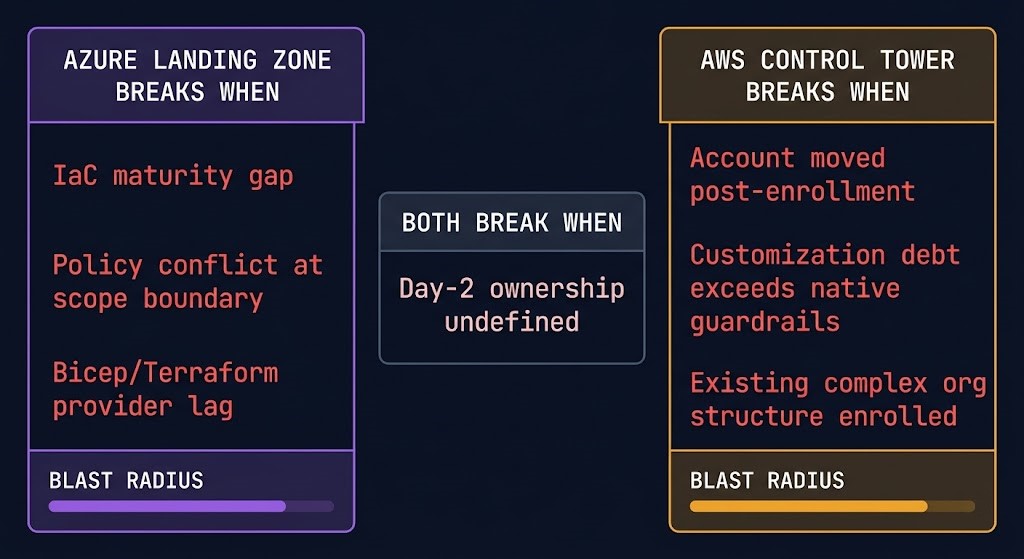

Azure Landing Zone breaks when:

IaC maturity gap — the architecture is a complex set of Bicep/Terraform templates that require active ownership. Teams that deploy the reference implementation without the pipeline discipline to manage it accumulate drift faster than they can remediate it. The architecture assumes GitOps maturity. If that maturity doesn’t exist before deployment, it needs to be built alongside it — which doubles the initial implementation cost.

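Policy conflict at scope boundary: when policies are assigned at multiple Management Group levels, the resolution behavior is non-obvious. Evaluation is cumulative, so a Deny from any applicable assignment takes precedence, but the mechanisms that subtract coverage interact badly with inheritance: exclusions (notScopes) on an inherited assignment, exemptions created at lower scopes, and assignments left in DoNotEnforce mode can quietly remove a subscription from a rule everyone assumes is enforced. These compliance gaps don’t surface until an audit or an incident.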

Bicep/Terraform provider lag — Azure releases new resource types and API versions faster than providers update. Teams managing landing zone IaC via Terraform hit provider gaps mid-sprint. The Terraform Feature Lag Tracker surfaces these delays before they block a deployment.

AWS Control Tower breaks when:

Account moved post-enrollment: this is the most common and most damaging failure pattern. Moving an enrolled account to a different OU outside Control Tower’s managed workflow breaks the guardrail enforcement chain without any visible failure. Control Tower records the move as drift, but until someone acts on it, the account still exists and the guardrails still appear active, while they are no longer enforcing against the new OU’s policy set.
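A lightweight guard against this failure is to reconcile the OU each account was enrolled under against where it sits now. In this sketch, `enrolled` is a hypothetical record a team keeps at vending time, and `current` would in practice be built from the AWS Organizations `ListParents` API; both are plain dictionaries here, with readable placeholder OU IDs, so the logic can be read and tested on its own. It is meant to complement the drift reporting Control Tower provides, not replace it.

```python
from __future__ import annotations


def find_moved_accounts(enrolled: dict[str, str], current: dict[str, str],
                        registered_ous: set[str]) -> list[dict]:
    """Flag accounts whose current OU differs from the OU they were enrolled under."""
    findings = []
    for account_id, enrolled_ou in sorted(enrolled.items()):
        now = current.get(account_id)
        if now is None:
            findings.append({"account": account_id, "issue": "no longer in the organization"})
        elif now != enrolled_ou:
            issue = ("moved between registered OUs" if now in registered_ous
                     else "moved to an OU not registered with Control Tower")
            findings.append({"account": account_id, "issue": issue, "from": enrolled_ou, "to": now})
    return findings


# Hypothetical inventory snapshots.
enrolled = {"111111111111": "ou-workloads-prod", "222222222222": "ou-workloads-dev",
            "333333333333": "ou-workloads-prod"}
current = {"111111111111": "ou-workloads-prod", "222222222222": "ou-legacy",
           "333333333333": "ou-workloads-dev"}
registered = {"ou-workloads-prod", "ou-workloads-dev"}

for finding in find_moved_accounts(enrolled, current, registered):
    print(finding)
```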

Customization debt exceeds native guardrails — Control Tower’s native guardrails cover broad-stroke governance. As organizational requirements mature — granular tag enforcement, workload-specific compliance boundaries, complex remediation logic — the gap between what Control Tower provides natively and what the organization needs widens. Every gap gets filled with CfCT or AFT customization. That customization is technical debt with a compounding interest rate.

Existing complex org structure enrolled — organizations with established AWS Organizations hierarchies that attempt to enroll existing accounts into Control Tower regularly hit enrollment failures. The service assumes greenfield. Brownfield enrollment is documented but fragile.

Both break when Day-2 ownership is undefined. This is the failure mode that no vendor documentation covers. Deploying the landing zone is a project. Operating it is a permanent capability. Teams that treat it as a project handoff — deploy, document, move on — accumulate drift, policy gaps, and orphaned accounts until an audit or incident forces a remediation sprint. Configuration drift enforcement at the infrastructure level is the discipline that prevents this.

Day-2 Operations: Where the Real Costs Live

Initial deployment complexity is a one-time cost. Day-2 operational overhead is permanent — and this is where the organizational DNA question becomes a financial question.

Azure Landing Zone Day-2

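Managed via code. Changes flow through a GitOps pipeline: pull request, review, apply. Azure Policy re-evaluates compliance on every resource change and on a daily scan cycle, and configuration drift surfaces when the pipeline runs scheduled plans that compare the live environment against the code. New subscription provisioning follows the same IaC pipeline as everything else. The architecture rewards teams that treat infrastructure as a software asset with a CI/CD pipeline, and penalizes teams that don’t.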
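One way to turn a scheduled plan into a pipeline gate is to inspect Terraform’s machine-readable plan. The sketch below reads the JSON produced by `terraform show -json` and fails when any entry in `resource_changes` carries an action other than `no-op` or `read`. The choice to fail on every change is an assumption; a team might instead alert only on changes to policy or management group resources.

```python
import json
import sys

IGNORED_ACTIONS = (["no-op"], ["read"])  # unchanged resources and data-source reads


def drifted_resources(plan: dict) -> list:
    """Return addresses of resources the plan would change (create, update, delete, replace)."""
    return [
        rc["address"]
        for rc in plan.get("resource_changes", [])
        if rc.get("change", {}).get("actions") not in IGNORED_ACTIONS
    ]


def main(path: str) -> int:
    with open(path, encoding="utf-8") as fh:
        plan = json.load(fh)
    drift = drifted_resources(plan)
    for address in drift:
        print(f"drift detected: {address}")
    return 1 if drift else 0


if __name__ == "__main__" and len(sys.argv) > 1:
    sys.exit(main(sys.argv[1]))
```

In a scheduled job, run `terraform plan -out=tfplan`, then `terraform show -json tfplan > plan.json`, and pass `plan.json` as the script’s only argument; a non-zero exit code fails the job and flags the drift for review.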

Cloud cost is now an architectural constraint — and Azure Landing Zone’s Management Group hierarchy is the right place to enforce it. Cost center tagging policies applied at the Management Group level cascade automatically to every subscription, making FinOps governance a structural property rather than a manual audit process.
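The structural part of that claim comes down to where the assignment is made. The sketch below builds the resource path and request body for a policy assignment at management group scope, using the documented scope format `/providers/Microsoft.Management/managementGroups/{id}` and the `policyAssignments` resource type. The assignment name, the definition ID, and the `effect` parameter (which assumes a custom definition exposing a parameter of that name) are hypothetical; the body would be sent as a PUT to Azure Resource Manager with a supported `api-version`.

```python
from __future__ import annotations

import json

MAX_MG_ASSIGNMENT_NAME = 24  # Azure limits assignment names at management group scope to 24 characters


def mg_scope(management_group_id: str) -> str:
    """Resource ID of a management group scope."""
    return f"/providers/Microsoft.Management/managementGroups/{management_group_id}"


def cost_center_assignment(management_group_id: str, definition_id: str,
                           name: str = "require-costcenter") -> tuple[str, dict]:
    """Return (resource path, body) for a CostCenter tag assignment at management group scope."""
    if len(name) > MAX_MG_ASSIGNMENT_NAME:
        raise ValueError("assignment name too long for management group scope")
    path = f"{mg_scope(management_group_id)}/providers/Microsoft.Authorization/policyAssignments/{name}"
    body = {
        "properties": {
            "displayName": "Require CostCenter tag",
            "policyDefinitionId": definition_id,
            "enforcementMode": "Default",  # "DoNotEnforce" would evaluate without blocking
            "parameters": {"effect": {"value": "Deny"}},
        }
    }
    return path, body


path, body = cost_center_assignment(
    "landingzones",
    "/providers/Microsoft.Management/managementGroups/contoso"
    "/providers/Microsoft.Authorization/policyDefinitions/require-costcenter-tag",
)
print(path)
print(json.dumps(body, indent=2))
```

Because the assignment lives on the management group rather than on any subscription, every subscription later created under `landingzones` is covered with no further action.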

For teams using Terraform to manage the full lifecycle, the Terraform state model — understanding infrastructure as state rather than code — is the mental shift that makes Landing Zone IaC maintainable at enterprise scale.

AWS Control Tower Day-2

Managed primarily via the console or Service Catalog, with drift detection provided by the service itself. Lower IaC maturity is required to get started, but customization debt accumulates quickly as organizational complexity grows beyond Control Tower’s native guardrails.

The operational cost that most teams underestimate is account vending at scale. Account Factory for Terraform handles this — but AFT is its own operational surface, with its own pipeline, its own state management, and its own failure modes. The cloud cost analysis for 2026 covers where AWS operational overhead quietly accumulates in ways that don’t appear on infrastructure invoices.
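Part of that operational surface is catching bad account requests before the AFT pipeline runs. The sketch below pre-validates a request using the key names of AFT’s `control_tower_parameters` block (`AccountEmail`, `AccountName`, `ManagedOrganizationalUnit`, `SSOUserEmail`, `SSOUserFirstName`, `SSOUserLastName`); the validation rules, the registered-OU set, and the used-email set are hypothetical inputs a team would source from its own inventory, and the key list should be checked against the AFT version in use.

```python
from __future__ import annotations

import re

REQUIRED_KEYS = {
    "AccountEmail", "AccountName", "ManagedOrganizationalUnit",
    "SSOUserEmail", "SSOUserFirstName", "SSOUserLastName",
}
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")  # deliberately loose format check


def validate_request(params: dict, registered_ous: set[str], used_emails: set[str]) -> list[str]:
    """Return a list of problems (empty means the request looks safe); used_emails must be lowercase."""
    errors = [f"missing {key}" for key in sorted(REQUIRED_KEYS - set(params))]
    email = params.get("AccountEmail", "").strip().lower()
    if email and not EMAIL_PATTERN.match(email):
        errors.append("AccountEmail is not a valid address")
    if email and email in used_emails:
        errors.append("AccountEmail is already used by another account")
    ou = params.get("ManagedOrganizationalUnit")
    if ou and ou not in registered_ous:
        errors.append(f"OU {ou!r} is not registered with Control Tower")
    return errors


# Hypothetical request values.
request = {
    "AccountEmail": "payments-prod@example.com",
    "AccountName": "payments-prod",
    "ManagedOrganizationalUnit": "Workloads",
    "SSOUserEmail": "cloud-admins@example.com",
    "SSOUserFirstName": "Cloud",
    "SSOUserLastName": "Admins",
}
print(validate_request(request, registered_ous={"Workloads"}, used_emails=set()))
print(validate_request({"AccountName": "x", "ManagedOrganizationalUnit": "Legacy"},
                       registered_ous={"Workloads"}, used_emails=set()))
```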

For a full framework covering IaC governance, drift management, and policy-as-code across both platforms, see the Modern Infrastructure & IaC Learning Path.

Zero-Trust Considerations for Azure Landing Zone

If you’ve chosen Azure Landing Zone as your foundation, the governance architecture you’ve just deployed is the control plane for everything that runs on top of it — including your security posture. A Landing Zone with well-designed Management Groups and Azure Policy is a strong starting point. It is not a finished zero-trust architecture.

The gaps that most Azure Landing Zone implementations leave open: identity plane design across Entra ID and workload identities, private endpoint coverage and DNS resolution integrity, network segmentation between Landing Zones, and policy enforcement on workload-level resources that aren’t covered by the baseline ALZ policies.
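Private endpoint coverage is one of the easier gaps to measure. The sketch below scans an exported inventory of ARM resource JSON and flags resources that still allow public network access or have no approved private endpoint connection. The property names (`publicNetworkAccess`, `privateEndpointConnections`, `privateLinkServiceConnectionState.status`) are those used by resources such as storage accounts, but not every resource type exposes them; treating a missing `publicNetworkAccess` value as open is a deliberately conservative assumption, and the resource IDs are hypothetical.

```python
from __future__ import annotations


def private_endpoint_gaps(inventory: list[dict]) -> dict[str, list[str]]:
    """Map resource ID to the list of network-exposure gaps found in its properties."""
    gaps: dict[str, list[str]] = {}
    for resource in inventory:
        props = resource.get("properties", {})
        issues = []
        # Conservative assumption: a missing value is treated as public access enabled.
        if props.get("publicNetworkAccess", "Enabled") != "Disabled":
            issues.append("public network access not disabled")
        approved = [
            conn for conn in props.get("privateEndpointConnections", [])
            if conn.get("properties", {}).get("privateLinkServiceConnectionState", {}).get("status") == "Approved"
        ]
        if not approved:
            issues.append("no approved private endpoint")
        if issues:
            gaps[resource["id"]] = issues
    return gaps


PREFIX = "/subscriptions/0000/resourceGroups/rg-data/providers/Microsoft.Storage/storageAccounts/"
approved_pe = {"properties": {"privateLinkServiceConnectionState": {"status": "Approved"}}}
pending_pe = {"properties": {"privateLinkServiceConnectionState": {"status": "Pending"}}}
inventory = [
    {"id": PREFIX + "stlocked", "properties": {"publicNetworkAccess": "Disabled", "privateEndpointConnections": [approved_pe]}},
    {"id": PREFIX + "stopen", "properties": {"publicNetworkAccess": "Enabled", "privateEndpointConnections": []}},
    {"id": PREFIX + "stpending", "properties": {"publicNetworkAccess": "Disabled", "privateEndpointConnections": [pending_pe]}},
]

for resource_id, issues in private_endpoint_gaps(inventory).items():
    print(resource_id.rsplit("/", 1)[-1], issues)
```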

Architect’s Verdict

The Azure Landing Zone vs AWS Control Tower decision is not a technical comparison. It is an organizational decision about where you want to carry complexity — upfront in architecture, or over time in operations.

Azure Landing Zone rewards teams with IaC maturity and the discipline to operate governance as code. The upfront cost is higher, but the model scales with organizational complexity without introducing a second layer of abstraction or customization debt.

AWS Control Tower optimizes for speed — a governed baseline in days, not sprints. That speed comes with constraints. As requirements evolve, those constraints are bypassed with extensions, and those extensions become the operational surface you inherit.

The failure modes are predictable. Azure Landing Zone fails when teams underestimate the operational discipline required to own it. Control Tower fails when organizational complexity exceeds what the managed service model was designed to handle.

The right choice is not which platform is more capable. It is which model your team can sustain under real-world conditions — after Day 1, when governance becomes an ongoing responsibility instead of a deployment milestone.

To understand how sovereign and compliance constraints further shape this decision, see the Sovereign Infrastructure Strategy Guide and the Sovereign Cloud vs Public Cloud compliance analysis.


Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.
