Ingress-NGINX Deprecation: What to Do Next (Four Paths, Four Failure Modes)

On March 24, 2026, the ingress-nginx deprecation became official — the repository went read-only. No more patches. No more CVE fixes. No more releases of any kind.

By widely cited estimates, roughly half of production Kubernetes clusters were routing traffic through it.

The coverage that followed was immediate and mostly unhelpful — migration guides, controller comparisons, annotation checklists. All of it assumes you’ve already made the architectural decision. Most teams haven’t. They’re still looking at four realistic paths, each with a different cost structure and a different failure identity, trying to figure out which one to hand to their leadership team on Monday morning.

We just watched this play out with VMware. Forced change exposes architectural assumptions most teams didn’t know they had. The teams that fared worst weren’t the ones who moved slowly — they were the ones who picked a direction before they understood how their choice would fail.

That’s the conversation this post is about. Not which path to pick. How each path breaks.

The Annotation Complexity Trap Comes First

Before the four paths — one diagnostic question that determines how hard any of this is.

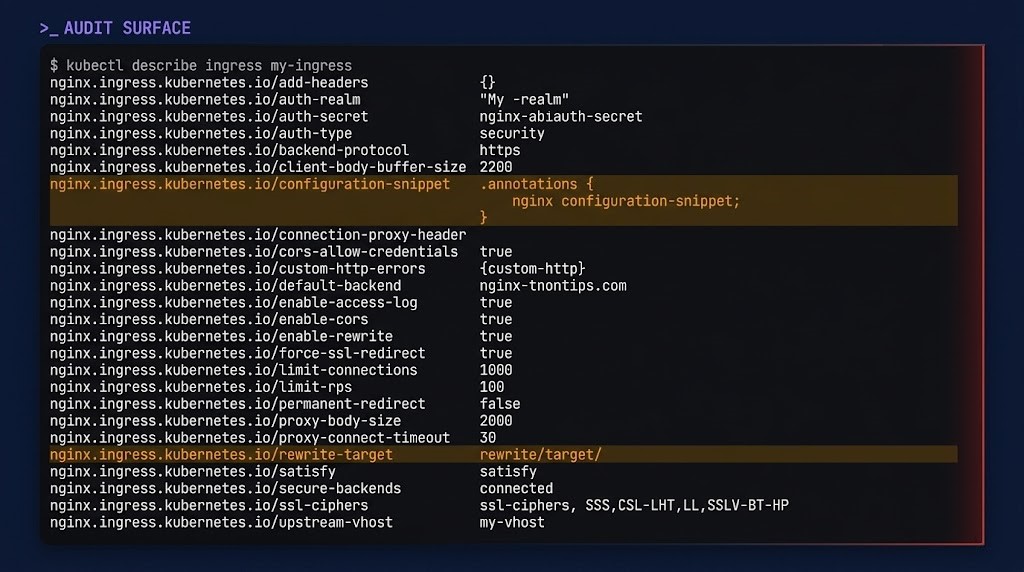

Open your ingress manifests and count the annotations. Not the objects. The annotations per object.

Teams running five or fewer annotations per ingress resource have a straightforward migration surface. Teams running twenty, thirty, or more — with nginx.ingress.kubernetes.io/configuration-snippet blocks doing custom Lua and rewrite-target gymnastics accumulated over three years — are looking at a completely different problem. The 502 patterns that annotation interactions produce don’t disappear when you swap the controller. They surface differently, in different layers, at the worst possible moment.

Audit your annotation surface first. That number shapes which path is realistic for your environment.
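A minimal audit sketch, assuming you export your Ingress objects with `kubectl get ingress -A -o json` and pass the parsed JSON to `audit()`. The tier thresholds (five or fewer, twenty or more) come from the paragraph above. The `snippet` flag is a simple substring match on annotation keys and is an assumption, not an exhaustive detector. The ignored key is the bookkeeping annotation that `kubectl apply` adds, which would otherwise inflate every count by one.

```python
# Thresholds from the text: <=5 is a straightforward surface, 20+ is a different problem.
LOW_MAX = 5
HIGH_MIN = 20
# Added by `kubectl apply`; not routing behavior, so it is excluded from the count.
IGNORED = {"kubectl.kubernetes.io/last-applied-configuration"}


def tier(count: int) -> str:
    """Bucket an annotation count into a rough migration-complexity tier."""
    if count <= LOW_MAX:
        return "low"
    if count >= HIGH_MIN:
        return "high"
    return "medium"


def audit(ingress_list: dict) -> list[dict]:
    """Return one row per Ingress: namespace/name, annotation count, tier, snippet use."""
    rows = []
    for item in ingress_list.get("items", []):
        meta = item.get("metadata", {})
        annotations = {
            k: v for k, v in (meta.get("annotations") or {}).items() if k not in IGNORED
        }
        rows.append({
            "ingress": f"{meta.get('namespace', 'default')}/{meta.get('name', '?')}",
            "annotations": len(annotations),
            "tier": tier(len(annotations)),
            # Snippet annotations inject raw NGINX config and rarely map 1:1 to other controllers.
            "uses_snippet": any("snippet" in key for key in annotations),
        })
    # Heaviest annotation surfaces first.
    return sorted(rows, key=lambda r: r["annotations"], reverse=True)
```

Feed it with `audit(json.load(sys.stdin))` behind a `kubectl get ingress -A -o json |` pipe; the top of the sorted list is where Path 2 failure modes are most likely to hide.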

Decision Table

| Path | Control | Complexity | Risk Profile | Breaks When |
|---|---|---|---|---|
| 1. Stay with NGINX (vendor) | High | Low | Vendor dependency | Patching timeline slips or contract ends |
| 2. New Ingress controller | Medium-High | Medium | Annotation drift | Behavior gaps surface under production load |
| 3. Gateway API | High | High short-term | Tooling maturity | Adjacent stack isn’t Gateway API-ready yet |
| 4. Exit ingress layer | Low-Medium | High | Operational model shift | Kubernetes-native control requirements return |

The Security and Compliance Reality

CVE exposure from running unpatched ingress infrastructure is not theoretical. IngressNightmare — an unauthenticated RCE via exposed admission webhooks — hit in early 2025. Four additional HIGH-severity CVEs dropped simultaneously in February 2026. With the repository now archived, the next one stays open indefinitely.

For teams operating under SOC 2, PCI-DSS, ISO 27001, or HIPAA, EOL software in the L7 data path should be expected to surface as an audit finding, and some compliance teams are already blocking production promotions over it. That forcing function makes Path 1 (vendor fork) the shortest bridge and Path 3 or 4 the architectural destination.
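An audit starts with knowing where the EOL controller actually runs. A minimal inventory sketch, assuming pod JSON from `kubectl get pods -A -o json` and controllers pulled from the upstream image path `registry.k8s.io/ingress-nginx/controller`. Mirrored or vendor-rebuilt images live under other paths, so `IMAGE_MARKERS` is an assumption you would extend for your registries.

```python
# Upstream controller image path; add prefixes for any private mirrors (assumption).
IMAGE_MARKERS = ("registry.k8s.io/ingress-nginx/controller",)


def image_tag(image: str) -> str:
    """Extract the tag from an image reference, ignoring any @sha256 digest."""
    ref = image.split("@", 1)[0]
    # Only a colon after the last slash is a tag separator; registry ports use colons too.
    last = ref.rsplit("/", 1)[-1]
    return last.split(":", 1)[1] if ":" in last else "untagged"


def find_ingress_nginx(pod_list: dict) -> list[tuple[str, str, str]]:
    """Return (namespace/pod, container, tag) for every container running the controller image."""
    hits = []
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        where = f"{meta.get('namespace', 'default')}/{meta.get('name', '?')}"
        for container in pod.get("spec", {}).get("containers", []):
            image = container.get("image", "")
            if any(image.startswith(marker) for marker in IMAGE_MARKERS):
                hits.append((where, container.get("name", "?"), image_tag(image)))
    return hits
```

The output is a short evidence list (where, which container, which version) that maps directly onto the finding an auditor would raise.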

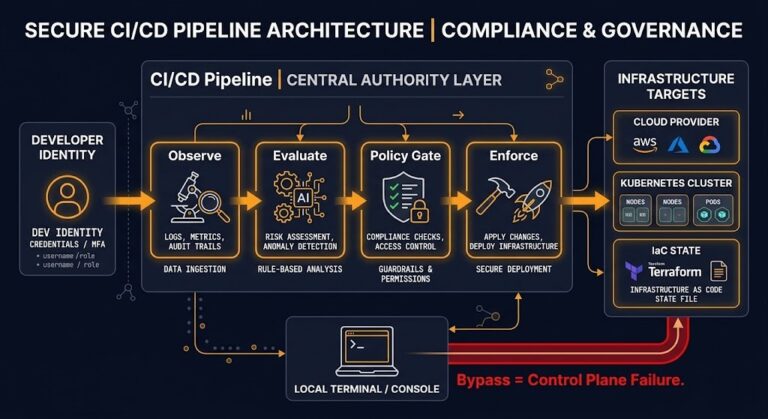

The full security controls model — including how ingress-layer policy connects to supply chain and runtime controls — is in the Container Security Architecture guide.

What This Is Actually About

The ingress-nginx deprecation is a control plane decision, not a controller swap. The Gateway API architectural shift (role separation between platform and application teams, HTTPRoute ownership at the namespace level, policy enforcement without shared annotation state) is a different operating model, not a drop-in replacement for Ingress.
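To make the role separation concrete, here is a deliberately simplified model, for illustration only. In Gateway API, the platform team owns the Gateway and decides per listener which namespaces may attach routes via `allowedRoutes.namespaces.from` (`All`, `Same`, or `Selector`); application teams own HTTPRoutes in their own namespaces. The classes and the label below are hypothetical, and the sketch models only the namespace check with `matchLabels`, ignoring `matchExpressions`, route kinds, hostnames, and section names.

```python
from dataclasses import dataclass, field


@dataclass
class Listener:
    """Platform-owned Gateway listener (hypothetical, simplified model)."""
    name: str
    allowed_from: str = "Same"  # Gateway API default for allowedRoutes.namespaces.from
    selector_labels: dict = field(default_factory=dict)  # matchLabels, used only for "Selector"


@dataclass
class Gateway:
    """Platform-owned Gateway living in an infrastructure namespace."""
    name: str
    namespace: str
    listeners: list = field(default_factory=list)


def route_can_attach(gw: Gateway, listener: Listener, route_ns: str,
                     ns_labels: dict) -> bool:
    """Would an app-owned HTTPRoute in route_ns be allowed to attach to this listener?

    ns_labels maps each namespace name to its labels.
    """
    if listener.allowed_from == "All":
        return True
    if listener.allowed_from == "Same":
        return route_ns == gw.namespace
    if listener.allowed_from == "Selector":
        labels = ns_labels.get(route_ns, {})
        # An empty matchLabels selects every namespace, as with Kubernetes label selectors.
        return all(labels.get(k) == v for k, v in listener.selector_labels.items())
    raise ValueError(f"unsupported allowedRoutes.namespaces.from: {listener.allowed_from!r}")
```

With a listener restricted to namespaces labeled `gateway-access: true`, a route in a labeled `shop` namespace attaches and one in an unlabeled `sandbox` namespace does not. The decision lives on the platform-owned object rather than in per-route annotations, which is the shift in ownership the paragraph above describes.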

Teams that treat this as a like-for-like replacement will get Path 2 to work in staging and discover Path 2’s failure modes in production six months later.

The right question before selecting a path: what does your routing control plane need to look like in 24 months? Pick the path that bridges to that state, not the one that resolves the immediate alert.

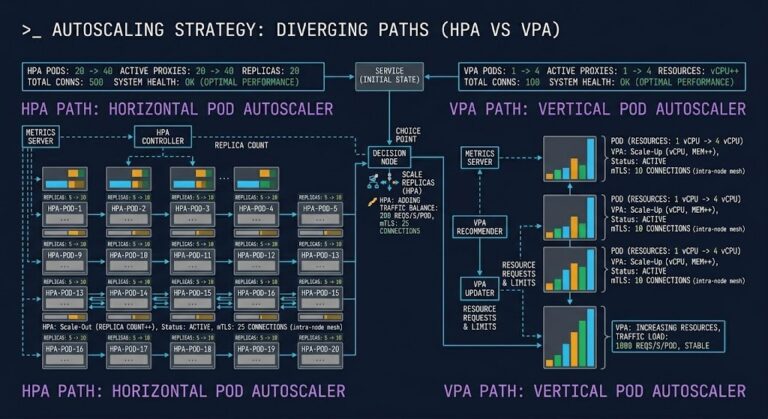

For teams evaluating service mesh as the exit path, the east-west vs north-south distinction matters: a mesh primarily governs east-west (service-to-service) traffic, while ingress handles north-south traffic entering the cluster. Before adding sidecar overhead to the equation, it is also worth understanding the eBPF-based alternatives covered in the Cilium vs Calico comparison.

The Platform Engineering Architecture layer is where this decision lands operationally — the ingress controller is a platform team concern, and Gateway API’s role separation model aligns directly with the IDP control plane model.

Architect’s Verdict

Pick your path based on how it fails — not how it’s marketed. Every option here works in a demo. Each one has a specific production failure signature, and that failure signature is what should drive the decision.

Path 1 buys time with known behavior. Path 2 is fast if your annotation surface is clean. Path 3 is the right destination for most teams, arrived at on the right timeline. Path 4 makes sense if the mesh investment is already on the roadmap.

The teams that will execute this well aren’t the ones who move fastest. They’re the ones who audit their annotation complexity first, map their 24-month control plane model, and select the path whose failure mode they can manage — not the one that looks cleanest in a migration guide.

This is Part 0 of the Rack2Cloud Ingress-NGINX Series: The Decision Layer. Each path gets its own implementation deep-dive next, starting with Part 1, which covers the Kubernetes-native paths (Gateway API and alternative ingress controllers) in depth.

Frequently Asked Questions

Q: What does the ingress-nginx deprecation actually mean for running clusters?

A: Existing deployments continue to function — the repository is archived, not deleted. What ends is all patch activity. Any CVE discovered after March 24, 2026 in the kubernetes/ingress-nginx project will remain unpatched permanently. Running it in production is a risk acceptance decision, not a technical blocker.

Q: Does the Ingress resource type go away too?

A: No. The Kubernetes Ingress API itself remains GA and supported, although it is feature-frozen: new routing capabilities are being added to Gateway API instead. What has been retired is the community-maintained ingress-nginx controller implementation. Third-party controllers (Traefik, HAProxy, Kong, F5 NGINX) still read and serve Ingress resources.
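Because the Ingress API stays, a Path 2 move is largely a question of which controller claims each object. A sketch for listing the Ingresses ingress-nginx currently serves, assuming the default class name `nginx` (adjust `NGINX_CLASSES` if you installed it under another name). It checks `spec.ingressClassName` first and falls back to the deprecated `kubernetes.io/ingress.class` annotation. Objects with neither are reported separately, since who serves them depends on your default IngressClass and, for ingress-nginx, its `--watch-ingress-without-class` setting.

```python
NGINX_CLASSES = {"nginx"}  # assumption: default ingress-nginx IngressClass name
LEGACY_ANNOTATION = "kubernetes.io/ingress.class"  # deprecated, still common in older manifests


def classify(ingress_list: dict) -> dict[str, list[str]]:
    """Split Ingress objects into nginx-bound, other-class, and unclassed buckets."""
    buckets = {"nginx": [], "other": [], "unclassed": []}
    for item in ingress_list.get("items", []):
        meta = item.get("metadata", {})
        key = f"{meta.get('namespace', 'default')}/{meta.get('name', '?')}"
        # Check the spec field first, then fall back to the legacy annotation.
        cls = (item.get("spec", {}).get("ingressClassName")
               or (meta.get("annotations") or {}).get(LEGACY_ANNOTATION))
        if not cls:
            buckets["unclassed"].append(key)
        elif cls in NGINX_CLASSES:
            buckets["nginx"].append(key)
        else:
            buckets["other"].append(key)
    return buckets
```

The `unclassed` bucket is the one to watch: those objects can silently change owners when a new controller becomes the default class.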

Q: How long does a Gateway API migration actually take?

A: Depends entirely on annotation complexity. Low-annotation environments with ingress2gateway 1.0 can migrate in days. Enterprise clusters with hundreds of services and custom annotation logic are 3-6 month projects with proper rollback planning. Audit annotation surface first — that number defines the timeline more accurately than any vendor estimate.

Q: What is the compliance deadline?

A: There is no single universal deadline. SOC 2 and PCI-DSS auditors will flag EOL software in the L7 path in their next audit cycle. AKS Application Routing extends vendor support until November 2026 for teams on that managed path. Define your audit schedule and work backward from there.
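Working backward is plain calendar arithmetic. A sketch, assuming you know your next audit date and use the 3-6 month range from the previous answer plus a buffer you choose; the dates used here are placeholders, not real deadlines, and the function names are hypothetical.

```python
import calendar
from datetime import date


def months_before(d: date, months: int) -> date:
    """Shift a date back by whole calendar months, clamping to the target month's last day."""
    total = d.year * 12 + (d.month - 1) - months
    year, month0 = divmod(total, 12)
    month = month0 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def latest_start(audit_date: date, migration_months: int, buffer_months: int = 1) -> date:
    """Latest date a migration can start and still finish, with buffer, before the audit."""
    return months_before(audit_date, migration_months + buffer_months)
```

For a placeholder audit on November 30, 2026, a six-month migration with a one-month buffer has to start by April 30, 2026; a three-month migration with no buffer could start as late as August 30, 2026.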

Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.
