The Terraform Wrapper Tax: Why Multi-Cloud Module Abstraction Fails in Production

Terraform multi-cloud modules were supposed to be the answer. Write once, deploy anywhere — a single module "compute" that could target AWS, Azure, or GCP by flipping a variable. Abstract the provider. Commoditize the infrastructure. In 2018, that vision was compelling enough that entire platform teams built their IaC strategy around it.

By the time those teams hit Day 2, the abstraction had become the problem. The promise of provider-agnostic Terraform multi-cloud modules doesn’t survive contact with the reality that AWS, Azure, and GCP are not interchangeable. They never were. And the engineering tax of pretending otherwise compounds every time a new feature ships, a new engineer joins the team, or a production incident requires fast debugging under pressure.

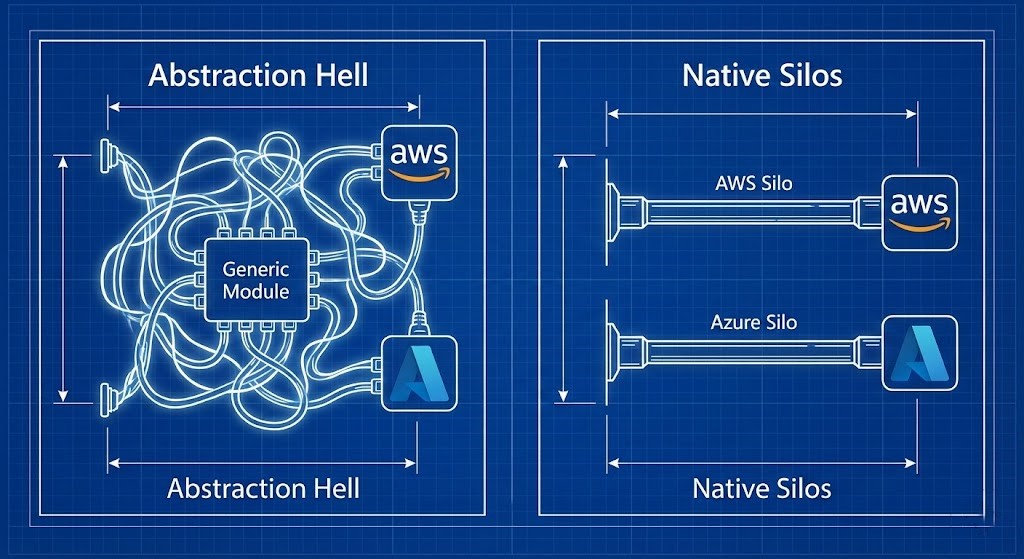

The Lowest Common Denominator Problem

The structural flaw in universal module design is that it forces you to choose between two bad outcomes the moment the providers diverge — and they always diverge.

Take network security as an example. AWS Security Groups are stateful, allow-only rule sets attached at the instance network interface level. Azure Network Security Groups are also stateful, but they evaluate priority-ordered allow and deny rules and can be attached to a subnet or to an individual network interface. These are not implementation details. They are different security models. If you write a single module "firewall" to handle both, you face the same choice every time:

- The LCD Trap: Expose only the features that exist identically in both clouds. You get a lowest-common-denominator security model that can’t use advanced capabilities on either platform.

- The Complexity Trap: Write dynamic blocks and ternary operators (count = var.cloud == "aws" ? 1 : 0) to translate generic inputs into provider-specific API calls. You haven’t simplified anything. You’ve built a brittle, bespoke translation layer that only your team understands and that breaks silently when provider APIs change.
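To make the Complexity Trap concrete, here is a minimal sketch of what the inside of a generic "firewall" module tends to look like. Every variable name here (cloud, name, allowed_cidr, aws_vpc_id, azure_location, azure_resource_group) is hypothetical and chosen only for illustration; the resource types and their arguments are the standard ones from the AWS and AzureRM providers.

```hcl
# Hypothetical inputs for a generic "firewall" wrapper module.
variable "cloud" {
  type = string # expected to be "aws" or "azure"
}

variable "name" {
  type = string
}

variable "allowed_cidr" {
  type = string
}

# Provider-specific inputs leak into the "generic" interface anyway.
variable "aws_vpc_id" {
  type    = string
  default = null
}

variable "azure_location" {
  type    = string
  default = null
}

variable "azure_resource_group" {
  type    = string
  default = null
}

# Created only when cloud == "aws".
resource "aws_security_group" "this" {
  count  = var.cloud == "aws" ? 1 : 0
  name   = var.name
  vpc_id = var.aws_vpc_id

  ingress {
    from_port   = 443
    to_port     = 443
    protocol    = "tcp"
    cidr_blocks = [var.allowed_cidr]
  }
}

# Created only when cloud == "azure".
resource "azurerm_network_security_group" "this" {
  count               = var.cloud == "azure" ? 1 : 0
  name                = var.name
  location            = var.azure_location
  resource_group_name = var.azure_resource_group

  security_rule {
    name                       = "allow-https"
    priority                   = 100     # no equivalent field on the AWS side
    direction                  = "Inbound"
    access                     = "Allow" # AWS security group rules have no access field
    protocol                   = "Tcp"
    source_port_range          = "*"
    destination_port_range     = "443"
    source_address_prefix      = var.allowed_cidr
    destination_address_prefix = "*"
  }
}
```

Even in this small sketch, the Azure rule needs a priority and an access value that the generic inputs cannot express, so the module author has to hard-code them or add more Azure-only variables.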

This is what I call the Wrapper Tax. It’s the accumulated engineering cost of maintaining an abstraction layer that was never equipped to handle the divergence it was built to hide. As the broader analysis of hybrid and multi-cloud engineering realities makes clear, the operational friction of managing multiple clouds is already significant from data gravity and identity sprawl alone — the Wrapper Tax compounds that friction at the IaC layer.

What the Wrapper Looks Like vs. Native Terraform Multi-Cloud Modules

The difference isn’t academic. Here’s what it looks like in a real codebase.

The opaque wrapper:

    # What does this actually deploy? You have to open the module source to find out.
    module "prod_web_server" {
      source = "./modules/generic_compute_v3"

      cloud       = "azure"
      server_name = "web-01"
      flavor      = "large"
      disk_tier   = "fast"
    }

A new engineer needs to know what disk tier is attached to a production VM. What is “fast” in Azure? Premium SSD v1 or v2? To answer that question they need to open the module, unpick the variable maps, trace the conditional logic, and hope the internal documentation is current. That’s the Wrapper Tax in action, paid in engineering time, every time.
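For a sense of what "unpicking the variable maps" involves, the following is a hypothetical sketch of the lookup logic such a wrapper might contain. The map contents and the names disk_tier_map and resolved_disk_type are assumptions made for illustration, not the contents of any real module.

```hcl
variable "cloud" {
  type = string # "aws" or "azure"
}

variable "disk_tier" {
  type = string # "standard" or "fast"
}

locals {
  # Hypothetical translation table from generic tiers to provider disk types.
  disk_tier_map = {
    aws = {
      standard = "gp2"
      fast     = "gp3"
    }
    azure = {
      standard = "StandardSSD_LRS"
      fast     = "Premium_LRS"
    }
  }

  # The value an engineer actually cares about is only visible after this lookup.
  resolved_disk_type = local.disk_tier_map[var.cloud][var.disk_tier]
}

output "resolved_disk_type" {
  value = local.resolved_disk_type
}
```

With cloud = "azure" and disk_tier = "fast", this particular map resolves to Premium_LRS, but nothing at the call site tells you that. The answer lives in a table the caller never sees.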

The native approach — verbose, explicit, side-by-side:

    # AWS Implementation
    resource "aws_instance" "web_server" {
      ami           = data.aws_ami.ubuntu.id
      instance_type = "t3.large"

      root_block_device {
        volume_type = "gp3"
        iops        = 3000
      }
    }

    # Azure Implementation — side-by-side in the same state file
    # Required arguments such as admin_username, network_interface_ids, and
    # source_image_reference are omitted here for brevity.
    resource "azurerm_linux_virtual_machine" "web_server" {
      name                = "web-01"
      resource_group_name = azurerm_resource_group.rg.name
      location            = azurerm_resource_group.rg.location
      size                = "Standard_D4s_v5"

      os_disk {
        caching              = "ReadWrite"
        storage_account_type = "Premium_LRS"
      }
    }

More lines of code, but far easier to read, debug, and operate. The instance type, disk tier, and storage account type are explicit and publicly documented. No internal Rosetta Stone required.

The Day 2 Cost: Feature Lag

The most expensive part of the Wrapper Tax doesn’t show up in your first sprint. It shows up eighteen months later, when AWS ships a new EBS volume type that’s cheaper and faster (gp3 launched at up to 20% lower price per GB than gp2) and your generic compute module can’t use it.

With native resources, the change is one line: volume_type = "gp3". Plan, apply, done in ten minutes. With a generic wrapper, you file a ticket with the platform team to update the generic_compute module, wait for them to test that the change doesn’t break Azure deployments, version the module, update your reference, and then apply — weeks later, if you’re lucky.
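As a minimal illustration of that one-line change, assume a native aws_instance that was originally provisioned on gp2. The gp2 starting point and the ami_id variable are assumptions made for this sketch.

```hcl
variable "ami_id" {
  type        = string
  description = "Hypothetical input: the AMI to launch."
}

resource "aws_instance" "web_server" {
  ami           = var.ami_id
  instance_type = "t3.large"

  root_block_device {
    volume_type = "gp3" # previously "gp2"; this is the only line that changes
  }
}
```

Nothing in this diff references Azure, so there is no Azure code path to retest before applying it.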

Generic Terraform multi-cloud modules actively prevent you from leveraging the innovation of the providers you’re paying for. The Terraform Feature Lag Tracker was built specifically to surface this problem — provider feature gaps that your automation can’t natively support yet, before they become production blockers. The lag is real, it’s measurable, and wrapper architectures make it structurally worse.

Feature lag also compounds into configuration drift. When engineers work around wrapper limitations by making manual console changes — because the module can’t expose the feature they need — you get infrastructure state that diverges from your Terraform definitions. Configuration drift is an architectural problem, not an operational one, and wrapper-based IaC is one of its primary generators.

The Exit Cost Dimension

Beyond the LCD Trap, the Complexity Trap, and feature lag, there’s a fourth cost that rarely gets modeled when teams adopt universal module patterns: exit cost. The assumption behind Terraform multi-cloud modules is that provider portability reduces lock-in. In practice, the abstraction layer creates its own lock-in: to the module architecture itself, to the internal team that maintains it, and to the tribal knowledge required to operate it.

When the platform team leaves, or the module architecture diverges from provider APIs after a major release, or you need to migrate workloads under time pressure, the wrapper doesn’t help you move faster. It adds another layer to unpick before you can touch the actual infrastructure. Exit cost is a first-class architectural metric — and it applies to your IaC layer as much as it applies to your cloud provider choice. The teams that model it at design time avoid the unwinding cost entirely. The teams that don’t discover it during incidents.

Architect’s Verdict

If your organization runs multiple clouds, the engineering reality is that you have multiple distinct infrastructure stacks. Your Terraform multi-cloud modules should reflect that reality, not abstract it away. Verbose, native, side-by-side resource blocks aren’t a failure of elegance — they’re the correct architectural response to providers that were never designed to be interchangeable.

- ✓ Write native, provider-specific resource blocks — accept that AWS and Azure require different code and treat that as correct, not a problem to solve

- ✓ Structure your repository with explicit provider directories (aws/, azure/) — separation is clarity, not duplication

- ✓ Use the Terraform Feature Lag Tracker before adopting new provider features — know where your automation has gaps before production requires them

- ✓ Model exit cost at IaC design time — the wrapper architecture you choose today determines how fast you can change providers tomorrow

- ✓ Treat verbose code as an operational asset — explicit resource definitions reduce onboarding time and incident response time equally

- ✗ Build generic wrapper modules that abstract provider differences — you’re not reducing complexity, you’re moving it into a layer only your team understands

- ✗ Use ternary operators and dynamic blocks to translate generic inputs into provider calls — that’s a bespoke API translation layer, not IaC

- ✗ Assume abstraction reduces lock-in — wrapper architectures create their own lock-in to the module and the team that maintains it

- ✗ Let module update lag block new provider features — if you can’t adopt a cost-saving feature in ten minutes, your abstraction layer is costing you money

- ✗ Conflate code conciseness with operational simplicity — a 10-line wrapper that requires tribal knowledge to debug is not simpler than 40 lines of explicit native resources
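The provider-directory recommendation above can be sketched as two small configurations, one per directory. The file paths, version constraints, and region below are assumptions made for illustration; adjust them to your own environment.

```hcl
# aws/versions.tf (hypothetical path)
terraform {
  required_providers {
    aws = {
      source  = "hashicorp/aws"
      version = "~> 5.0" # assumed constraint
    }
  }
}

provider "aws" {
  region = "us-east-1" # assumed region
}
```

```hcl
# azure/versions.tf (hypothetical path)
terraform {
  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 3.0" # assumed constraint
    }
  }
}

provider "azurerm" {
  features {} # required by the AzureRM provider, even when empty
}
```

If each directory is run as its own root module, each also gets its own state, which is a design choice to weigh against keeping both providers in a single configuration.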

Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.
