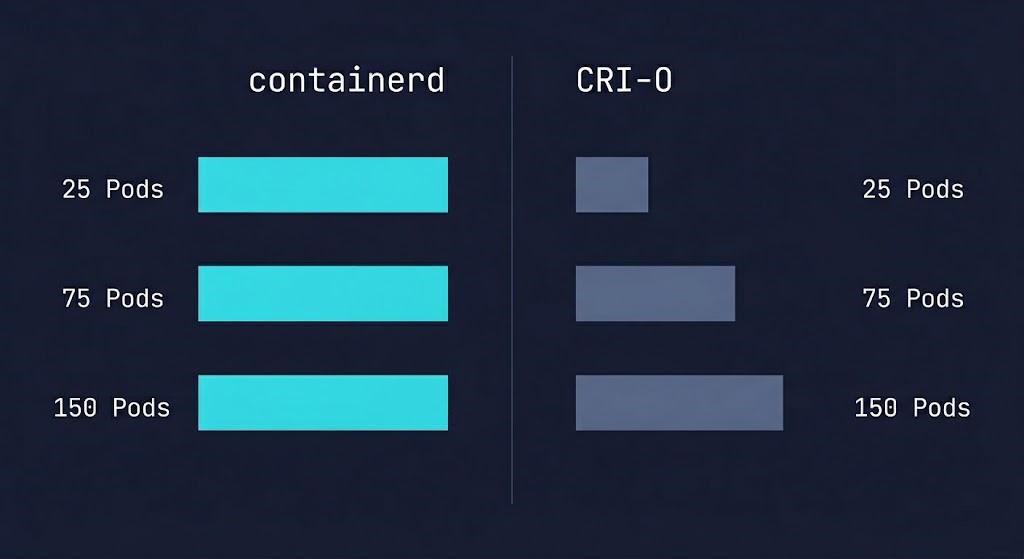

containerd vs CRI-O: Memory Overhead at Scale (Real Node Density Limits)

When evaluating containerd vs CRI-O, the decision rarely comes down to features — it comes down to what happens at node density limits.

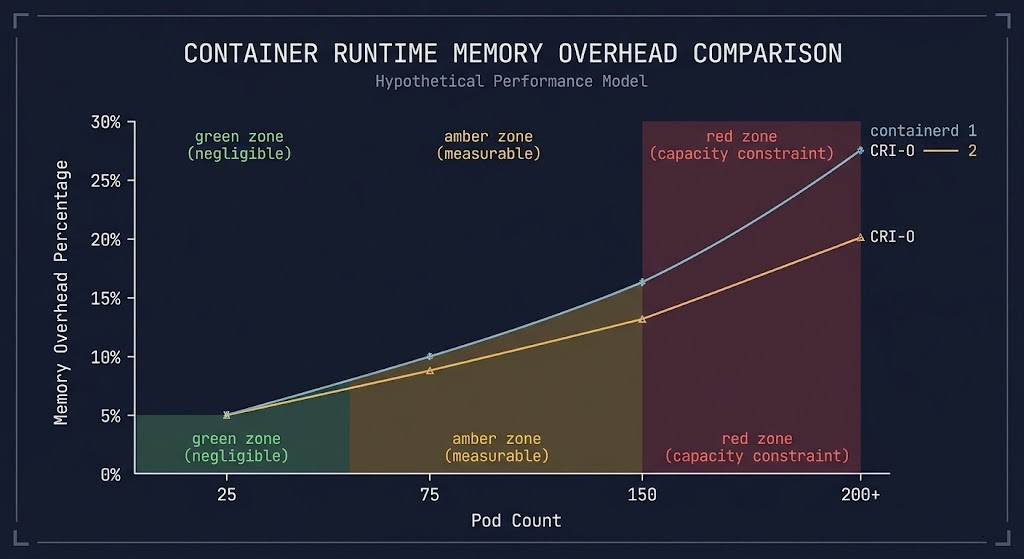

At low pod counts, every container runtime looks efficient. At scale, memory overhead becomes the limit you didn’t plan for.

This isn’t a benchmark. It’s about how many pods you actually fit per node — and what happens to your infrastructure cost when the runtime you chose starts eating into that headroom.

Why Runtime Memory Overhead Gets Ignored Until It Hurts

Most runtime comparisons test containerd and CRI-O at idle or single-digit pod counts. The numbers look clean. The difference looks negligible. Teams make a selection based on ecosystem alignment or documentation quality and move on.

Then the cluster scales.

What changes isn’t the per-pod overhead in isolation — it’s the compound effect of runtime daemons, kubelet interaction, and scheduling burst behavior under real workloads. That’s where containerd and CRI-O start to diverge in ways that matter to infrastructure cost. If you’ve already selected a runtime and are tuning for density, the Container Runtime Optimization guide covers the next layer.

What Most Benchmarks Miss

What Benchmarks Test

- Baseline runtime memory at rest

- Single container startup time

- Low-density scenarios (10–20 pods)

- Isolated runtime behavior

What They Miss

- Memory behavior under scheduling bursts

- Daemon overhead as pod count climbs

- Kubelet + runtime interaction at high churn

- System pressure when nodes approach capacity

The result is a clean number that tells you almost nothing about how your nodes behave at 60% or 80% capacity. Real clusters don’t idle. They schedule, reschedule, crash-loop, and scale — and runtime overhead compounds with every event.

containerd vs CRI-O: The Scaling Curve

Based on observed patterns across production environments and CNCF published data, the overhead picture looks like this:

CRI-O’s stricter CRI compliance and leaner daemon model gives it a measurable edge at the 150+ tier. The tradeoff is ecosystem reach and operational tooling, covered below.

What That Overhead Actually Costs

Memory overhead at scale isn’t an abstract performance metric — it translates directly to node count, and node count translates directly to your cloud bill.

Consider a cluster running 1,000 pods across worker nodes sized at 8GB RAM:

- At 150 pods per node, you need roughly 7 nodes

- A 10% memory overhead difference means one of those nodes is effectively running at reduced usable capacity

- Across 10 nodes, you’re looking at the equivalent of one full node consumed by runtime overhead

At AWS on-demand pricing for a standard compute-optimized instance, that’s $150–$400/month depending on instance class — for overhead that never appeared in your initial sizing model.

That’s not a reason to immediately switch runtimes. It’s a reason to factor runtime selection into your node sizing from the start.

Operational Reality: What the Memory Number Doesn’t Tell You

Debugging complexity containerd’s tooling ecosystem is broader. ctr, crictl, and third-party integrations are more mature and better documented. When something breaks at 3AM, the containerd debugging path has wider community coverage. CRI-O’s stricter model means fewer surprises at runtime — but fewer resources when you hit an edge case outside the OpenShift ecosystem.

Ecosystem alignment containerd is the default runtime for EKS, GKE, and most upstream Kubernetes distributions. If your team operates across multiple cluster environments, standardizing on containerd reduces cognitive overhead. CRI-O is the native runtime for OpenShift and optimized for environments where strict CRI compliance is a hard requirement. The Container Security Architecture guide covers how runtime selection affects your security posture at the policy enforcement layer.

Stability under churn High pod churn — rolling deployments, HPA scaling events, crash-loop recovery — stresses runtime stability differently than steady-state operation. containerd’s production hardening across large-scale deployments gives it an edge in high-churn environments. For operational failure patterns that surface under churn, the Kubernetes Day 2 Operations guide covers the runtime-level failure modes worth knowing before they hit production.

How to Use This in Your Node Sizing

Three inputs for your next capacity model:

1. Know your target pod density. If you’re running under 50 pods per node, runtime memory overhead is not a decision factor. If you’re targeting 100+, it belongs in your sizing calculation.

2. Add 10–15% runtime overhead buffer to your memory allocation model at high density, regardless of runtime choice. This accounts for runtime daemons, kubelet overhead, and system-level processes that compound under scheduling pressure.

3. Match runtime to ecosystem, not benchmarks. containerd wins on reach, tooling, and churn stability. CRI-O wins on memory efficiency at extreme density and strict CRI compliance. If you’re on OpenShift, the decision is already made for you.

Architect’s Verdict

containerd is the right default for most teams — broader ecosystem support, better tooling, and proven stability under high churn make it the lower-risk choice at scale. CRI-O earns its place in environments where pod density is extreme and operational complexity is tightly controlled, or where OpenShift is already the platform. The memory delta between them is real at 150+ pods per node, but it’s a sizing input, not a reason to fight your ecosystem. Model the overhead, right-size your nodes, and pick the runtime your platform already expects.

Additional Resources

Editorial Integrity & Security Protocol

This technical deep-dive adheres to the Rack2Cloud Deterministic Integrity Standard. All benchmarks and security audits are derived from zero-trust validation protocols within our isolated lab environments. No vendor influence.

Get the Playbooks Vendors Won’t Publish

Field-tested blueprints for migration, HCI, sovereign infrastructure, and AI architecture. Real failure-mode analysis. No marketing filler. Delivered weekly.

Select your infrastructure paths. Receive field-tested blueprints direct to your inbox.

- > Virtualization & Migration Physics

- > Cloud Strategy & Egress Math

- > Data Protection & RTO Reality

- > AI Infrastructure & GPU Fabric

Zero spam. Includes The Dispatch weekly drop.

Need Architectural Guidance?

Unbiased infrastructure audit for your migration, cloud strategy, or HCI transition.

>_ Request Triage Session