SOVEREIGN NETWORKING

The Network Plane Is Where Sovereignty Is Enforced — or Lost.

Sovereign networking is not a firewall rule. It is not a private VLAN. It is not a network access control policy.

It is the architectural property that determines whether every decision your network makes — every name resolution, every routing decision, every access boundary enforcement, every egress path — operates independently of external authority.

Most organizations with sovereign infrastructure ambitions have addressed the Identity Plane and the Data Plane. They have deployed local identity providers, HSM-anchored PKI, and immutable storage outside the primary control plane. Then they have left the network boundary open — not visibly, not obviously, but structurally. A recursive DNS resolver that calls a public upstream. An API gateway that authenticates via an external OIDC provider. A telemetry agent that phones home on port 443. A container registry pull that exits the boundary on every deployment.

None of these failures alert. None of them appear in dashboards. All of them are sovereignty failures — and they are active continuously, under normal operations, while the architecture appears sovereign on every diagram that was ever drawn of it.

This page answers one question: what must be true — architecturally, testably, and simultaneously — for the Network Plane to be sovereign?

Sovereign Networking and the Network Plane

Sovereign networking is the property of a network boundary that enforces control plane independence at every layer — DNS, routing, API boundary, and egress — without requiring any external system to be reachable for decisions to be made.

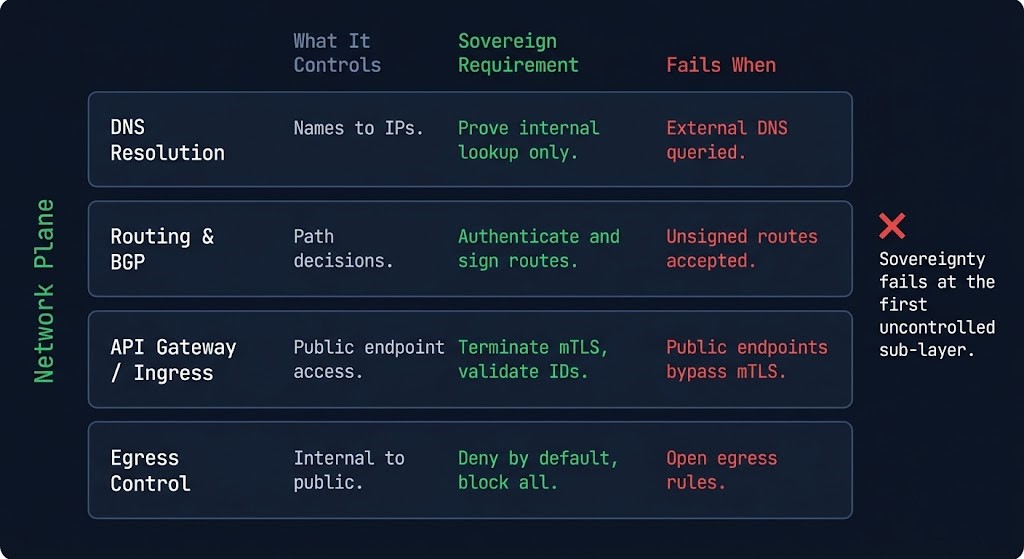

The Network Plane is defined by four sub-layers. Each sub-layer represents a category of network decisions your infrastructure makes continuously. Sovereignty requires that all four operate without external authority.

This is the Rack2Cloud Network Plane Model.

| Sub-Layer | What It Controls | Sovereign Requirement | Fails When |
|---|---|---|---|
| DNS Resolution | Name-to-address translation for every internal call | Authoritative DNS server within sovereign boundary; recursive resolver with no public upstream for internal zones | External DNS required; internal FQDNs resolve via public recursive resolver; split-horizon gaps allow upstream escape |
| Routing & BGP | Traffic path decisions; which network carries which workload | All routing decisions made without external BGP peer dependency; intra-boundary routing self-contained | Provider network controls routing tables; BGP session with external peer required for internal workload routing |
| API Gateway / Ingress | External boundary enforcement; what can reach what from outside | API gateway authenticates via locally controlled identity; TLS terminates within the sovereign boundary | Gateway authenticates via external OAuth/OIDC; TLS private keys held by CDN or external load balancer |
| Egress Control | What leaves the boundary and where it goes | All outbound traffic policy-governed and audited; no unmonitored egress paths for any reason | Management agents, telemetry, license validators, NTP, container pulls create unmonitored external reachability |

The core rule: sovereignty fails at the first sub-layer you don’t control.

This is not a compound failure requiring multiple planes to fail simultaneously. The routing and API boundary can be fully controlled, the egress policy perfectly enforced — and a single DNS recursive query to 8.8.8.8 for an internal FQDN breaks sovereignty at the resolution layer. The decision about what your system reaches is being made externally. Everything else is correctly architected infrastructure built on a network boundary you don’t actually control.
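The core rule can be sketched as a short-circuit check over the four sub-layers. This is a minimal illustration, not part of the Rack2Cloud model itself: the names `SUB_LAYERS`, `first_uncontrolled`, and `is_sovereign` are hypothetical, and the boolean inputs are assumed to come from real tests rather than diagrams.

```python
from typing import Dict, Optional

# The four sub-layers of the Network Plane Model, in table order.
SUB_LAYERS = ("dns", "routing", "api_boundary", "egress")


def first_uncontrolled(controlled: Dict[str, bool]) -> Optional[str]:
    """Return the first sub-layer that is not locally controlled, or None."""
    for layer in SUB_LAYERS:
        # A missing entry counts as uncontrolled: unverified is not sovereign.
        if not controlled.get(layer, False):
            return layer
    return None


def is_sovereign(controlled: Dict[str, bool]) -> bool:
    """The plane is sovereign only if every sub-layer is controlled."""
    return first_uncontrolled(controlled) is None


if __name__ == "__main__":
    # Routing, API boundary, and egress are controlled, but DNS forwards out.
    status = {"dns": False, "routing": True, "api_boundary": True, "egress": True}
    print(first_uncontrolled(status), is_sovereign(status))
```

The design choice worth noting is that an unlisted sub-layer is treated as a failure, which mirrors the rule that sovereignty has to be shown for each layer rather than assumed.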

The Sovereign Networking Illusion

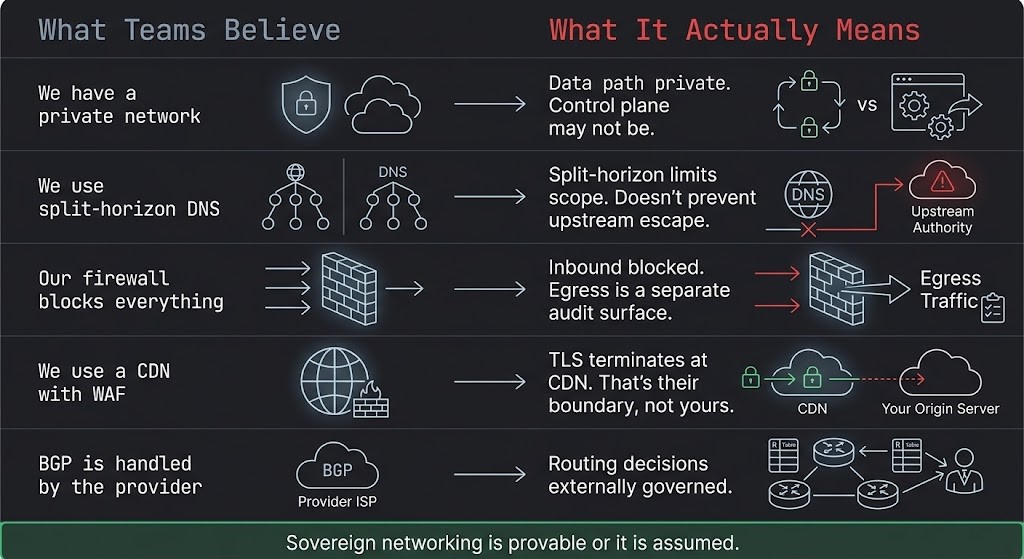

The illusion of network sovereignty is maintained by a set of beliefs that are individually accurate as descriptions of real investments and collectively wrong as claims about sovereignty. Each belief describes something real. None describes the sovereign property.

| What Teams Believe | What It Actually Means |

|---|---|

| We have a private network | The data path is private. The control plane managing it — DNS, BGP, API authentication — may not be. A private wire between two externally controlled endpoints is a private pipe, not a sovereign boundary. |

| We use split-horizon DNS | Split-horizon limits what resolves where. It does not prevent recursive queries from escaping to public upstream resolvers when internal resolvers lack authoritative answers. The split is in resolution scope, not in the resolver’s external reachability. |

| Our firewall blocks everything | Perimeter enforcement limits inbound access. Egress — DNS on port 53, NTP on 123, HTTPS telemetry on 443, container pulls on 443 — is a separate audit surface that firewalls typically allow by policy. Inbound blocking and outbound sovereignty are independent properties. |

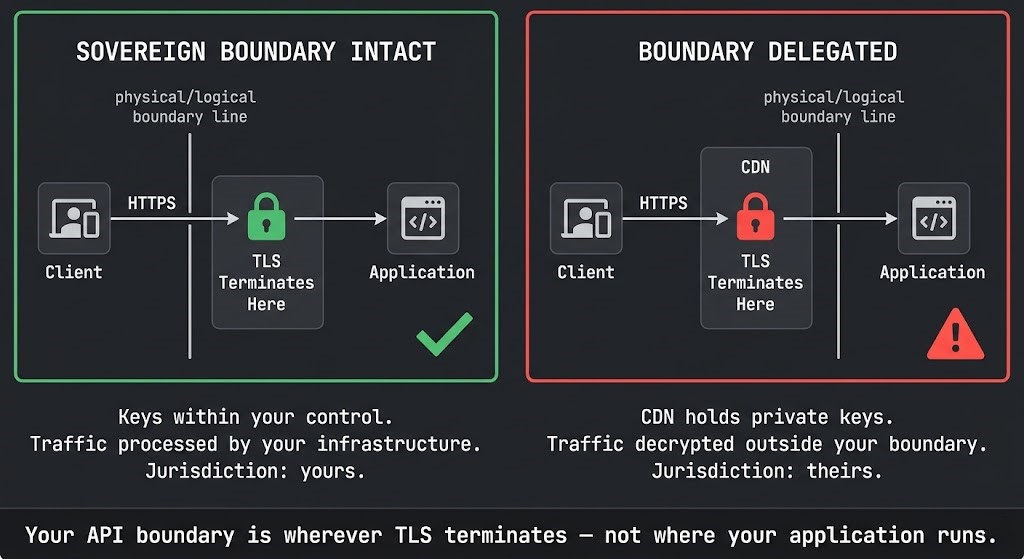

| We use a CDN with WAF | Your CDN terminates TLS before your application sees the traffic. Your API boundary is wherever TLS terminates — not where your application runs. If TLS terminates at the CDN, the CDN operator holds your private keys and controls your external boundary. |

| BGP is handled by the provider | Routing decisions are controlled by whoever controls BGP. If your provider controls your BGP sessions, your workload routing is externally governed. Provider-managed BGP is provider-controlled network sovereignty. |

| We have network segmentation | Segmentation controls lateral movement within the boundary. It does not address what the segments themselves depend on externally — their DNS, their NTP, their management plane reachability. A perfectly segmented network with external DNS is a network where every segment’s name resolution is externally controlled. |

The illusion persists because network sovereignty gaps are invisible under normal operations. The DNS query resolves. The telemetry agent reaches its endpoint. The API gateway authenticates successfully. Everything functions. The sovereignty failure is present at rest, and it only matters under the conditions sovereign architecture exists to survive: network isolation, provider unreachability, jurisdiction enforcement, or an adversary who understands that DNS is the first thing to compromise.

Sovereign networking is provable or it is assumed. There is no operational state between them.

DNS: The Most Common Sovereign Networking Failure

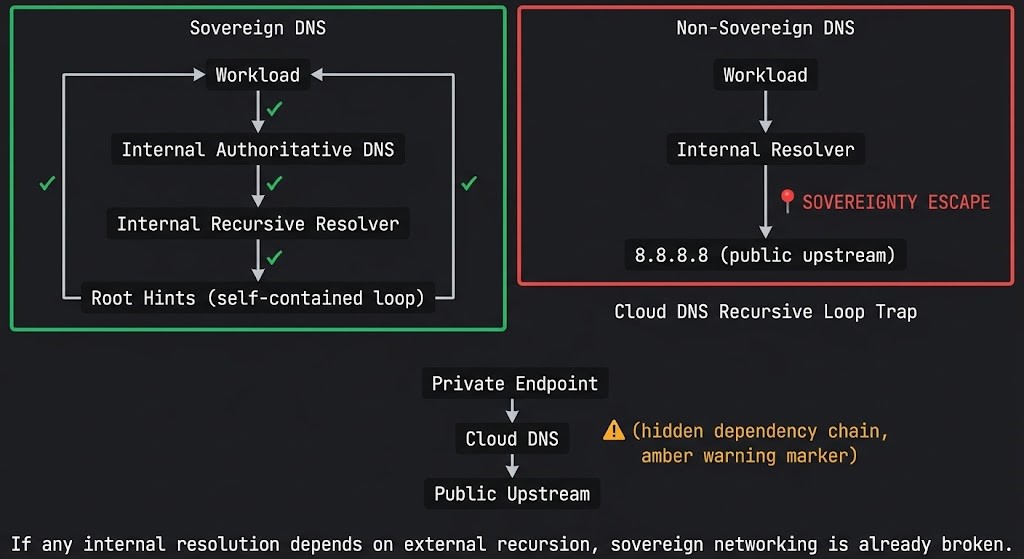

DNS is the most common sovereign networking failure. It is also the least monitored control plane in enterprise infrastructure.

Every system call begins with a name. Before a workload can reach a database, authenticate a token, validate a certificate, or pull a configuration file, it resolves a name. That resolution is a control plane decision — and in most enterprise environments, including many described as sovereign, that decision depends on a resolver that calls a public upstream when it lacks a local authoritative answer.

If any internal resolution depends on external recursion, sovereign networking is already broken. Not degraded. Not partially compromised. Broken, because the decision about what your system can reach is delegated to an external system you do not control on every name resolution event that occurs in your environment.

The DNS sovereignty failures break into four patterns:

External Recursive Dependency — the most common failure. Internal resolvers configured with public upstream forwarders (8.8.8.8, 1.1.1.1, 9.9.9.9) or provider-managed DNS as the upstream. Internal FQDNs that lack local authoritative answers route to public resolvers. Detection: sever all external DNS paths. Does internal name resolution continue for every FQDN your workloads require?

Split-Horizon Gaps — split-horizon DNS configures different answers for internal versus external queries. It does not guarantee that internal resolvers are authoritative for all internal zones. Gaps in zone coverage cause recursive fallback to external resolvers for any FQDN not covered by the internal authoritative zones. Detection: enumerate every FQDN your internal workloads resolve. Verify each has a local authoritative answer.

Cloud DNS Recursive Loop Dependency — the most architecturally subtle failure, and one that breaks “private” network architectures specifically. The pattern: a private endpoint is configured to resolve via a cloud-managed DNS service (Azure Private DNS, AWS Route 53 Resolver, GCP Cloud DNS). The cloud DNS service is itself dependent on an upstream public resolver for zones it doesn’t hold authoritative answers for. The private endpoint resolves — but the chain resolves through the provider’s DNS infrastructure, which retains external reachability. The network appears private. The DNS control plane is externally anchored. This failure mode is documented in detail in the Azure Private Endpoint DNS recursive loop analysis — where a “private” resolution path creates a dependency chain that exits the supposed sovereign boundary.

DNS as Exfiltration Channel — data encoded in DNS query strings and resolved against attacker-controlled authoritative nameservers. DNS tunneling is operationally silent in environments where DNS traffic is not inspected at the boundary. The exfiltration path is the same port, the same protocol, and the same traffic pattern as legitimate resolution. Detection requires DNS query inspection — query frequency analysis, entropy analysis of query strings, and verification that all external resolution targets are expected authoritative nameservers.
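The entropy analysis mentioned for DNS exfiltration detection can be sketched as follows. This is a rough heuristic under stated assumptions: the function names are hypothetical, and the thresholds (`max_label_len=40`, `min_len=16`, `entropy_threshold=3.5`) are illustrative values that would need tuning against real query logs, not recommended settings.

```python
import math
from collections import Counter


def shannon_entropy(text: str) -> float:
    """Shannon entropy of a string, in bits per character."""
    if not text:
        return 0.0
    total = len(text)
    counts = Counter(text)
    return abs(sum((n / total) * math.log2(n / total) for n in counts.values()))


def looks_like_tunneling(qname: str,
                         max_label_len: int = 40,
                         min_len: int = 16,
                         entropy_threshold: float = 3.5) -> bool:
    """Flag a query name whose longest label is unusually long or random-looking.

    Thresholds are assumptions for illustration only.
    """
    labels = qname.rstrip(".").split(".")
    longest = max(labels, key=len)
    if len(longest) > max_label_len:
        return True
    # Very short labels are skipped: entropy is not meaningful on a few characters.
    return len(longest) >= min_len and shannon_entropy(longest) > entropy_threshold


if __name__ == "__main__":
    for name in ("db01.corp.internal", "0123456789abcdef.t.example.net"):
        print(name, looks_like_tunneling(name))
```

A per-name heuristic like this produces false positives on its own (hashed CDN hostnames, for example), which is why the text pairs it with query frequency analysis and verification of the authoritative nameservers being reached.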

The DNS sovereignty test: Disable all external DNS paths. Can every FQDN your workloads, authentication systems, certificate validation, and management tools require resolve from internal authoritative sources alone? Any “no” answer is an active sovereignty failure.
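Before running the blackout itself, two of the checks above can be approximated offline from configuration data. The sketch below assumes you can export the resolver's forwarder list, the set of locally authoritative zones, and the FQDNs your workloads resolve; the function names and sample values are hypothetical.

```python
import ipaddress
from typing import Iterable, List


def external_forwarders(forwarders: Iterable[str]) -> List[str]:
    """Return forwarder addresses that are publicly routable."""
    return [f for f in forwarders if ipaddress.ip_address(f).is_global]


def uncovered_fqdns(required_fqdns: Iterable[str],
                    authoritative_zones: Iterable[str]) -> List[str]:
    """Return FQDNs that fall under no locally authoritative zone."""
    zones = [z.lower().rstrip(".") for z in authoritative_zones]
    missing = []
    for fqdn in required_fqdns:
        name = fqdn.lower().rstrip(".")
        # A name is covered if it equals a zone apex or sits beneath one.
        if not any(name == z or name.endswith("." + z) for z in zones):
            missing.append(fqdn)
    return missing


if __name__ == "__main__":
    print(external_forwarders(["10.0.0.53", "8.8.8.8"]))
    print(uncovered_fqdns(["db.corp.internal", "login.idp.example.com"],
                          ["corp.internal"]))
```

A clean result here is only a configuration review. It does not replace the isolation test, because a name can sit under a local zone and still have no record in it.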

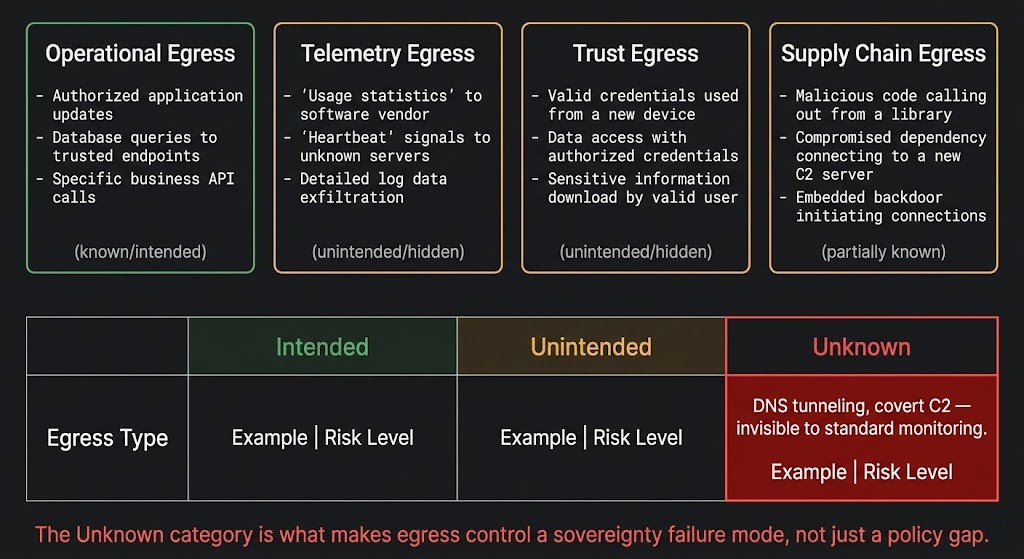

Egress Control: What Actually Leaves the Boundary

Egress control is the most consistently incomplete layer of network sovereignty architecture — not because organizations don’t have egress policies, but because the egress audit has never been run against the actual traffic profile of the environment.

Most egress policies are written against known, intentional traffic: application API calls, software update endpoints, cloud service access. They are not written against the full egress surface of a production environment — which includes categories of traffic that are unintentional, unmonitored, and in some cases unknown.

The Rack2Cloud Egress Audit Framework defines four egress categories. Every sovereign networking architecture must account for all four.

| Egress Type | Example | Risk Profile |
|---|---|---|
| Intended | Application API calls, cloud service access | Known: policy can be written and enforced |
| Unintended | Telemetry agents, license validators, crash reporters | Hidden: policy is typically absent; not in scope of standard review |
| Unknown | DNS tunneling, covert C2 channels, exfiltration via permitted protocols | Critical: invisible to standard monitoring; operates within permitted egress rules |
| Trust | NTP time synchronization, OCSP revocation checks, vendor management agents | Couples Identity Plane functions (token expiry validation, revocation checks) to external reachability; fails during isolation |

The egress sovereignty test: Can you enumerate every external destination your environment contacts in a 24-hour period? Any destination you cannot explain is an uncontrolled egress path.
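The reconciliation step behind this test can be sketched as a comparison between observed destinations (from flow logs or packet capture) and the destinations your policy documents. The function name, the category labels, and the sample hostnames below are hypothetical.

```python
from typing import Dict, Iterable, List


def reconcile_egress(observed: Iterable[str],
                     documented: Dict[str, str]) -> Dict[str, object]:
    """Split observed destinations into explained (by category) and unexplained.

    observed:   destinations seen in captured traffic over the audit window
    documented: destination -> egress category from the written policy
    """
    explained: Dict[str, List[str]] = {}
    unexplained: List[str] = []
    for dest in sorted(set(observed)):
        if dest in documented:
            explained.setdefault(documented[dest], []).append(dest)
        else:
            # Anything nobody can explain is an uncontrolled egress path.
            unexplained.append(dest)
    return {"explained": explained, "unexplained": unexplained}


if __name__ == "__main__":
    seen = ["api.partner.example", "telemetry.vendor.example",
            "203.0.113.7", "api.partner.example"]
    policy = {"api.partner.example": "intended",
              "telemetry.vendor.example": "unintended"}
    print(reconcile_egress(seen, policy))
```

The input that matters is `observed`: it has to come from actual traffic, since a list derived from the policy document would reconcile perfectly and prove nothing.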

Trust Egress, the outbound traffic that trust functions such as time synchronization and certificate revocation checking depend on, deserves specific attention because it connects directly to the Identity Plane’s sovereignty status. When NTP depends on external servers, clocks drift once an isolated environment loses that path, which causes short-lived token expiry validation to fail, exactly as documented in the Sovereign Identity & Access Architecture time dependency failure mode. OCSP certificate revocation checks to external endpoints mean that compromised credentials cannot be confirmed as revoked during network isolation, so the blast radius of a credential compromise expands for the entire duration of the isolation event. Trust egress is not a secondary concern. It is the network-layer mechanism that either supports or undermines the cryptographic sovereignty architecture.
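The clock drift effect can be made concrete with a small worked example. The sketch below models a validator whose clock has drifted from the issuer's; the function name, the 300-second token lifetime, and the 60-second leeway are illustrative assumptions, not values from any particular identity system.

```python
def token_accepted(issued_at: float, expires_at: float,
                   validator_now: float, leeway: float = 0.0) -> bool:
    """True if the validator's clock falls inside the token's validity window."""
    return (issued_at - leeway) <= validator_now <= (expires_at + leeway)


if __name__ == "__main__":
    issued_at, expires_at = 1000.0, 1300.0   # 300-second token (assumed lifetime)
    true_now = 1010.0                        # token is 10 seconds old
    for drift in (0.0, 400.0, -120.0):
        # drift = validator clock minus true time, in seconds
        ok = token_accepted(issued_at, expires_at, true_now + drift, leeway=60.0)
        print(f"drift={drift:+.0f}s accepted={ok}")
```

With no drift the fresh token is accepted. With the validator 400 seconds fast it looks expired, and with the validator 120 seconds slow it looks not yet valid, even though nothing is wrong with the token or the identity provider.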

API Boundary Architecture: Where TLS Terminates Is Where Sovereignty Ends

Your API boundary is wherever TLS terminates — not where your application runs.

This statement has a binary consequence for sovereign networking architecture:

| TLS Termination | Sovereignty Status |
|---|---|
| Terminates inside your sovereign boundary | Boundary intact: your infrastructure processes the decrypted traffic; keys are within your control |
| Terminates outside your sovereign boundary | Boundary delegated: the terminating party processes all decrypted traffic; their infrastructure is between your clients and your application |

There is no gray area. TLS termination is the cryptographic boundary. If TLS terminates at a CDN provider, a third-party WAF, or an externally managed load balancer, every decrypted API request is processed by infrastructure outside your sovereign boundary. The provider can read, log, modify, or withhold that traffic. The provider’s jurisdiction applies to it. A jurisdiction-targeted data access order served to the CDN provider is served to your API boundary — and your application has no visibility into or control over the response.

CDN-Terminated TLS is the most common API boundary sovereignty failure. Organizations deploy CDNs for performance, DDoS mitigation, and geographic distribution — all legitimate capabilities. The sovereignty consequence is that TLS termination at the CDN means the CDN operator becomes the de facto API boundary authority. For regulated workloads where data access must be controlled at the architectural level, CDN-terminated TLS requires either mTLS bypass for regulated traffic or an architecture where the CDN handles only unencrypted distribution and sovereign workloads terminate TLS at the application boundary directly.

External OIDC/OAuth at the API gateway is the second most common failure. An API gateway configured to validate tokens against an external OIDC provider has an identity dependency that connects the API boundary to the external identity provider’s availability. If the external provider is unreachable — by network failure, by jurisdiction order, or by incident — the API gateway cannot validate access requests. The boundary fails in the direction of the provider’s availability, not the application’s. This connects directly to the Identity Plane: the token validation escape failure mode documented in the Sovereign Identity & Access Architecture manifests at the API gateway, not at the identity system.

Sovereign API boundary architecture requires: TLS termination within the sovereign perimeter, token validation against locally served JWKS, and mTLS enforcement for service-to-service traffic within the boundary. The Service Mesh Architecture and the Service Mesh vs eBPF analysis cover the mTLS enforcement layer in detail.
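The three requirements can be expressed as a simple configuration review. The sketch below assumes a gateway configuration flattened into a dictionary; the keys `jwks_uri`, `tls_terminates_at`, and `mtls_required` are hypothetical and do not correspond to any specific gateway product.

```python
from typing import Dict, Iterable, List
from urllib.parse import urlparse


def host_is_internal(url: str, internal_suffixes: Iterable[str]) -> bool:
    """True if the URL's host equals or sits beneath an internal DNS suffix."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == s or host.endswith("." + s) for s in internal_suffixes)


def api_boundary_findings(config: Dict[str, object],
                          internal_suffixes: Iterable[str]) -> List[str]:
    """List the sovereignty gaps in a (hypothetical) gateway configuration."""
    suffixes = list(internal_suffixes)
    findings = []
    if not host_is_internal(str(config.get("jwks_uri", "")), suffixes):
        findings.append("token validation depends on a JWKS endpoint outside the boundary")
    if config.get("tls_terminates_at") != "internal":
        findings.append("TLS terminates outside the sovereign boundary")
    if not config.get("mtls_required", False):
        findings.append("service-to-service mTLS is not enforced")
    return findings


if __name__ == "__main__":
    cfg = {"jwks_uri": "https://idp.example.com/.well-known/jwks.json",
           "tls_terminates_at": "cdn", "mtls_required": False}
    for finding in api_boundary_findings(cfg, ["corp.internal"]):
        print(finding)
```

An empty findings list only says the configuration is consistent with the requirements. Whether the gateway actually validates tokens with the external path severed still has to be tested.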

The network control plane architecture that makes these boundary decisions enforceable — topology class selection, east-west enforcement mechanism, and control plane blast radius — is covered in the Modern Networking Logic strategy guide.

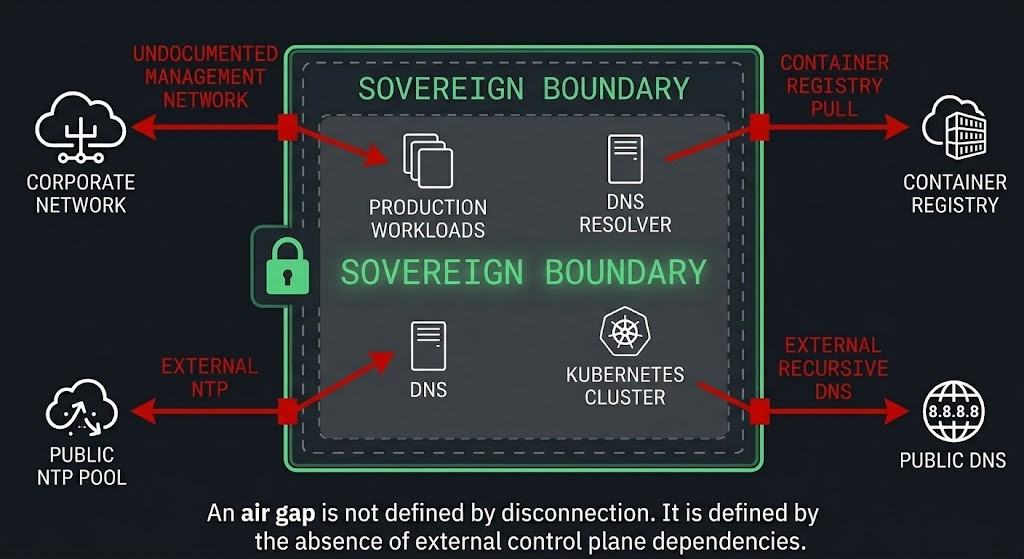

The Fake Air Gap — Network Edition

An air gap is not defined by disconnection. It is defined by the absence of external control plane dependencies.

Physical network isolation — severed cables, RF shielding, separate power domains — is real isolation. It prevents packets from crossing the physical boundary. It does not prevent the systems inside from depending on external control planes that must be reachable for those systems to function. An air-gapped system that cannot authenticate users because the identity provider is external is not sovereign — it is unusable. An air-gapped system that cannot resolve DNS because the resolver requires a public upstream is not isolated — it is broken. The isolation is physical. The sovereignty failure is architectural.

The Fake Air Gap failure modes in network architecture follow a consistent pattern: physical or logical isolation exists at the perimeter while control plane dependencies create invisible external reachability requirements inside it.

Physical Isolation, Undocumented Management Network — the most common form. The primary network is physically isolated. A management network — for remote access, monitoring, or vendor support — has connectivity to the corporate network or the internet that bypasses the primary isolation. The air gap is real for data traffic. The management plane bypasses it. Detection: full egress audit on the management network. Any unexpected external endpoint fails the sovereignty test.

Air-Gapped Kubernetes with External Container Registry — an isolated Kubernetes cluster that pulls container images from an external registry on every pod deployment or node initialization. The cluster is network-isolated. Every workload deployment requires external reachability. In practice, this means the cluster is operationally dependent on external connectivity for any state change — not just at deployment, but whenever a node restarts and re-pulls images. Detection: trace every container image pull. If any image source is external, workload sovereignty is dependent on external registry availability.

Isolated Network with External NTP — short-lived tokens, certificate validity windows, and authentication timestamps all depend on clock synchronization. An isolated network with external NTP dependencies develops clock drift in the absence of external connectivity — which causes token validation failures, certificate expiry mismatches, and authentication system errors that appear to be identity failures but are actually network sovereignty failures manifesting through the identity layer.

VPN-Connected “Isolated” Segments — network segments described as isolated that maintain VPN connectivity for management or monitoring. The VPN connection is the control plane escape path. Traffic crosses the boundary on an encrypted channel that is treated as acceptable because it is encrypted and authenticated — but it is still external reachability, and it is still a sovereignty boundary violation for any control plane decision that traverses it.
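For the container registry pattern above, the detection step ("trace every container image pull") can be approximated by classifying image references by registry host. The sketch follows the common Docker convention that the first path component is a registry host only if it contains a dot or colon or is `localhost`, and otherwise the default registry applies; the function names and the internal registry hostname are hypothetical.

```python
from typing import Iterable, List, Set


def registry_of(image_ref: str) -> str:
    """Return the registry host implied by an image reference."""
    first, sep, _rest = image_ref.partition("/")
    # Without a slash, or with a plain first component, the default registry applies.
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def external_images(image_refs: Iterable[str],
                    internal_registries: Set[str]) -> List[str]:
    """Return image references whose registry is not inside the boundary."""
    return [ref for ref in image_refs if registry_of(ref) not in internal_registries]


if __name__ == "__main__":
    images = ["registry.corp.internal:5000/app/api:1.0",
              "nginx:1.25",
              "ghcr.io/org/tool:2"]
    print(external_images(images, {"registry.corp.internal:5000"}))
```

Running this over the image references in deployed manifests gives a first list of external dependencies. It does not catch images pulled by init scripts or operators at runtime, so the egress audit remains the authoritative check.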

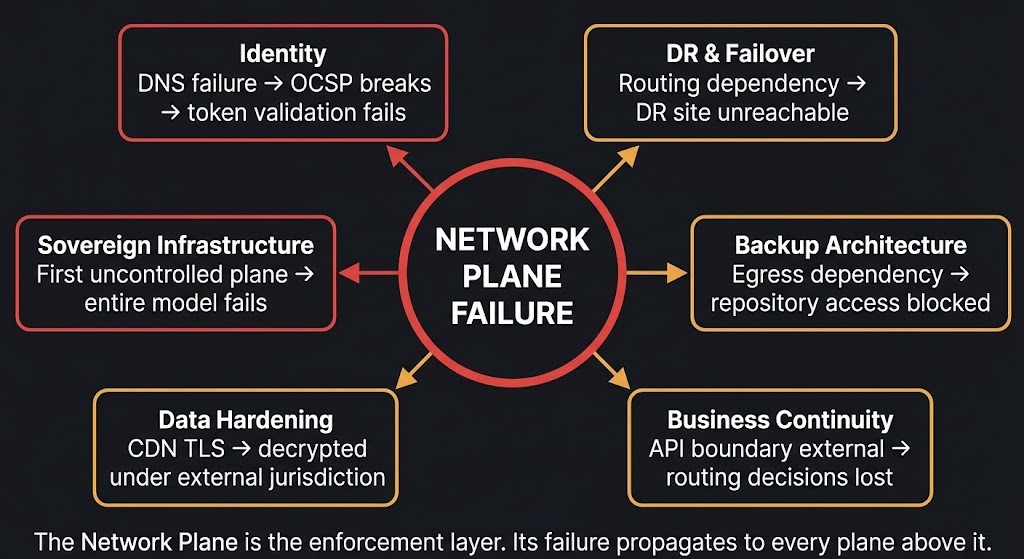

What Happens When the Network Plane Fails

Network plane failures do not stay in the network layer. Because the network plane is the enforcement layer for every other sovereignty claim, its failure propagates immediately to the planes that depend on it.

Sovereign DNS Architecture Patterns

Six architectural patterns implement sovereign DNS. Each solves a specific DNS sovereignty dependency class.

Network Plane Failure Modes

These are the architectural failure modes that break sovereign networking in environments that appear network-sovereign on paper. Unlike a DDoS attack or a routing incident — which are visible — these are structural conditions that maintain the appearance of sovereignty while eliminating its substance. They are present at rest and only reveal themselves under the conditions sovereign infrastructure was built to survive.

Cross-Pillar Impact: How Network Plane Failures Propagate

Network sovereignty failures do not remain isolated to the network layer. Every architecture that depends on name resolution, authenticated access, or routable connectivity — which is every architecture — inherits the network plane’s sovereignty status. When the network plane fails, the architectures built on it fail with it.

| Architecture | Network Failure | First Thing That Breaks | Specific Failure Mode |
|---|---|---|---|
| Backup Architecture | Egress dependency | Repository access | Backup jobs fail if the backup control plane requires external reachability; off-site repository inaccessible if routing depends on external BGP |
| Disaster Recovery & Failover | Routing dependency | Failover unreachable | DR site cannot be reached if provider BGP controls cross-site routing during the event that triggered failover |
| Cybersecurity & Ransomware Survival | DNS as C2 channel | Undetected exfiltration | Ransomware C2 communication via DNS tunneling is invisible to standard network monitoring; egress policy permits DNS by necessity |
| Business Continuity & Resilience | API boundary external | Traffic cannot be re-routed | BC routing decisions require an API gateway that authenticates externally; gateway unavailable during the incident that triggered BC |
| Sovereign Identity & Access | DNS failure | Token validation | OCSP resolution fails; OIDC JWKS endpoint unreachable; certificate revocation cannot be confirmed; identity plane non-functional |
| Data Hardening Logic | API boundary: CDN-terminated TLS | Data access boundary delegated | Encrypted data accessible via CDN operator under jurisdiction order; customer encryption does not protect data processed at CDN boundary |
| Sovereign Infrastructure | Any network sub-layer | Entire sovereignty model | Per the Rack2Cloud Sovereignty Control Plane Model: sovereignty fails at the first plane you don’t control. Network is the enforcement layer; its failure propagates to every plane above it. |

Threat Model

Sovereign networking is not a compliance architecture. It is a survival architecture — designed for the specific threat conditions where non-sovereign network boundaries are targeted, exploited, or compelled.

DNS Exfiltration as Ransomware C2 — ransomware operators increasingly use DNS tunneling for command-and-control communication and data exfiltration because DNS traffic is permitted by virtually every egress policy and passes through most network monitoring without inspection. A ransomware operator with DNS C2 capability can exfiltrate data, receive commands, and coordinate payload delivery entirely within the bounds of permitted network traffic. The ransomware backup architecture documents this attack pattern — the backup control plane is targeted specifically because operators understand that recovery depends on it and that DNS is the covert channel that survives standard monitoring.

BGP Hijacking in Shared Provider Environments — BGP route injection by a malicious participant in a shared routing domain can redirect workload traffic to adversary-controlled infrastructure without any visible network failure. From inside the affected network, connectivity appears normal. Traffic is being processed by a different endpoint. For organizations with sovereign data requirements, BGP-level traffic redirection in a provider-controlled routing environment is not a theoretical threat — it is the structural consequence of delegating routing sovereignty to a shared provider BGP infrastructure.

TLS Interception at CDN Boundary Under Jurisdiction Order — a foreign jurisdiction issues a data access order to a CDN provider operating in that jurisdiction. The provider complies — legally, under domestic law. The customer’s API traffic, decrypted at the CDN’s TLS termination point, is accessible to the jurisdiction’s enforcement authority. The customer’s application is running. The customer’s encryption is intact from client to CDN. The CDN’s decryption at the boundary is the access point. Sovereign networking architecture removes this attack surface by ensuring TLS terminates within the sovereign boundary, under the customer’s key custody.

Supply Chain Attack via Container Registry Egress — a compromised image in an external container registry is pulled by an isolated Kubernetes cluster that depends on external registries for image sourcing. The workload deployed inside the sovereign boundary is running adversary-controlled code delivered through the supply chain egress path. The network boundary is intact. The supply chain egress was the entry point. This is documented in the container security architecture — sovereign workloads require image sources within the sovereign boundary, not verified external sources that can be compromised.

Management Agent as Covert Exfiltration Path — a vendor management or monitoring agent installed in a sovereign environment establishes outbound connections to vendor infrastructure on port 443 as part of normal operation. The connections are authenticated, encrypted, and indistinguishable from legitimate HTTPS traffic. An adversary who compromises the vendor’s infrastructure — or a jurisdiction that serves a legal order to the vendor — has an authenticated channel into the sovereign environment through the management agent that was deployed to make operations easier. Trust Egress is the category. The management agent is the specific instance.

The Network Sovereignty Test

Before treating sovereign networking as complete, run this test. Sever all external network paths — DNS, NTP, external routing, external API endpoints. Then answer:

Can all internal FQDNs resolve with all external DNS paths severed?

If yes: DNS sovereignty is likely intact for internal resolution. If no: name resolution depends on external infrastructure — the network plane has failed before any other system has been touched.

Can workloads route to all required internal destinations without external BGP peer reachability?

If yes: routing sovereignty is intact for internal workload traffic. If no: routing decisions depend on provider BGP — workload reachability is externally governed.

Does your API gateway authenticate and enforce access without calling an external OIDC or OAuth endpoint?

If no: the API boundary depends on external identity provider availability. The boundary fails in the direction of provider availability — not application availability.

Does TLS terminate within your sovereign boundary for all externally accessible endpoints?

If no for some endpoints: those endpoints operate under the jurisdiction and key custody of the external termination point. Sovereignty is intact for the application. The boundary is delegated.

Can you enumerate every external destination your environment contacts in a 24-hour period?

Any destination you cannot explain is an uncontrolled egress path. The Egress Audit Framework defines four categories — Unknown egress is the critical failure class that standard monitoring cannot detect.

Can you disable all outbound internet access and still operate your system fully?

If no: the system has structural external dependencies that prevent full operation under isolation. These may be acceptable for specific workload classifications — they are not compatible with sovereign networking claims.

When did you last run a full egress audit against actual traffic — not policy documentation?

Never audited = assumed sovereignty. Egress policy documents intent. Traffic capture documents reality. The gap between them is the uncontrolled egress surface.
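One way to keep the distinction between tested and assumed answers honest is to record each question with its result and a pointer to the evidence. This is a minimal sketch: the `Check` structure, the verdict labels, and the sample evidence strings are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Check:
    question: str
    passed: bool
    evidence: str = ""  # pointer to test output, not to a policy document


def evaluate(checks: List[Check]) -> Tuple[str, List[str], List[str]]:
    """Return (verdict, failed questions, passed-but-unevidenced questions)."""
    failures = [c.question for c in checks if not c.passed]
    assumed = [c.question for c in checks if c.passed and not c.evidence.strip()]
    if failures:
        verdict = "FAIL"
    elif assumed:
        verdict = "ASSUMED"  # every answer is yes, but some were never tested
    else:
        verdict = "PROVEN"
    return verdict, failures, assumed


if __name__ == "__main__":
    results = [
        Check("Internal FQDNs resolve with external DNS severed", True, "isolation-test-log"),
        Check("TLS terminates within the boundary", True),
        Check("Every external destination enumerated", True, "egress-capture-report"),
    ]
    print(evaluate(results))
```

In this example the verdict is "ASSUMED" because one passing answer has no test result behind it, which is the situation the last question in the test is meant to expose.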

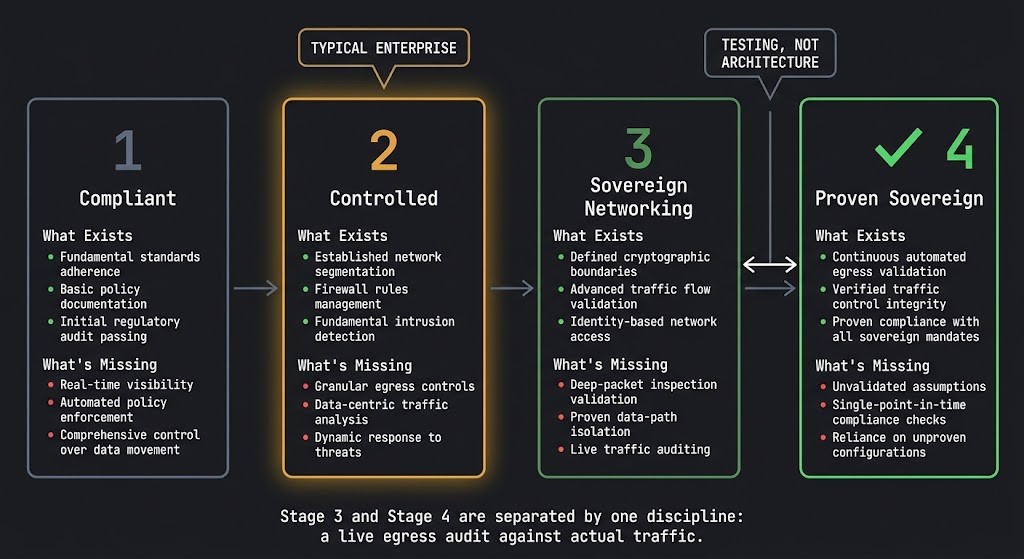

Network Sovereignty Maturity Model

Sovereignty maturity is measured by one criterion: is sovereignty provable, or assumed?

| Stage | State | What Exists | What’s Missing | Sovereignty Test |
|---|---|---|---|---|
| 1 | Compliant | Perimeter firewall deployed; basic egress policy documented; DNS provided by cloud or ISP | All DNS externally controlled; no egress audit performed; TLS terminates at CDN; no internal NTP | Can you identify which DNS resolver handles internal FQDNs? |
| 2 | Controlled | Internal DNS deployed for primary zones; basic egress filtering active; some TLS termination moved internally | Recursive resolver still has public upstream forwarders; egress audit not completed; NTP external; container registry external; split-horizon gaps present | Disable external DNS forwarders. Does resolution degrade for any internal FQDN? |
| 3 | Sovereign Networking | Internal authoritative DNS with no public upstreams; full egress audit completed; TLS terminates within boundary; internal NTP hierarchy; container registry mirrored internally; BGP routing independence validated | Network sovereignty documented but not tested under full isolation; AI inference endpoints not addressed for sovereign workloads; egress audit not run against current traffic profile | Full external network isolation test: can every workload operate and resolve without any external path? |
| 4 | Proven Sovereign Networking | All Stage 3 components plus: isolation test executed and documented; egress audit run against live traffic and reconciled against policy; DNS RPZ active for exfiltration prevention; DNSSEC deployed for internal zones; quarterly re-audit scheduled | Ongoing operational overhead: sovereign networking requires active maintenance and periodic re-audit as the environment changes | All Network Sovereignty Test questions answered PASS, with test results rather than policy documents |

Stage 3 and Stage 4 are separated by testing, not architecture. The gap between “correctly designed” and “proven sovereign” is a single discipline: executed validation under real isolation conditions. A DNS architecture that has never been tested under external DNS blackout is an assumed architecture. A sovereign network that has never been audited against actual egress traffic is a policy document, not an enforcement layer.

Decision Framework

| Scenario | Network Sovereignty Requirement | Minimum Architecture | Risk if Skipped |
|---|---|---|---|
| Regulated data under GDPR, DORA, NIS2 | Internal DNS + TLS boundary termination + egress audit | Authoritative internal DNS, no public upstream; TLS termination at application boundary | DNS queries leaking internal FQDNs to external resolvers; TLS decrypted by CDN under jurisdiction order |
| Defense or national security workloads | Full Network Plane Model: all four sub-layers sovereign | Internal DNS + BGP independence + boundary TLS + complete egress policy + internal NTP + internal container registry | Nation-state DNS manipulation; BGP hijacking; management agent exfiltration channel; supply chain attack |
| Healthcare or financial with adversarial threat model | Internal DNS + egress audit + DNS RPZ | Authoritative internal DNS; DNS RPZ for exfiltration prevention; egress audit with Unknown category addressed | DNS C2 during ransomware event; certificate revocation failures; covert exfiltration undetected for months |
| AI workloads processing regulated data | Inference endpoint network sovereignty + model registry internal | TLS terminates within boundary for all inference endpoints; model weights served from internal registry | Inference traffic decrypted at CDN; model weights accessible via CDN operator under jurisdiction order |
| Hybrid cloud with classified workloads | Network segmentation between sovereign and non-sovereign zones | Separate DNS, routing, and egress policy per classification tier; no shared network control plane | Cloud-managed DNS for sovereign zone queries; shared routing leaks sovereign workload reachability |
| Startup or early-stage organization | Internal DNS minimum + basic egress documentation | Replace public DNS with internal resolver for production zones; document egress policy | Full Stage 3–4 network sovereignty overhead exceeds benefit at this scale; revisit at regulated workload threshold |

All four control planes are now documented. Identity, Management, Data, and Network — each with independent architecture, testable sovereignty conditions, and a failure mode catalog. The model is complete. The test that determines whether your architecture passes it is not.

You’ve Read the Architecture.

Now Test Whether Your Network Boundary Actually Holds.

External DNS dependencies, unaudited egress paths, CDN-terminated TLS, provider BGP coupling — most sovereign network architectures look correct in documentation and surface their failure modes during the first real isolation event, jurisdiction order, or adversarial DNS manipulation. The triage session validates whether your specific environment passes the Network Sovereignty Test before a failure event does it for you.

Sovereign Infrastructure Audit

Vendor-agnostic review of your network sovereignty posture — DNS dependency mapping, egress audit against live traffic, TLS termination analysis, BGP routing independence assessment, and API boundary sovereignty review.

- DNS dependency mapping: recursive chain audit
- Egress audit against live traffic: all four categories
- TLS termination analysis: boundary vs. external
- Network Sovereignty Test execution and documentation

Architecture Playbooks. Every Week.

Field-tested blueprints from real sovereign network environments — DNS exfiltration post-mortems, CDN TLS boundary failures, BGP routing independence patterns, and the egress audit frameworks that surface the Unknown egress category before an adversary does.

- DNS Sovereignty Architecture Patterns
- Egress Audit Execution & Unknown Channel Detection
- TLS Boundary Design & CDN Sovereignty Tradeoffs
- Network Plane Failure Post-Mortems


Architect’s Verdict

Sovereign networking is the enforcement layer. Not a security posture. Not a network architecture. The layer that either validates every sovereignty claim made by the Identity Plane and the Data Plane — or silently negates them.

The organizations that believe they have network sovereignty — and don’t — are not making obvious mistakes. They have private networks. They have perimeter firewalls. They have egress policies. They have split-horizon DNS and CDN-accelerated API endpoints and network segmentation across every environment they operate. What they have not done is run the test: disconnect all external paths, then ask whether the system can still resolve names, route workloads, authenticate access, and operate fully.

For most organizations, the answer reveals the same three failures. A DNS resolver that needs an upstream call for internal FQDNs that lack local authoritative answers. An API gateway that validates tokens against an external OIDC endpoint. A telemetry agent, a license checker, or an NTP client that reaches outside the boundary continuously, silently, and without any alert. None of these failures are visible. All of them are active. And all of them mean that a jurisdiction order, a provider outage, or an adversary who understands DNS as an exfiltration channel can act inside the boundary — not despite the sovereignty architecture, but through the gaps it left open.

The path to provable network sovereignty runs through four sub-layers and one discipline that most Stage 3 environments have never applied: a live egress audit against actual traffic, not policy documentation. Policy documents intent. Traffic captures reality. The gap between them — the Unknown egress category — is where DNS tunneling operates for 200 days before detection. It is where the management agent that was deployed for operational convenience becomes the covert channel that an adversary finds first.

The Rack2Cloud Sovereignty Control Plane Model is now complete. Four planes. Four independent architectures. One test that most organizations have never run. Build the network boundary that makes the test answerable. Then run it.

Sovereignty is provable or it is assumed. The Network Plane is where you find out which one you have.

Frequently Asked Questions

Q: Is a private network the same as a sovereign network?

A: No. A private network controls the data path: traffic travels on infrastructure you own or have exclusive use of. A sovereign network also controls the decisions that govern that infrastructure: DNS resolution, routing, API boundary enforcement, and egress authorization. A private network with external DNS delegates every name resolution decision to an external system. The wire is private. The control plane is not. Sovereign networking requires both: private infrastructure and control planes for that infrastructure that you operate independently.

Q: Why is DNS the most common sovereign networking failure?

A: Because DNS is a prerequisite for everything else — and it is the control plane most commonly left in the default configuration. Every workload call, every authentication event, every certificate validation, and every service discovery operation begins with a DNS resolution. Organizations configure firewalls, manage access policies, and audit IAM permissions — and then leave the recursive resolver pointing at 8.8.8.8. The resolver is the control plane that decides what your system can reach. External recursion is external control. The failure is common because DNS is infrastructure that “just works” until it doesn’t — and when it doesn’t under isolation, the entire dependent stack fails with it.
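A first check for this failure can be sketched in a few lines of Python. The sketch below is illustrative only: `SOVEREIGN_CIDRS` and `external_nameservers` are hypothetical names, the CIDR ranges are placeholders for your own boundary, and the input is assumed to be `resolv.conf`-style text.

```python
import ipaddress

# Assumption: these ranges stand in for the address space inside your boundary.
SOVEREIGN_CIDRS = [ipaddress.ip_network(c) for c in ("10.0.0.0/8", "192.168.0.0/16")]


def external_nameservers(resolv_conf_text, internal_networks=SOVEREIGN_CIDRS):
    """Return nameserver IPs from resolv.conf-style text that fall outside the boundary."""
    external = []
    for line in resolv_conf_text.splitlines():
        # Drop trailing '#' comments, then split into whitespace-separated fields.
        fields = line.split("#", 1)[0].split()
        if len(fields) >= 2 and fields[0] == "nameserver":
            addr = ipaddress.ip_address(fields[1])
            if not any(addr in net for net in internal_networks):
                external.append(str(addr))
    return external


sample = "search corp.internal\nnameserver 10.0.0.53\nnameserver 8.8.8.8  # public upstream\n"
print(external_nameservers(sample))
```

This only inspects the client-side resolver configuration. An internal resolver that forwards to a public upstream would pass this check, so the resolver's own forwarder configuration needs the same review.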

Q: What is the difference between egress control and a firewall?

A: A firewall enforces perimeter policy — which connections are permitted inbound and outbound based on port, protocol, and address rules. Egress control addresses what actually exits the boundary — including traffic that firewall policy permits by design. DNS on port 53 is typically permitted. NTP on port 123 is typically permitted. HTTPS on port 443 is typically permitted. All three are egress paths that carry sovereign networking failures — DNS recursive queries, external NTP, and vendor telemetry — through permitted firewall rules. Egress control requires auditing actual traffic against a sovereignty model, not verifying that firewall rules match a policy document.
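The difference between policy and traffic can be made concrete with a small comparison. This is a minimal sketch under stated assumptions: flows are taken to be `(src_ip, dst_ip, dst_port)` tuples already extracted from a capture, `BOUNDARY` is a placeholder range, and `audit_egress` is a hypothetical helper, not part of any tool.

```python
from collections import defaultdict
import ipaddress

# Assumption: address space inside the boundary; replace with your own ranges.
BOUNDARY = [ipaddress.ip_network("10.0.0.0/8")]


def audit_egress(flows, documented, boundary=BOUNDARY):
    """Split boundary-crossing flows into documented and undocumented destinations.

    flows: iterable of (src_ip, dst_ip, dst_port) tuples from a traffic capture.
    documented: set of (dst_ip, dst_port) pairs the egress policy says should exist.
    """
    report = {"documented": defaultdict(int), "undocumented": defaultdict(int)}
    for _src, dst, port in flows:
        addr = ipaddress.ip_address(dst)
        if any(addr in net for net in boundary):
            continue  # destination is inside the boundary, so this is not egress
        key = (dst, port)
        bucket = "documented" if key in documented else "undocumented"
        report[bucket][key] += 1
    return {name: dict(counts) for name, counts in report.items()}


flows = [
    ("10.0.0.5", "10.0.0.53", 53),     # internal DNS, never leaves
    ("10.0.0.5", "8.8.8.8", 53),       # external recursion, but documented
    ("10.0.0.7", "203.0.113.9", 443),  # HTTPS to a destination no policy mentions
    ("10.0.0.7", "203.0.113.9", 443),
]
report = audit_egress(flows, documented={("8.8.8.8", 53)})
print(report["undocumented"])
```

Note that "documented" here means only that the flow matches the policy. A documented external resolver is still a sovereignty finding; the undocumented bucket is the starting point for the Unknown category.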

Q: Does sovereign networking mean being disconnected from the internet?

A: No. Sovereign networking means that internet connectivity, or its absence, does not determine whether your control planes can function. An internet-connected system with independently controlled DNS, internally terminated TLS, and a complete egress audit can be sovereign. A system described as physically isolated that still has external DNS dependencies and unaudited management agent egress is not. The network boundary matters for the threat model. Control plane independence is the sovereignty definition.

Q: How does CDN use affect sovereign networking?

A: A CDN deployed as a reverse proxy in front of an HTTPS endpoint terminates TLS at its edge, before traffic reaches your application. The CDN operator decrypts every request, can inspect it, and re-encrypts it toward your origin, and in the standard configuration it holds the private key that makes this possible. For regulated workloads, CDN-terminated TLS means the CDN provider processes decrypted data on its infrastructure and under its jurisdiction. A government order served to the CDN is served to your API boundary. For non-regulated workloads, CDN termination is an acceptable performance and availability trade-off. For sovereign workloads, TLS must terminate within the sovereign boundary. The decision is binary: your boundary or theirs.
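One rough signal for where TLS terminates is whether an endpoint's hostname resolves to addresses inside the boundary at all. The sketch below assumes a placeholder `BOUNDARY` range; `terminates_inside` and `resolve` are hypothetical helper names. `resolve` performs a live lookup through the system resolver, so the example exercises only the pure check.

```python
import ipaddress
import socket

# Assumption: address space inside the sovereign boundary.
BOUNDARY = [ipaddress.ip_network("10.0.0.0/8")]


def terminates_inside(addresses, boundary=BOUNDARY):
    """True only if every address the endpoint resolves to is inside the boundary."""
    parsed = [ipaddress.ip_address(a) for a in addresses]
    return bool(parsed) and all(any(a in net for net in boundary) for a in parsed)


def resolve(hostname, port=443):
    """Resolve a hostname with the system resolver (performs a live DNS lookup)."""
    infos = socket.getaddrinfo(hostname, port, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


print(terminates_inside(["10.2.3.4"]))
print(terminates_inside(["10.2.3.4", "104.16.0.1"]))
```

An in-boundary address is necessary but not sufficient: it shows the connection lands inside the boundary, not who holds the private key. Treat a failing result as a finding and a passing result as a prompt to confirm where the key lives.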

Q: What is Trust Egress and why does it matter for sovereignty?

A: Trust Egress is the category of outbound connections that your infrastructure requires to maintain its own security posture — NTP time synchronization, OCSP certificate revocation checks, and CRL distribution point access. These are not application traffic and not telemetry. They are the operational dependencies of the cryptographic and authentication systems that sovereign infrastructure runs on. In isolated environments, blocked Trust Egress produces clock drift (token failures), inability to confirm certificate revocation (blast radius expansion during incidents), and authentication system failures that appear to be identity problems but are actually network sovereignty gaps. Trust Egress cannot simply be blocked — it must be served from within the sovereign boundary via internal NTP hierarchies, local OCSP responders, and locally hosted CRL endpoints.
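The clock-drift failure is easy to reproduce with arithmetic alone. The sketch below models a validator that checks a token's validity window against its own clock; the 300-second lifetime and 60-second leeway are illustrative assumptions, and both function names are hypothetical.

```python
def token_accepted(local_clock, not_before, expires_at, leeway=60):
    """Validate a token's time window (Unix seconds) against the validator's clock."""
    return (not_before - leeway) <= local_clock < (expires_at + leeway)


def drift_outcome(true_time, drift_seconds, token_lifetime=300, leeway=60):
    """Check a token issued at true_time on a validator whose clock has drifted."""
    return token_accepted(
        local_clock=true_time + drift_seconds,
        not_before=true_time,
        expires_at=true_time + token_lifetime,
        leeway=leeway,
    )


issued = 1_700_000_000
for drift in (0, -30, -90, 400):
    print(drift, drift_outcome(issued, drift))
```

With these assumed values, a validator running 90 seconds slow rejects a freshly issued token as not yet valid, and one running 400 seconds fast rejects it as expired. Both look like identity failures in logs, although the cause is an unsynchronized clock.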

Q: How often should an egress audit be run?

A: The initial egress audit should be run before any sovereign networking claim is made — against live traffic, not policy documentation. After that, a full re-audit is warranted whenever new software is deployed (particularly vendor-provided tooling with telemetry capabilities), when the infrastructure changes significantly, or when a new regulatory requirement is being satisfied. Quarterly re-audits are the minimum for environments that describe themselves as Stage 4 sovereign. The Unknown egress category — covert channels, DNS tunneling — requires continuous DNS query inspection and traffic analysis rather than periodic audits alone.
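Continuous DNS query inspection often starts with simple heuristics on the query name itself. The following is a minimal sketch, not a detection product: it flags names with very long labels or long, high-entropy labels, which is one common sign of encoded payloads. The thresholds (`max_label_len`, `min_len`, `entropy_threshold`) are assumptions chosen for illustration and would need tuning against your own query logs.

```python
import math
from collections import Counter


def shannon_entropy(text):
    """Shannon entropy of a string, in bits per character."""
    if not text:
        return 0.0
    counts = Counter(text)
    total = len(text)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


def looks_like_tunneling(qname, max_label_len=40, min_len=20, entropy_threshold=3.8):
    """Flag a query name if any label is very long, or long and high entropy."""
    labels = [label for label in qname.rstrip(".").split(".") if label]
    for label in labels:
        if len(label) > max_label_len:
            return True
        if len(label) >= min_len and shannon_entropy(label) >= entropy_threshold:
            return True
    return False


print(looks_like_tunneling("www.example.com"))
print(looks_like_tunneling("abcdefghijklmnopqrstuvwxyz012345.t.example.com"))
```

A heuristic like this produces false positives on long but legitimate names, such as generated service hostnames, and misses tunnels that use short, low-entropy labels. It is a triage signal to pair with query volume and destination analysis, not a verdict.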