The Nutanix Migration Stutter: Why AHV Cutovers Freeze High-IO Workloads

Infrastructure migration is not a compute event. It is a storage convergence event.

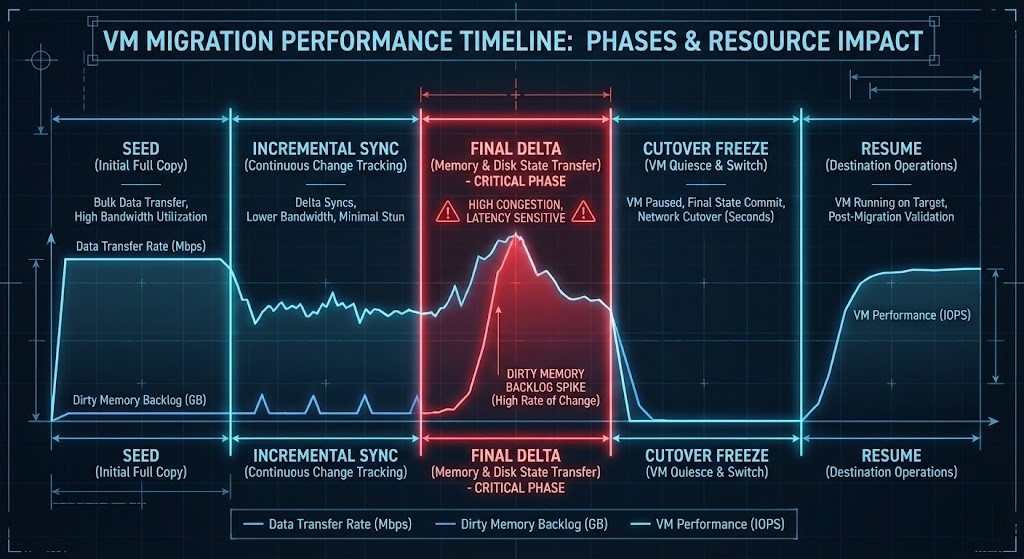

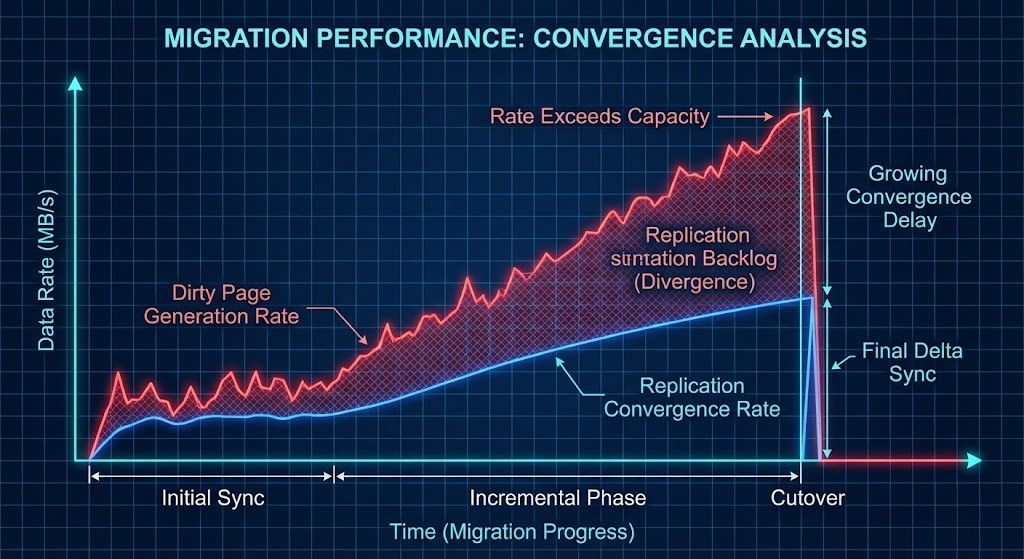

Most migration failures are not network failures. They occur during the final delta sync, when the system must quiesce writes, replicate dirty memory pages, finalize metadata, and flip compute ownership. On AHV, this is where the “stutter” appears.

Why This Feels Different Than ESXi

Architects moving from VMware often expect a “vMotion-like” experience, but the underlying physics differ. ESXi environments typically reach storage with less CVM-style mediation in the I/O path, and their memory convergence behaves differently during a move. Carrying ESXi-era tuning habits over to Nutanix is often what produces the “stutter.”

The Hidden Physics of the Final Cutover

Unlike vMotion, which relies on an aggressive pre-copy algorithm, Nutanix Move has to absorb whatever delta remains during the final phase, and that is where its unique “gravity” shows up. If a database heartbeat threshold is less than 3 seconds, even a brief I/O freeze can trigger a catastrophic failover.
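To make the heartbeat risk concrete, here is a minimal back-of-the-envelope sketch. It assumes the I/O freeze lasts roughly as long as it takes to drain the final backlog at a steady rate, which is a simplification rather than a description of how Move behaves internally. The function names and the sample numbers are hypothetical; only the 3-second threshold comes from the text above.

```python
def estimated_freeze_seconds(backlog_mb: float, drain_mb_per_s: float) -> float:
    """Rough freeze estimate: time to drain the final backlog at a steady rate."""
    if drain_mb_per_s <= 0:
        raise ValueError("drain rate must be positive")
    return backlog_mb / drain_mb_per_s


def survives_cutover(backlog_mb: float, drain_mb_per_s: float,
                     heartbeat_threshold_s: float = 3.0) -> bool:
    """True if the estimated freeze stays under the heartbeat threshold."""
    return estimated_freeze_seconds(backlog_mb, drain_mb_per_s) < heartbeat_threshold_s


if __name__ == "__main__":
    # Hypothetical numbers: 400 MB of backlog drains in 2 s, 900 MB in 4.5 s.
    print(survives_cutover(backlog_mb=400, drain_mb_per_s=200))
    print(survives_cutover(backlog_mb=900, drain_mb_per_s=200))
```

The useful takeaway is the ratio, not the absolute numbers: the freeze budget is set by the application's heartbeat, so the backlog has to shrink or the drain rate has to rise until the estimate fits inside it.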

1. The Dirty Page Problem

In high-transaction VMs—SQL log writers, Oracle redo logs, or high-IOPS file servers—the memory dirty rate often exceeds the rate at which Move can converge the delta.

- The Result: The final switchover must absorb a massive backlog of changes.

- The Mechanism: This is driven by application write bursts, log flush amplification, storage replication overhead, and intense metadata sync pressure.
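The convergence problem described above can be sketched with a simple iterative model. It assumes a constant dirty rate and a constant transfer rate, so each sync pass leaves behind whatever was dirtied while that pass was running. This is an illustrative model, not Move's actual algorithm, and every name and number in it is hypothetical.

```python
def sync_passes(initial_delta_mb: float, dirty_mb_per_s: float,
                transfer_mb_per_s: float, cutover_delta_mb: float,
                max_passes: int = 20) -> list[float]:
    """Return the delta size at the start of each sync pass.

    Stops once the delta is small enough to cut over, or after max_passes
    if the delta never shrinks below the cutover size.
    """
    if transfer_mb_per_s <= 0:
        raise ValueError("transfer rate must be positive")
    deltas = [initial_delta_mb]
    delta = initial_delta_mb
    for _ in range(max_passes):
        if delta <= cutover_delta_mb:
            break
        pass_seconds = delta / transfer_mb_per_s   # time to copy this delta
        delta = dirty_mb_per_s * pass_seconds      # data dirtied meanwhile
        deltas.append(delta)
    return deltas


if __name__ == "__main__":
    # Dirty rate below transfer rate: the delta shrinks every pass.
    print(sync_passes(1000.0, dirty_mb_per_s=50, transfer_mb_per_s=200,
                      cutover_delta_mb=20))
    # Dirty rate above transfer rate: the delta grows and never converges.
    print(sync_passes(1000.0, dirty_mb_per_s=250, transfer_mb_per_s=200,
                      cutover_delta_mb=20)[-1])
```

In this model each pass multiplies the delta by the ratio of dirty rate to transfer rate. A ratio below 1 converges geometrically; a ratio at or above 1 means the backlog can only be cleared by freezing writes, which is the stutter.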

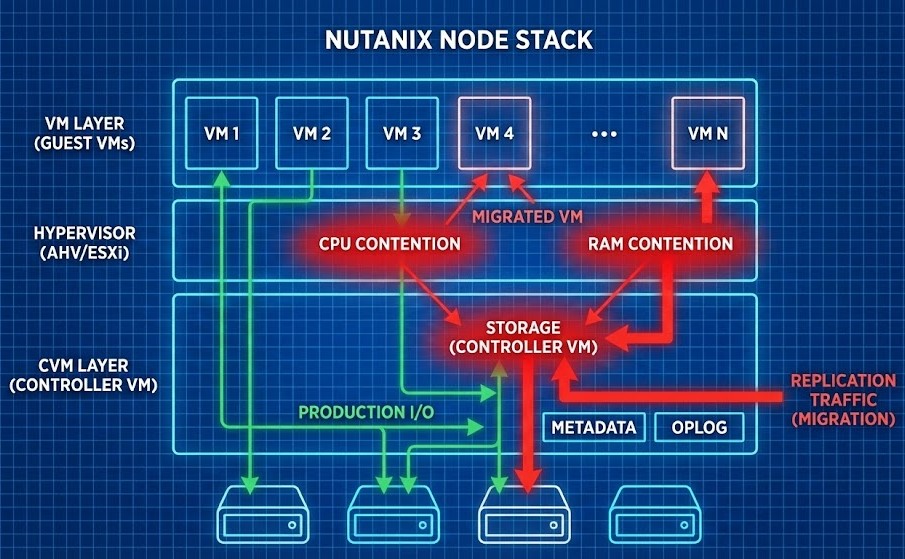

2. The Real Bottleneck: CVM Contention

The Controller VM (CVM) does not “pause” for migration; migration adds to the load it already carries. During a migration, the CVM ingests replication traffic while also reconciling metadata, maintaining replication topology, and servicing existing production I/O. If CVM RAM is undersized or its vCPUs are contended, storage latency rises sharply.
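One way to see why latency rises so sharply is the textbook single-server queue (M/M/1), where mean response time is service time divided by one minus utilization. A CVM is not an M/M/1 queue, so treat this purely as an illustration of the nonlinearity; the service time and IOPS figures are hypothetical.

```python
def mean_latency_ms(iops: float, service_time_ms: float) -> float:
    """Mean response time of an M/M/1 queue, in milliseconds.

    utilization = arrival rate * service time; latency grows without
    bound as utilization approaches 1.
    """
    utilization = iops * service_time_ms / 1000.0
    if utilization >= 1.0:
        return float("inf")  # the queue is saturated
    return service_time_ms / (1.0 - utilization)


if __name__ == "__main__":
    service_ms = 0.125            # hypothetical: capacity of 8,000 IOPS
    production_iops = 4000        # hypothetical steady-state load
    replication_iops = 3600       # hypothetical extra load during migration
    print(mean_latency_ms(production_iops, service_ms))
    print(mean_latency_ms(production_iops + replication_iops, service_ms))
```

With these numbers, production alone sits at 50% utilization and about 0.25 ms. Adding the replication load pushes utilization to 95% and latency to roughly 2.5 ms, a tenfold increase from less than double the I/O.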

The Deterministic Fix Blueprint

1️⃣ Temporarily Lift Container QoS

Remove I/O caps and allow maximum ingest throughput. This prevents artificial throttling during final convergence.

2️⃣ Pre-Inject VirtIO Drivers

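Injecting current VirtIO drivers into the guest before cutover means the VM can recognize its AHV virtual devices as soon as it boots on the target. This reduces re-discovery latency and avoids post-cutover I/O stalls.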

3️⃣ CVM Resource Reservation Boost

Increase CVM RAM allocation and pin vCPUs to physical cores to avoid oversubscription during the migration window.

4️⃣ The Quiet Window Strategy

Reduce dirty page churn by pausing heavy batch jobs and reducing log shipping bursts.
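Picking the quiet window can be done from data rather than intuition. The sketch below assumes you already have per-hour write volume for the VM from whatever monitoring you use; it then finds the contiguous window with the lowest total writes using a sliding sum. The function name and sample values are hypothetical, and windows that wrap past the end of the series are not considered.

```python
def quietest_window(write_mb_per_hour: list[float],
                    window_hours: int) -> tuple[int, float]:
    """Return (start_index, total_mb) of the lowest-write contiguous window."""
    n = len(write_mb_per_hour)
    if not 0 < window_hours <= n:
        raise ValueError("window must fit inside the sample series")
    current = sum(write_mb_per_hour[:window_hours])
    best_start, best_total = 0, current
    for start in range(1, n - window_hours + 1):
        # Slide the window: add the hour entering, drop the hour leaving.
        current += write_mb_per_hour[start + window_hours - 1]
        current -= write_mb_per_hour[start - 1]
        if current < best_total:
            best_start, best_total = start, current
    return best_start, best_total


if __name__ == "__main__":
    samples = [90, 80, 20, 10, 15, 70, 95]   # hypothetical MB written per hour
    print(quietest_window(samples, window_hours=2))
```

For the sample series, the lowest two-hour window starts at index 3 with 25 MB written. Scheduling the cutover there, with batch jobs and log shipping paused, keeps the final delta as small as the workload allows.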

Pre-Migration Risk Checklist

Before you hit “Cutover,” measure these signals. If they are out of spec, you are at risk for a stutter:

Master the Knowledge Loop

This analysis is a core module within our virtualization architecture series.

Infrastructure migration is not a mobility event. It is a convergence event.

If dirty memory outpaces storage reconciliation, the stutter is not a bug. It is physics. Master the convergence layer, and the cutover becomes deterministic.

